API Design

EE 547 - Unit 6

Spring 2026

Outline

API Foundations

- Request/response structure and status codes

- Methods, idempotency, and safe operations

- Headers and connection reuse

- Resource-oriented design

- Statelessness and scalability

- OpenAPI specifications and schema validation

- Versioning and breaking changes

- Pagination strategies for large collections

Security and Implementation

- Password hashing with salt and work factors

- JWT structure and stateless tokens

- OAuth 2.0 authorization flows

- Session management trade-offs

- Request routing and data extraction

- Production deployment with Gunicorn

- Resource-based permissions

- Scope-based access control

Service Boundaries Define Why APIs Exist

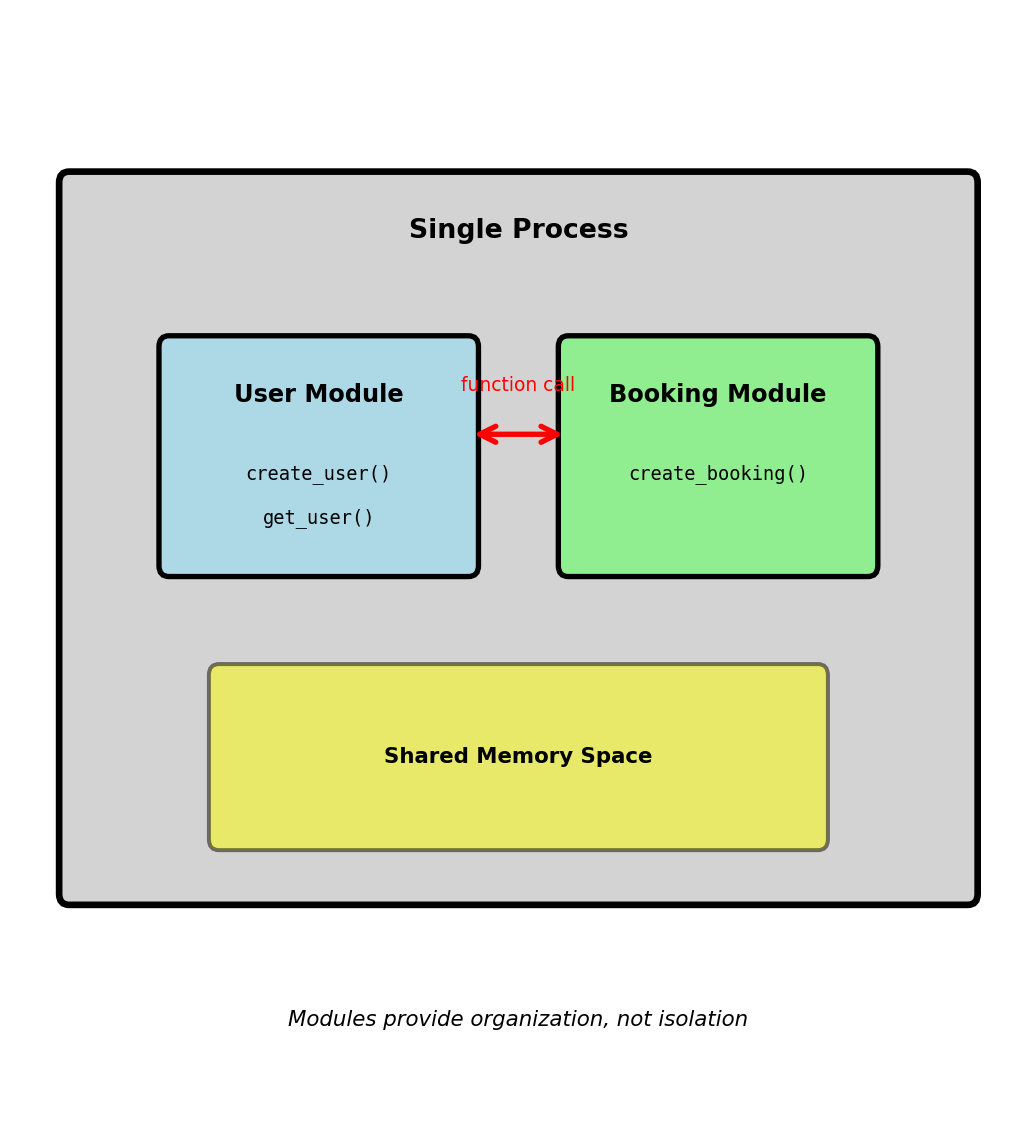

Code Organization - From Functions to Services

Software engineering fundamental: Separating concerns through interfaces

Within a single application:

    # User management module
    def create_user(email, password):
        user_id = generate_id()
        hash_pwd = hash_password(password)
        store_user(user_id, email, hash_pwd)
        return user_id

    # Booking module
    def create_booking(user_id, flight_id):
        user = get_user(user_id)  # Function call
        if user.is_active:
            return store_booking(user_id, flight_id)

Module boundaries provide:

- Separation of concerns: User logic isolated from booking logic

- Independent modification: Change password hashing without touching bookings

- Contract-based interface: get_user(user_id) → User defines expectations

- Parallel development: Different developers work on different modules

Single process limitation:

- Shared memory space: Module bug can crash entire application

- Shared resources: CPU-intensive user validation blocks booking requests

- Deployment coupling: Update user module requires redeploying bookings

- Technology lock-in: All modules must use same language/framework

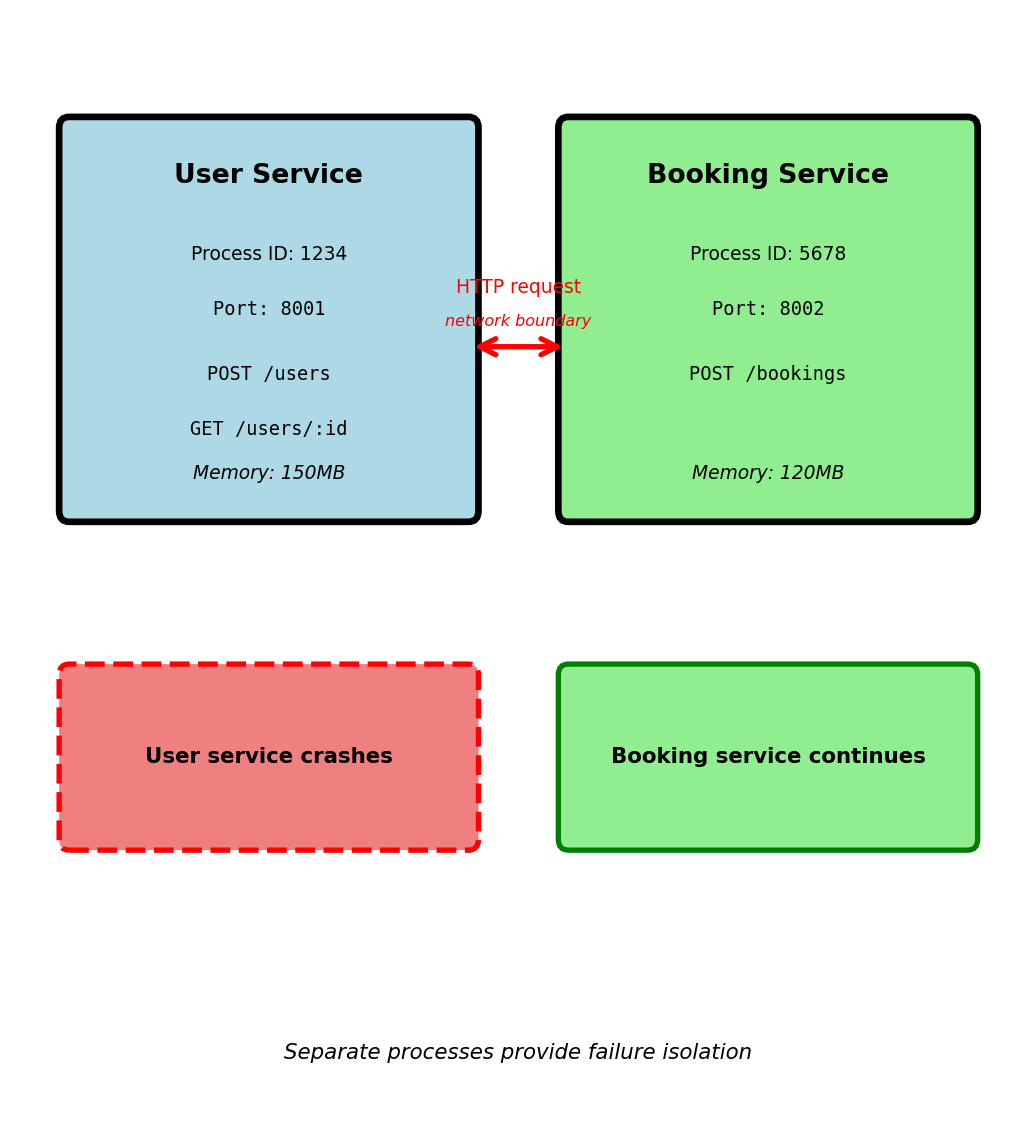

Process Isolation - Separate Failure Domains

Moving from modules to separate processes

Same code, different execution model:

    # User service (separate process)
    # Listens on port 8001
    from flask import Flask, request

    app = Flask(__name__)

    @app.route('/users', methods=['POST'])
    def create_user():
        email = request.json['email']
        password = request.json['password']
        user_id = generate_id()
        hash_pwd = hash_password(password)
        store_user(user_id, email, hash_pwd)
        return {'user_id': user_id}

    # Booking service (separate process)
    # Listens on port 8002
    import requests
    from flask import Flask, request

    app = Flask(__name__)

    @app.route('/bookings', methods=['POST'])
    def create_booking():
        user_id = request.json['user_id']
        flight_id = request.json['flight_id']
        # HTTP request instead of function call
        response = requests.get(f'http://localhost:8001/users/{user_id}')
        user = response.json()
        if user['is_active']:
            return store_booking(user_id, flight_id)
        # Inactive user: return an explicit error (status code is an assumption)
        return {'error': 'User is not active'}, 403

Why separate processes:

- Failure isolation: User service crash doesn’t terminate booking service

- Resource isolation: CPU-intensive user validation doesn’t block booking requests

- Independent deployment: Update user service without restarting booking service

- Technology flexibility: User service in Python, booking service in Go

API Contract - Defining Service Boundaries

API: Application Programming Interface - contract for communication

Function call contract:

HTTP API contract for same operation:

Request: GET /users/123 on host user-service:8001

Success response: HTTP 200 OK

Not found response: HTTP 404 Not Found

API contract specifies:

- Endpoint: GET /users/123 identifies resource and operation

- Input format: User ID in URL path

- Success response: JSON with user_id, email, is_active fields (status 200)

- Error response: JSON with error message (status 404)
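A consumer can check a response against the contract above. The following is a minimal sketch, not the course's implementation: the function name check_user_contract and the use of plain dicts for the parsed JSON body are assumptions made for illustration.

```python
from typing import Any

# Field names and types taken from the contract described above.
USER_FIELDS = {"user_id": int, "email": str, "is_active": bool}


def check_user_contract(status: int, body: dict[str, Any]) -> list[str]:
    """Return a list of contract violations (an empty list means the response conforms)."""
    problems: list[str] = []
    if status == 200:
        for name, expected in USER_FIELDS.items():
            if name not in body:
                problems.append(f"missing field: {name}")
            elif type(body[name]) is not expected:
                # Exact type check so that True is not accepted as an integer ID.
                problems.append(f"wrong type for {name}: {type(body[name]).__name__}")
    elif status == 404:
        if not isinstance(body.get("error"), str):
            problems.append("404 body must carry a string 'error' message")
    else:
        problems.append(f"status {status} is not covered by this contract")
    return problems
```

Run as part of a test suite, a check like this turns a contract violation into a failing test before it becomes a runtime failure in a consumer.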

Why explicit contracts matter:

Services can evolve independently:

- Booking service knows the user API returns is_active field

- User service can change implementation (database, caching) without breaking bookings

- Contract violations detected immediately: 404 instead of silent failure

API documentation as contract:

GET /users/:user_id — Retrieve user by ID

Parameters:

- user_id (integer, path, required): User identifier

Responses:

- 200 User found: Returns user_id (integer), email (string), is_active (boolean)

- 404 Not found: Returns error (string), user_id (integer)

- 500 Server error: Internal error occurred

Contract enforcement:

- Booking service expects specific JSON structure

- User service must provide that structure

- Change requires coordination: Update contract, then implementation

- Compare to function call: Type system enforces contract at compile time

APIs make implicit function contracts explicit and enforceable

Independent Evolution - Versioning and Breaking Changes

Scenario: User service needs to add email verification

Version 1 response: GET /users/123

Version 2 - Adding fields (backward compatible)

Backward compatible change:

- Booking service ignores unknown fields

- Continues using is_active as before

- No coordination required

Version 2 - Breaking change (not compatible)

Problem: Booking service still reads is_active field

- Field doesn’t exist in response

- Booking service interprets as false or crashes

- Breaking change requires coordinated deployment
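One defensive option on the client side is a reader that accepts both response shapes during a migration. This is a sketch under assumptions: it supposes the breaking change replaces the boolean is_active with a string account_status whose active value is "active". The helper name is_user_active and that value are hypothetical.

```python
def is_user_active(user: dict) -> bool:
    """Read the activity flag from either response shape.

    Assumption: the newer shape carries a string `account_status`
    and the value "active" means the account is usable.
    """
    if "is_active" in user:
        return bool(user["is_active"])
    if "account_status" in user:
        return user["account_status"] == "active"
    # Neither field present: fail loudly instead of silently treating the user as inactive.
    raise KeyError("response has neither 'is_active' nor 'account_status'")
```

Raising on an unknown shape makes the contract violation visible right away, instead of letting a missing field be read as false.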

Version management strategies:

URL-based versioning:

- GET /v1/users/123 → Old response (includes is_active)

- GET /v2/users/123 → New response (includes account_status)

- Booking service continues using /v1/users

- New services can use /v2/users

- User service maintains both versions temporarily

Version distribution (airline system, 45 days after v2 launch):

- v1 endpoint: 12% of requests (older services)

- v2 endpoint: 88% of requests (updated services)

Cannot remove v1 until 100% migrated

Why versioning needed:

- 20 services depend on user API

- Coordinated update across 20 services: weeks of planning

- Independent updates with versioning: gradual rollout

- Compare to function signature change: Compiler forces simultaneous update

APIs enable independent deployment through versioning

Multiple Clients - Contract Stability

API serves multiple independent consumers

Four clients calling GET /users/123:

- Booking service - Checks is_active before creating booking

- Email service - Sends email to user['email']

- Admin dashboard - Displays user profile

- Mobile app - Renders profile screen (external client via https://api.airline.com)

All four clients depend on same contract

Client code example:

Internal change in user service:

    # Original: Users stored in PostgreSQL
    def get_user(user_id):
        row = db.query("SELECT * FROM users WHERE id = ?", user_id)
        return {
            'user_id': row['id'],
            'email': row['email'],
            'is_active': row['active']
        }

    # New: Users moved to Redis cache (performance improvement)
    def get_user(user_id):
        cached = redis.get(f'user:{user_id}')
        if cached:
            return json.loads(cached)
        # Fallback to database...

Impact on clients: None

- API contract unchanged: Still returns same JSON structure

- Implementation details hidden behind API

- Database → Redis migration invisible to consumers

- No client code changes required

Contract violation example:

User service developer changes field name:

Cascading failures:

- Booking service: KeyError: 'email' when sending confirmation

- Email service: Crashes attempting to read user email

- Admin dashboard: Profile page displays blank email

- Mobile app: 500 errors rendered to users

4 clients break simultaneously from single field rename

Why contracts matter with multiple clients:

- Function rename in module: Compiler catches all call sites

- API field rename: No compile-time check, runtime failures

- More clients = higher cost of breaking changes

- Explicit contract + automated testing prevents accidental breakage

APIs require stability when serving multiple independent clients

HTTP Structures Client-Server Communication

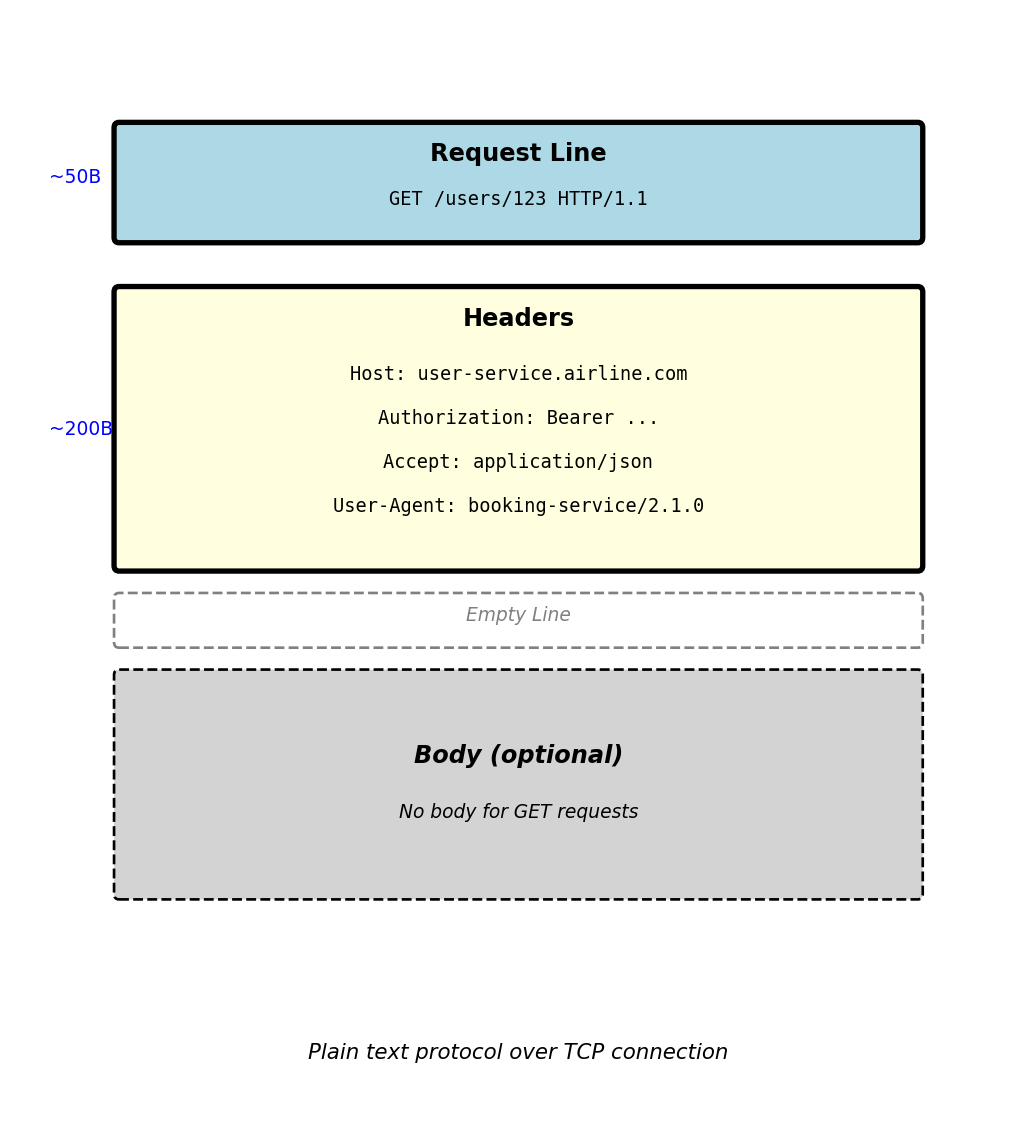

HTTP Request Structure - Client to Server

HTTP request anatomy:

GET /users/123 HTTP/1.1

Host: user-service.airline.com

Authorization: Bearer eyJhbGc...

Accept: application/json

User-Agent: booking-service/2.1.0

Request line components:

- Method: GET - what operation to perform

- Path: /users/123 - which resource to access

- Protocol: HTTP/1.1 - version of HTTP

Request headers (metadata):

- Host: Which server to route to (required in HTTP/1.1)

- Authorization: Credentials for authentication

- Accept: What response format client understands

- User-Agent: Identifies client making request

Headers are key-value pairs: Header-Name: value

Empty line separates headers from body

Requests without body (GET, DELETE) end after headers

Typical request size:

- Typical GET request: 250-400 bytes

- Headers: 200-350 bytes

- Request line: 50 bytes

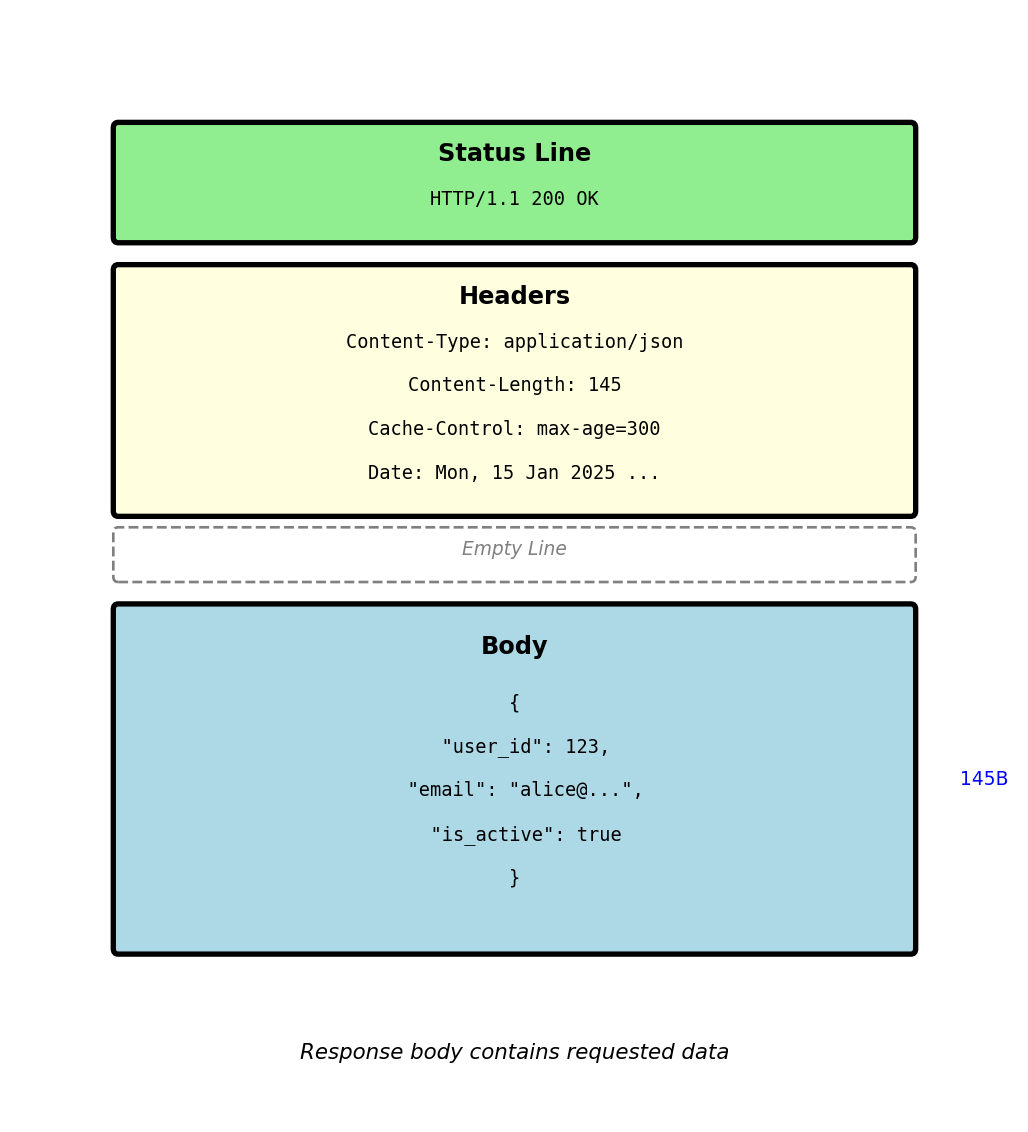

HTTP Response Structure - Server to Client

HTTP response anatomy:

HTTP/1.1 200 OK

Content-Type: application/json

Content-Length: 145

Cache-Control: max-age=300

Date: Wed, 15 Jan 2025 14:30:00 GMT

Status line components:

- Protocol: HTTP/1.1

- Status code: 200 - numeric result indicator

- Reason phrase: OK - human-readable description

Response headers:

- Content-Type: Format of response body (JSON, HTML, etc)

- Content-Length: Body size in bytes

- Cache-Control: How long response can be cached

- Date: When response was generated

Response body:

- Actual data returned by server

- Format specified by Content-Type header

- In this case: JSON with user data

Empty line separates headers from body (same as request)

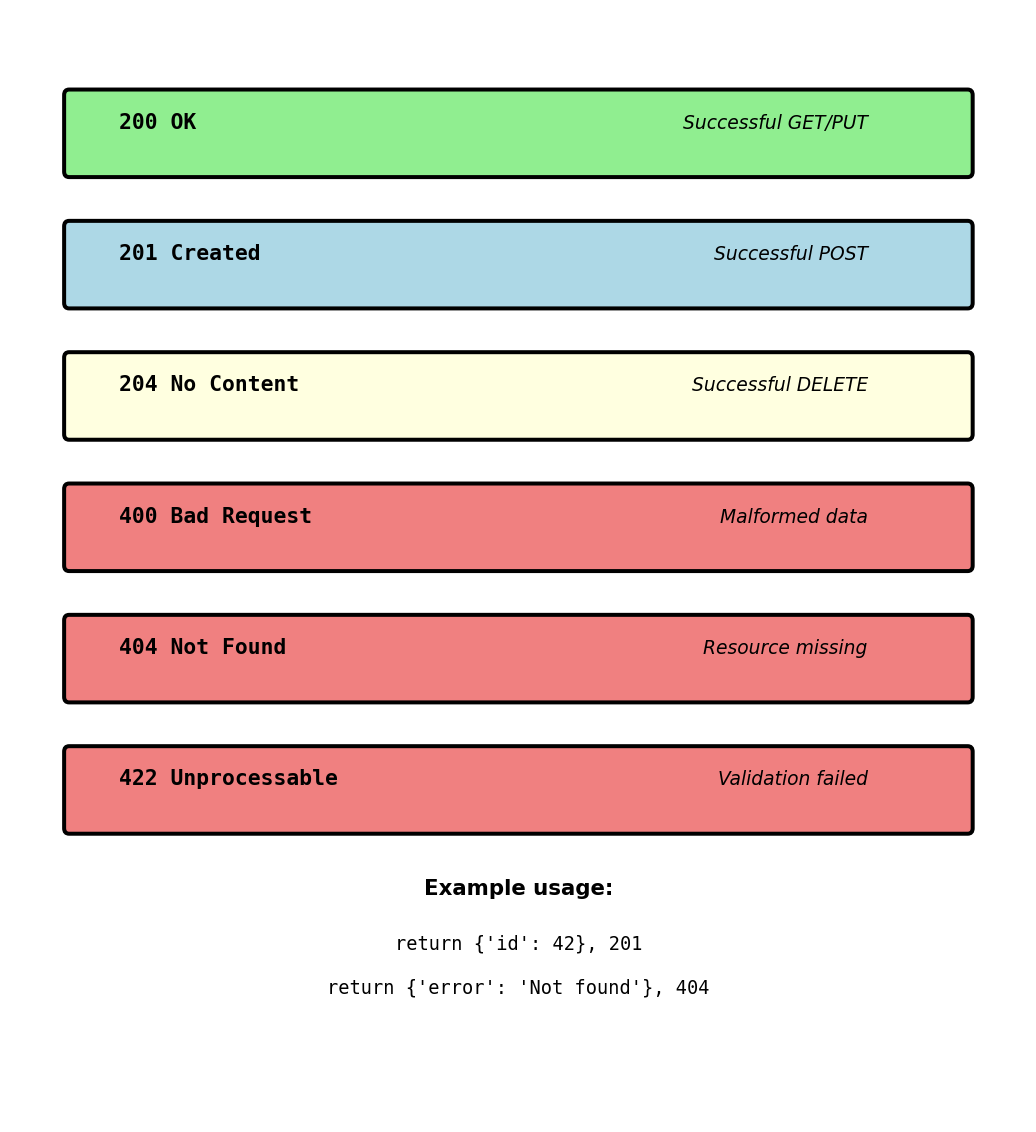

Status Codes Determine Client Behavior

Status code tells client what happened and what to do next

    response = requests.get('http://user-service/users/123')

    if response.status_code == 200:
        user = response.json()      # Success - process data
    elif response.status_code == 404:
        return None                 # User doesn't exist - normal case
    elif response.status_code == 401:
        refresh_token()             # Get new auth token
        retry_request()             # Try again
    elif response.status_code == 503:
        time.sleep(5)               # Service down
        retry_request()             # Retry with backoff
    elif response.status_code >= 500:
        alert_ops_team()            # Server problem
        return fallback_response()

Different codes require different handling:

2xx: Process response

4xx: Fix request or handle business logic

5xx: Retry or use fallback

Common status codes in production:

200 OK — Request succeeded

Return data in response body

201 Created — Resource created

Location header has new resource URL

204 No Content — Success, no data

DELETE succeeded, nothing to return

400 Bad Request — Malformed request

Invalid JSON, missing required field

401 Unauthorized — No valid auth

Token expired or missing

403 Forbidden — Not allowed

Valid auth but wrong permissions

404 Not Found — Resource missing

Normal for checking existence

429 Too Many Requests — Rate limited

Check Retry-After header

500 Internal Server Error — Bug

Unhandled exception in server

503 Service Unavailable — Overloaded

Retry with exponential backoff

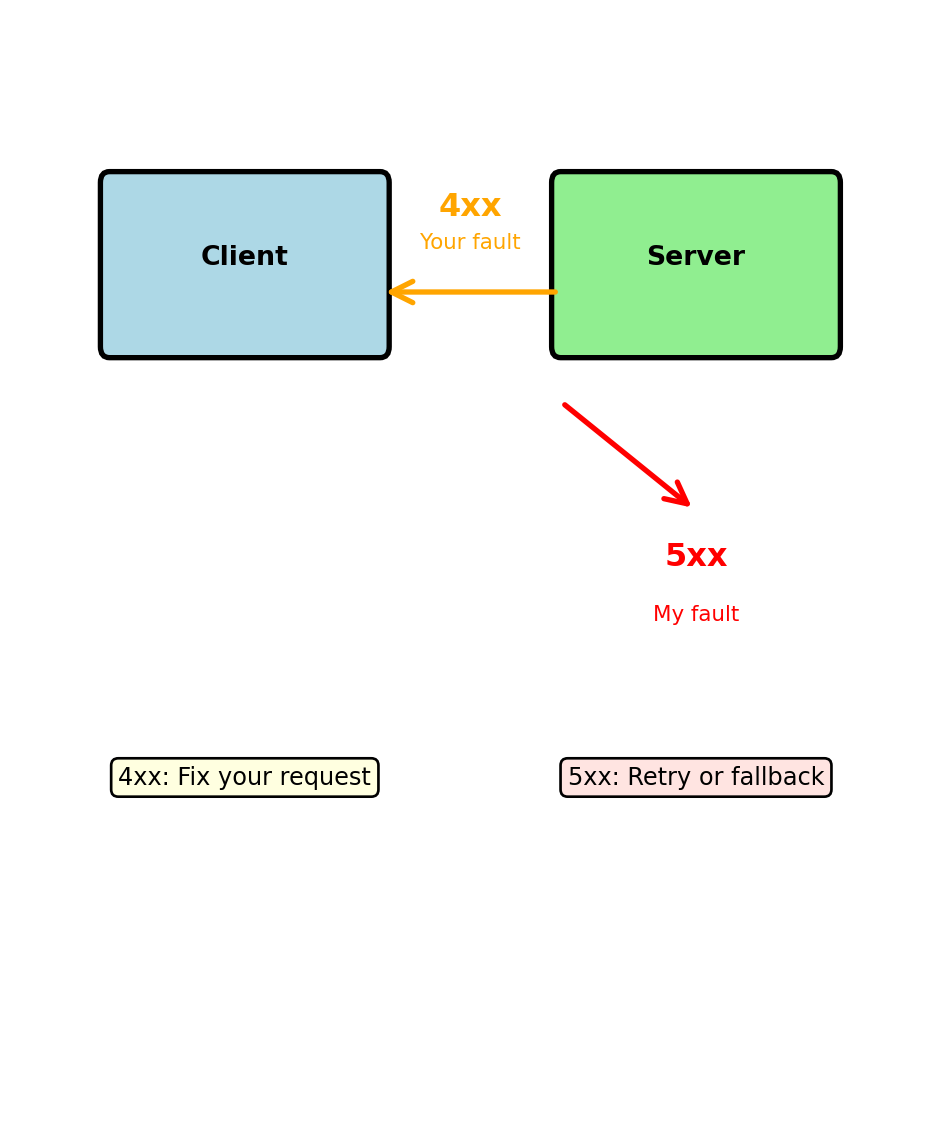

4xx vs 5xx: Client Problem vs Server Problem

4xx = Your request has a problem

POST /users

Content-Type: application/json

{"email": "not-an-email", "age": "twenty"}

Response: 400 Bad Request

Client must fix the request:

- Validate input before sending

- Check required fields

- Use correct data types

5xx = Server has a problem

Response: 500 Internal Server Error

Client should retry (server might recover):

- Use exponential backoff

- Have circuit breaker

- Log for debugging

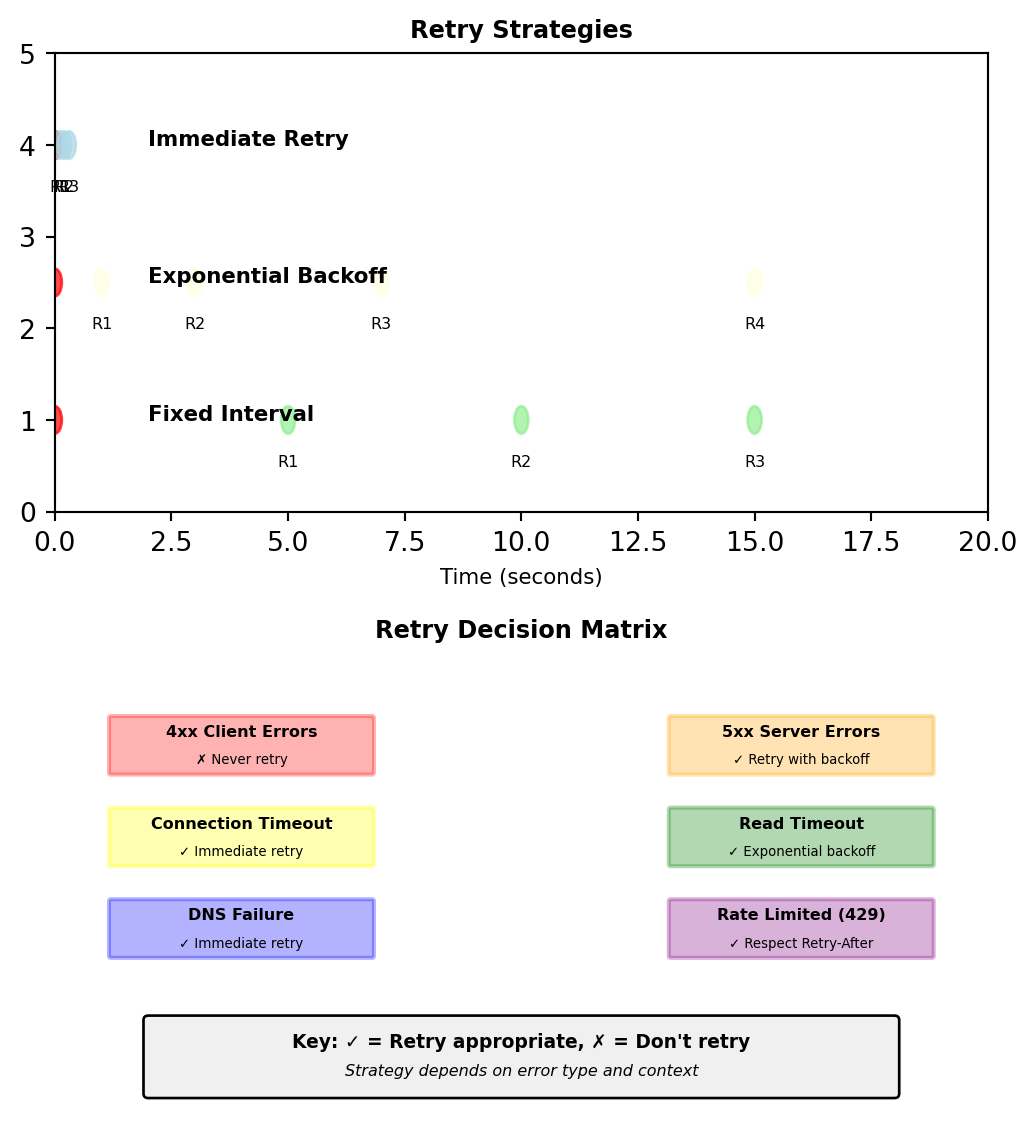

Retry strategies differ:

4xx errors: Don’t retry same request

- Fix the problem first

- 401: Get new token

- 429: Wait for rate limit reset

5xx errors: Retry might work

- Server might recover

- Different server might work

- Use exponential backoff

429 and 503: Handling Overload

429 Too Many Requests — You’re sending too fast

HTTP/1.1 429 Too Many Requests

X-RateLimit-Limit: 100

X-RateLimit-Remaining: 0

X-RateLimit-Reset: 1697299200

Retry-After: 60

Client must slow down:
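A client can derive its wait time from the headers in the 429 response. The sketch below handles only the seconds form of Retry-After. It assumes X-RateLimit-Reset is a Unix timestamp, as in the example response. The function name seconds_to_wait and the 1-second default are illustrative choices.

```python
import time
from typing import Mapping, Optional


def seconds_to_wait(headers: Mapping[str, str], now: Optional[float] = None) -> float:
    """Pick a wait time from 429 response headers.

    Prefers Retry-After (delay in seconds) and falls back to
    X-RateLimit-Reset, assumed here to be a Unix timestamp.
    """
    now = time.time() if now is None else now
    retry_after = headers.get("Retry-After")
    if retry_after is not None and retry_after.isdigit():
        return float(retry_after)
    reset = headers.get("X-RateLimit-Reset")
    if reset is not None and reset.isdigit():
        return max(0.0, float(reset) - now)
    return 1.0  # Assumed default when the server gives no hint
```

A plain dict lookup is case-sensitive, so real code would read headers through the HTTP library's case-insensitive header object.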

503 Service Unavailable — Server overloaded

HTTP/1.1 503 Service Unavailable

Retry-After: 30

Server is temporarily unable to handle requests:

- Too many connections

- Database down

- Deployment in progress

Different causes, different handling:

429 = Rate limiting (intentional)

- Protects service from abuse

- Per-client limits

- Predictable reset times

- Client should queue/batch requests

503 = Overload (unintentional)

- Service can’t handle load

- Affects all clients

- Unknown recovery time

- Client should back off

Exponential backoff pattern:
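A minimal sketch of exponential backoff with jitter follows. The helper names backoff_delay and retry_with_backoff, the 1-second base, and the 30-second cap are assumptions for illustration. Treating every exception as retryable is a simplification: real code would retry only on 5xx or 429 responses.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 30.0) -> float:
    """Upper bound on the delay before retry number `attempt` (0-based): base * 2^attempt, capped."""
    return min(cap, base * (2 ** attempt))


def retry_with_backoff(call: Callable[[], T], max_attempts: int = 5,
                       base: float = 1.0, cap: float = 30.0,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    """Call `call` until it succeeds or the attempts run out."""
    for attempt in range(max_attempts):
        try:
            return call()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # Out of attempts: surface the last error
            delay = backoff_delay(attempt, base, cap)
            # Full jitter: sleep a random time up to the bound so that
            # many clients do not retry in lockstep.
            sleep(random.uniform(0, delay))
    raise RuntimeError("max_attempts must be at least 1")
```

The sleep function is injectable so that the retry logic can be tested without waiting.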

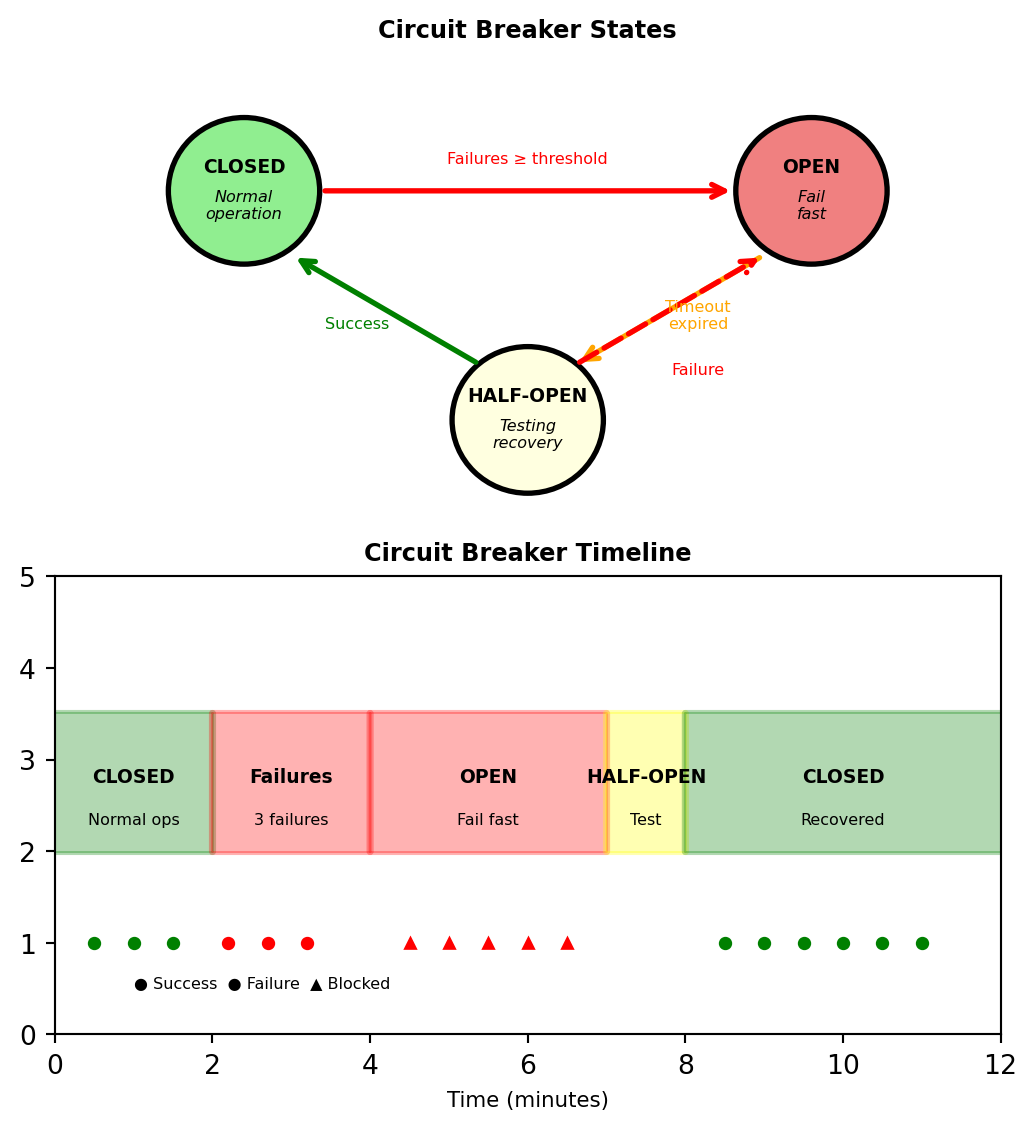

Circuit breaker pattern:

- After N failures, stop trying

- Let service recover

- Periodically test if service back
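The three steps above can be sketched as a small class. This is an illustrative, single-threaded version. The names CircuitBreaker and CircuitOpenError, the failure threshold, and the reset window are assumptions, not a reference implementation.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker refuses to call the downstream service."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; skipping call")
            # Reset window elapsed: let one trial call through (half-open).
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # Open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

While the circuit is open, callers fail fast without touching the struggling service, which gives it room to recover.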

Methods Define What Happens to Resources

HTTP methods specify the operation type

GET — Read data

GET /users/123

Returns user 123’s data. No changes to server state.

POST — Create new

POST /users

{"email": "alice@example.com", "password": "..."}

Creates new user. Server assigns ID.

PUT — Replace entirely

PUT /users/123

{"email": "new@example.com", "is_active": false}

Replaces ALL fields of user 123.

PATCH — Update partially

PATCH /users/123

{"email": "new@example.com"}

Updates ONLY email, leaves other fields unchanged.

DELETE — Remove

DELETE /users/123

Removes user 123 from system.

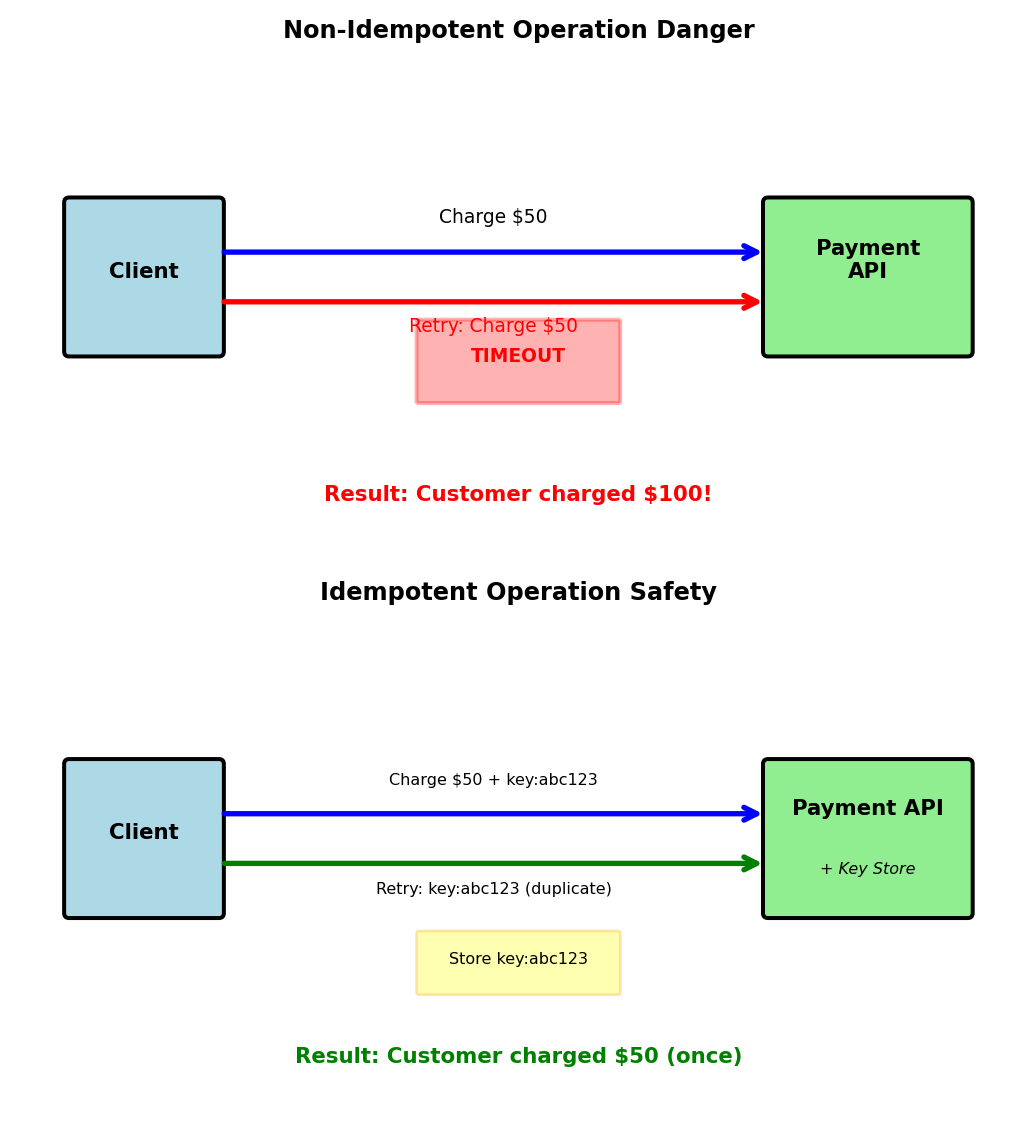

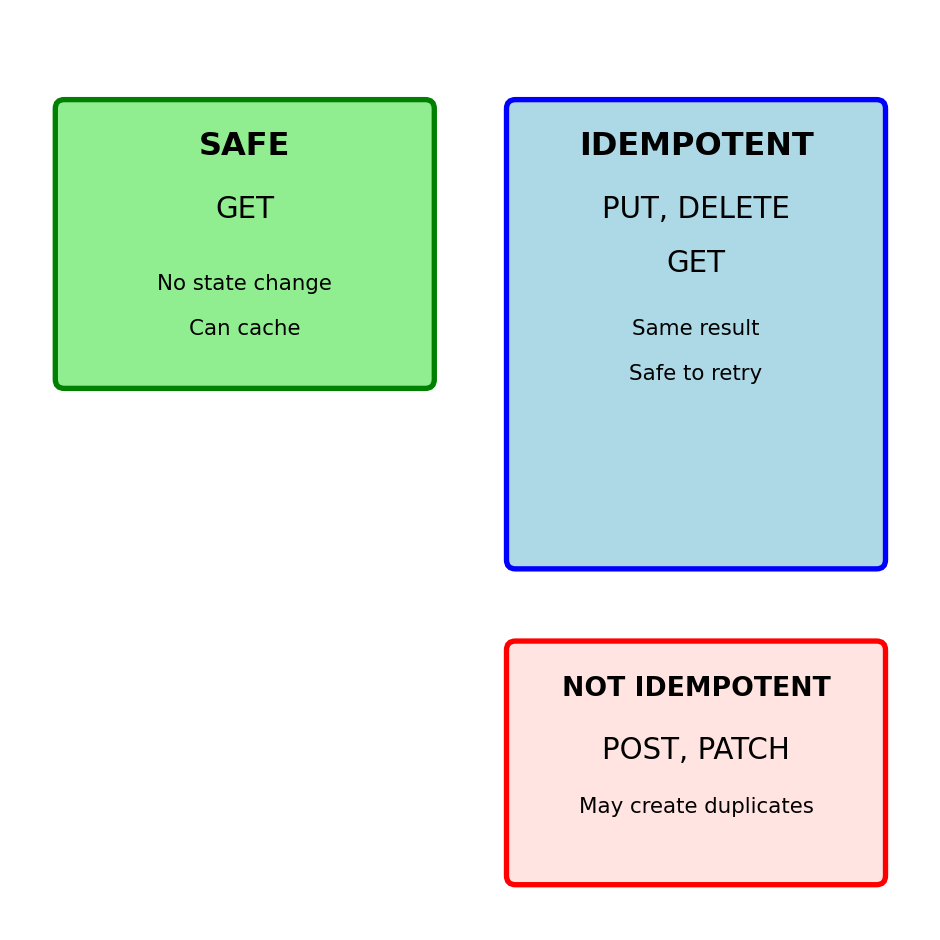

Critical property: Idempotency

Idempotent = Same result from multiple identical calls

| Method | Idempotent | Safe | Use Case |

|---|---|---|---|

| GET | Yes | Yes | Read data |

| POST | No | No | Create new |

| PUT | Yes | No | Replace all |

| PATCH | No | No | Update some |

| DELETE | Yes | No | Remove |

Why idempotency matters:

Network fails after server processes but before client gets response.

Idempotent (PUT, DELETE):

- Client can safely retry

- No duplicate side effects

Not idempotent (POST):

- Retry might create duplicate

- Need idempotency keys

Safe = No server state changes

Of the methods above, only GET is safe (responses can be cached and prefetched); HEAD and OPTIONS, not covered here, are also safe

POST vs PUT: Creation Patterns

POST - Server assigns identifier

PUT - Client specifies identifier

POST is not idempotent:
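The three points above can be illustrated with an in-memory stand-in for a user collection. UserStore and its methods are hypothetical names for this sketch. The status codes mirror the conventions described in this unit.

```python
import itertools


class UserStore:
    """In-memory stand-in for a user collection (for illustration only)."""

    def __init__(self) -> None:
        self.users = {}
        self._ids = itertools.count(1)

    def post(self, data: dict) -> tuple:
        """POST /users: server assigns the ID; every call creates a new resource."""
        user_id = next(self._ids)
        self.users[user_id] = dict(data)
        return 201, user_id

    def put(self, user_id: int, data: dict) -> tuple:
        """PUT /users/<id>: client names the ID; create or fully replace."""
        status = 200 if user_id in self.users else 201
        self.users[user_id] = dict(data)
        return status, user_id
```

Two identical post calls leave two users in the store, while two identical put calls to the same ID leave one.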

When to use each:

Use POST when:

- Server generates IDs (auto-increment, UUID)

- Creating dependent resources

- Running actions/commands

- Resource location unknown

Use PUT when:

- Client controls IDs

- Replacing entire resource

- Upsert operations (create or update)

- Resource location known

Real examples:

GitHub:

POST /repos/owner/repo/issues

# Creates issue, GitHub assigns number

PUT /repos/owner/repo/contents/README.md

# Creates/replaces file at exact path

AWS S3:

PUT /bucket/object-key

# Always PUT - client controls key

# Creates new or replaces existing

Idempotency in practice:

- PUT same data multiple times = one resource

- POST same data multiple times = multiple resources (or error)

PATCH vs PUT: Partial vs Full Updates

PUT replaces entire resource

# Current user state

{

"id": 123,

"email": "alice@example.com",

"name": "Alice",

"role": "user",

"is_active": true

}

# PUT request (missing fields)

PUT /users/123

{

"email": "alice@example.com",

"name": "Alice Updated"

}

# Result - other fields lost/defaulted

{

"id": 123,

"email": "alice@example.com",

"name": "Alice Updated",

"role": null, # Lost!

"is_active": false # Lost!

}

PATCH updates only specified fields

Common PATCH formats:

JSON Merge Patch (simple):

JSON Patch (RFC 6902):
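To make the two formats concrete, here is a sketch of JSON Merge Patch semantics as defined in RFC 7396, followed by the same email change written as a JSON Patch document. The function name merge_patch and the variable json_patch_doc are illustrative. Applying a JSON Patch document is not implemented here.

```python
from typing import Any


def merge_patch(target: Any, patch: Any) -> Any:
    """Apply a JSON Merge Patch (RFC 7396) and return the patched value."""
    if not isinstance(patch, dict):
        return patch  # A non-object patch replaces the target wholesale
    result = dict(target) if isinstance(target, dict) else {}
    for key, value in patch.items():
        if value is None:
            result.pop(key, None)  # null means "remove this field"
        else:
            result[key] = merge_patch(result.get(key), value)
    return result


# The same email change as a JSON Patch (RFC 6902) document:
# an explicit list of operations instead of a partial object.
json_patch_doc = [
    {"op": "replace", "path": "/email", "value": "new@example.com"},
]
```

Merge Patch is compact but cannot set a field to null, because null already means removal. JSON Patch is more verbose but can express that and other operations explicitly.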

When to use each:

PUT:

- Form submissions (have all fields)

- Config file updates

- Immutable updates

PATCH:

- Single field updates

- Large resources

- Partial forms

- Mobile apps (bandwidth)

Common mistake: Using PUT for single field update loses data

Safe and Unsafe Methods: Retry Implications

Safe methods can be called without side effects

Unsafe methods change server state

# DELETE is idempotent but unsafe

DELETE /users/123 # Returns 204 No Content

DELETE /users/123 # Returns 404 Not Found

DELETE /users/123 # Returns 404 Not Found

# Final state same, but state did change

# POST is neither safe nor idempotent

POST /orders # Creates order 1

POST /orders # Creates order 2 (duplicate!)

POST /orders # Creates order 3 (duplicate!)

Network failure handling:

Retry safety:

Always safe: GET

Safe if idempotent: PUT, DELETE

Dangerous: POST, PATCH

Need idempotency keys for POST/PATCH

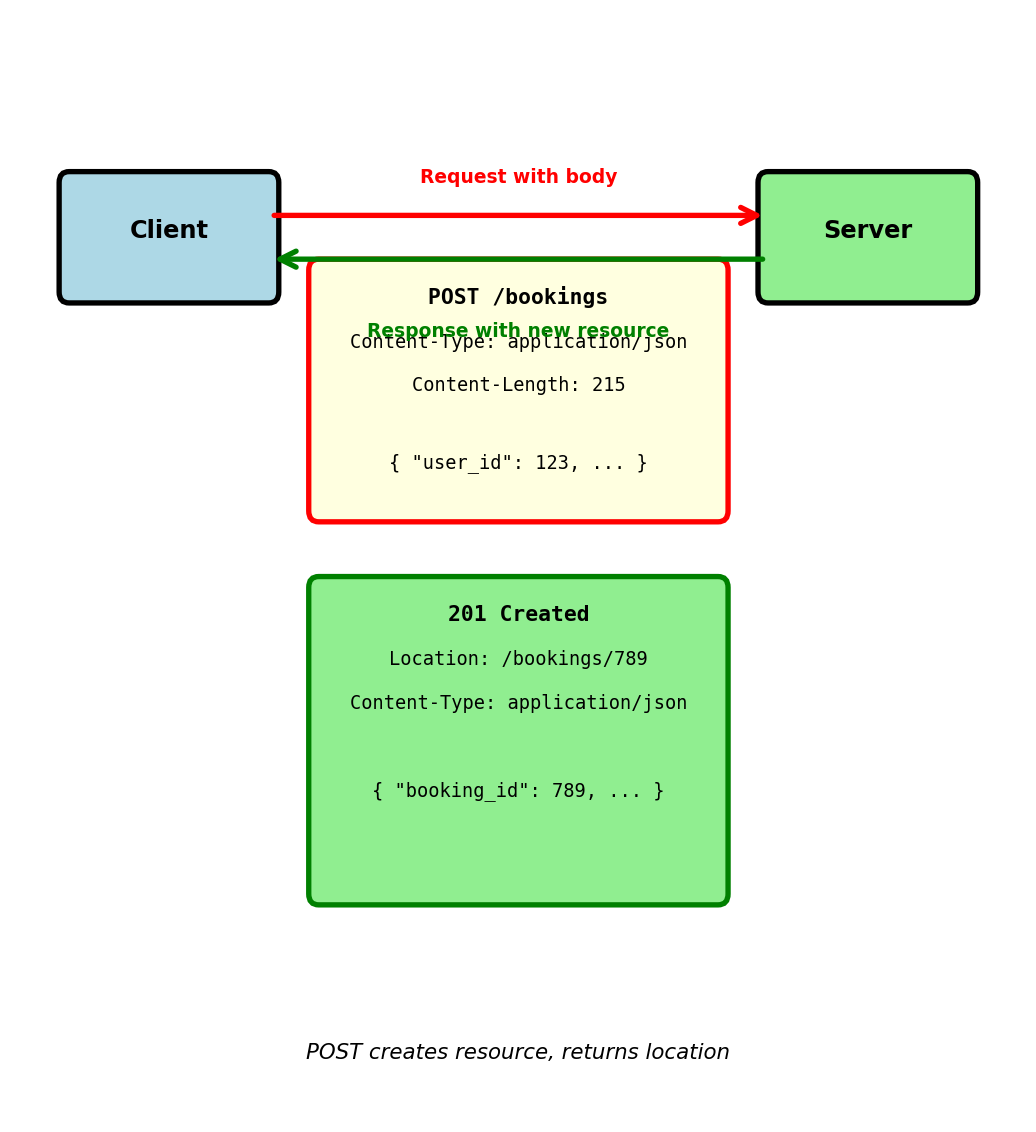

Request with Body - POST Example

Creating new booking via POST:

POST /bookings HTTP/1.1

Host: booking-service.airline.com

Content-Type: application/json

Content-Length: 215

Authorization: Bearer eyJhbGc...

Additional headers for body:

- Content-Type: Specifies body format (JSON, XML, form data)

- Content-Length: Exact size in bytes (HTTP/1.1 needs either this or chunked transfer encoding to delimit the body)

Server response:

HTTP/1.1 201 Created

Location: /bookings/789

Content-Type: application/json

Content-Length: 87

201 Created status indicates:

- New resource successfully created

- Location header provides URL to access new resource

- Response body contains resource details

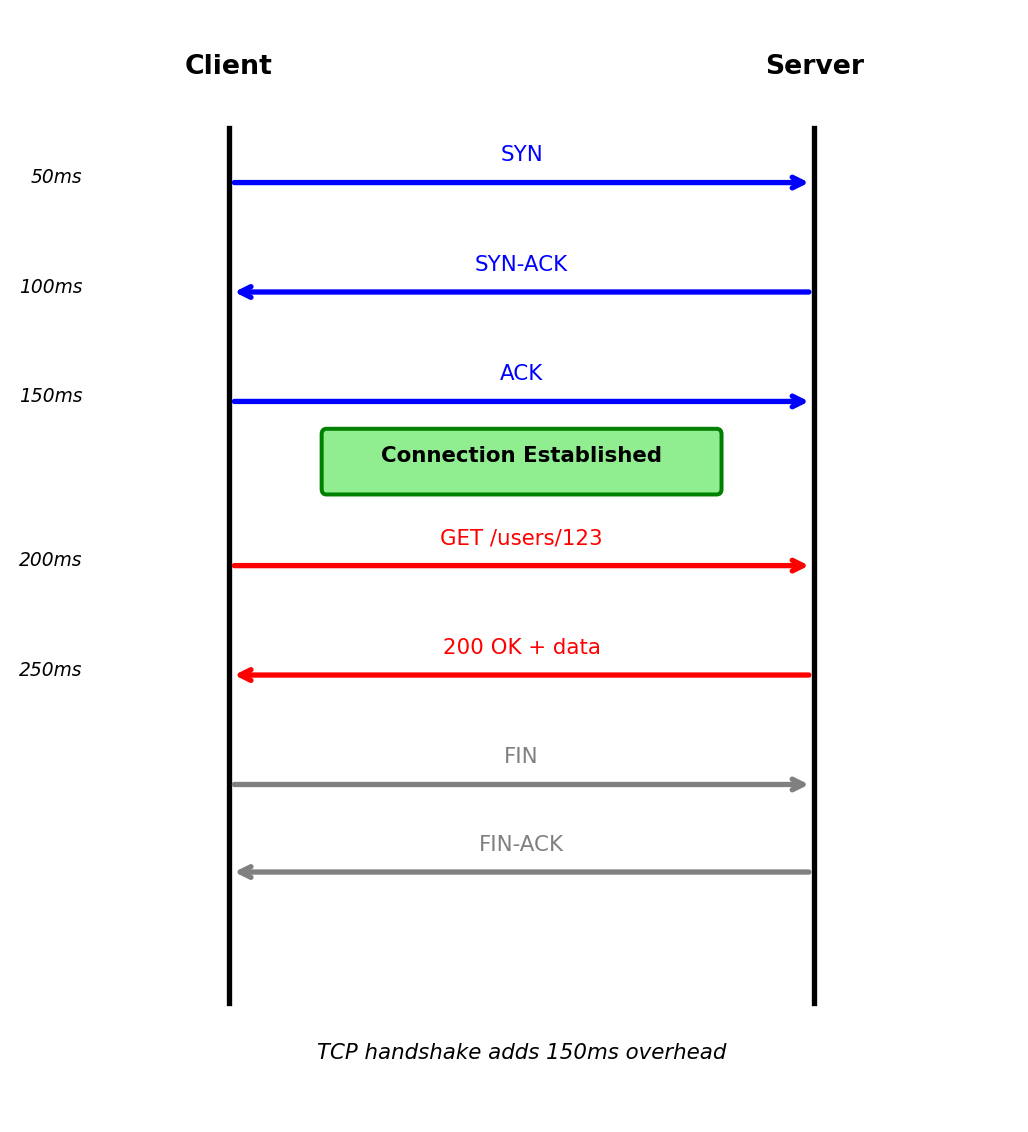

Connection Lifecycle - TCP Under HTTP

HTTP runs over TCP connection:

1. TCP handshake (connection establishment):

Client Server

| |

|--- SYN -------->| (50ms)

|<-- SYN-ACK -----| (50ms)

|--- ACK -------->| (50ms)

| |

[TCP established]

- 3-way handshake establishes connection

- Total latency: 150ms (three one-way trips at 50ms each)

- Required before any HTTP data sent

2. HTTP request/response over established connection:

| |

|- GET /users/123->| (50ms)

|<- 200 OK + data -| (50ms)

| |

- Request sent over TCP connection

- Response returned on same connection

- Total: 100ms for request/response

3. Connection close:

| |

|---- FIN --------->|

|<--- FIN-ACK ------|

| |

[Connection closed]

Total measured latency for single request:

- TCP handshake: 150ms

- HTTP request/response: 100ms

- Total: 250ms

Round-trip latency varies by distance:

- Same datacenter: 1-2ms per round trip

- Cross-coast US: 60-80ms per round trip

- Transpacific: 150-200ms per round trip

3-way handshake before HTTP request

Connection Reuse - HTTP Keep-Alive

TCP connections are expensive to establish — each requires a 3-way handshake before any data transfers

Without keep-alive (HTTP/1.0 default):

Request 1:

TCP handshake: 150ms

HTTP request/response: 100ms

Close connection

Total: 250ms

Request 2:

TCP handshake: 150ms (again!)

HTTP request/response: 100ms

Close connection

Total: 250ms

Request 3:

TCP handshake: 150ms (again!)

HTTP request/response: 100ms

Close connection

Total: 250ms

Total for 3 requests: 750ms

With keep-alive (HTTP/1.1 default):

Request 1:

TCP handshake: 150ms

HTTP request/response: 100ms

Keep connection open

Total: 250ms

Request 2:

HTTP request/response: 100ms

(reuse connection)

Total: 100ms

Request 3:

HTTP request/response: 100ms

(reuse connection)

Total: 100ms

Total for 3 requests: 450ms

40% latency reduction by reusing connection

Keep-alive headers:

Request includes: Connection: keep-alive

Response includes: Connection: keep-alive and Keep-Alive: timeout=5, max=1000

Keep-alive parameters:

- timeout=5: Server keeps an idle connection open for 5 seconds

- max=1000: Maximum 1000 requests on this connection

Connection pooling in practice:
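Connection reuse can be observed with the standard library alone. The sketch below starts a throwaway local HTTP/1.1 server, sends three requests over one client connection, and records the client's TCP source port for each. The handler, the port selection, and the path are demo assumptions, not part of the airline system.

```python
import http.client
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

seen_client_ports = []  # One entry per request: the client's TCP source port


class Handler(BaseHTTPRequestHandler):
    protocol_version = "HTTP/1.1"  # Enables persistent connections

    def do_GET(self):
        seen_client_ports.append(self.client_address[1])
        body = b'{"ok": true}'
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        pass  # Keep the demo quiet


server = ThreadingHTTPServer(("127.0.0.1", 0), Handler)  # Port 0: pick a free port
threading.Thread(target=server.serve_forever, daemon=True).start()

# One HTTPConnection object holds one TCP connection, reused for every request.
conn = http.client.HTTPConnection("127.0.0.1", server.server_port)
statuses = []
for _ in range(3):
    conn.request("GET", "/users/123")
    response = conn.getresponse()
    response.read()  # Drain the body before reusing the connection
    statuses.append(response.status)
conn.close()
server.shutdown()
server.server_close()
```

All three requests arrive from the same source port, which shows that only one TCP handshake took place. A pool applies the same idea across several connections at once.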

Estimated impact (100 sequential requests, cross-coast):

- Without keep-alive: 25 seconds (250ms × 100)

- With keep-alive (10 connections in pool): 3.5 seconds

- 7× improvement

Connection reuse critical for performance

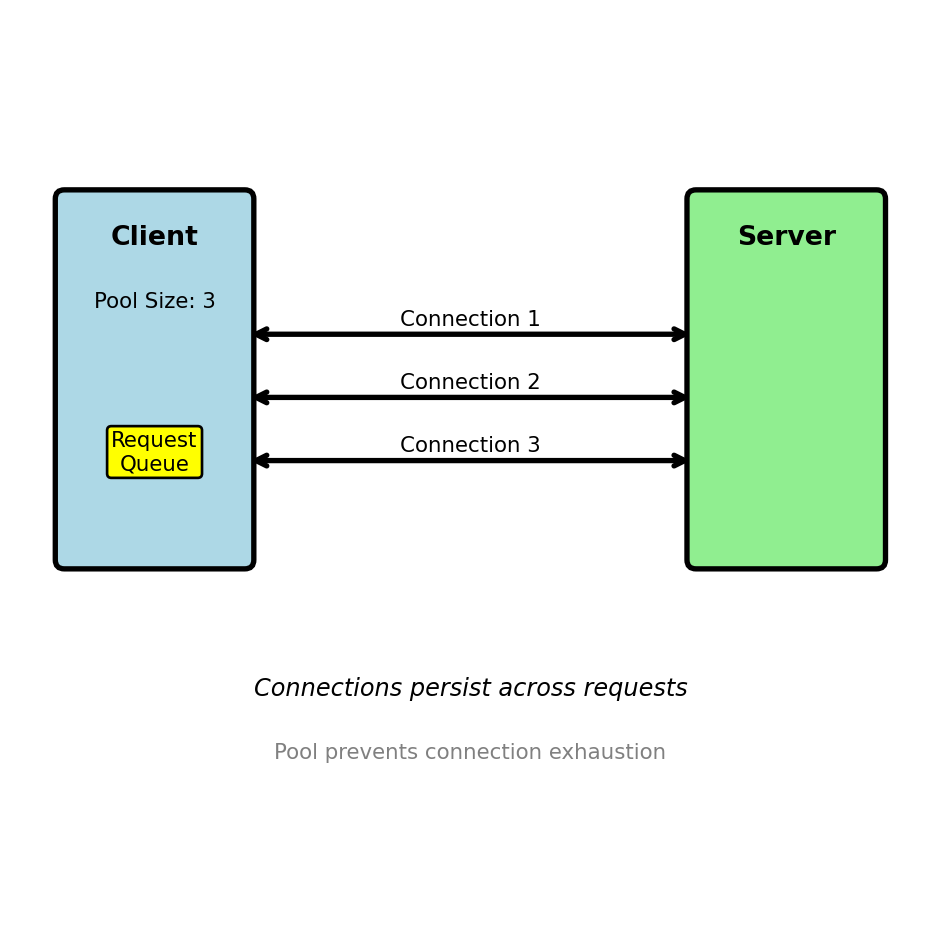

Connection Pooling: Managing Concurrent Requests

A single connection serializes requests — concurrent clients need concurrent connections

Single connection serves requests sequentially:

Connection 1: [Req1]->[Resp1]->[Req2]->[Resp2]->[Req3]->[Resp3]

Connection pool serves requests in parallel:

Connection 1: [Req1]->[Resp1] [Req4]->[Resp4]

Connection 2: [Req2]->[Resp2] [Req5]->[Resp5]

Connection 3: [Req3]->[Resp3]

Connection pool implementation:

    from urllib3 import PoolManager

    # Create pool with size limits
    pool = PoolManager(
        num_pools=10,   # Max 10 different hosts
        maxsize=20,     # Max 20 connections per host
        block=True      # Wait if pool exhausted
    )

    # Connections managed automatically
    response = pool.request('GET', 'http://api/users/123')
    # Connection returned to pool after response read

Pool sizing considerations:

- Too small: Requests wait for available connection

- Too large: Memory overhead, server connection limits

- Typical: 10-50 connections per host

Pool exhaustion behavior:
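What a caller experiences when every connection is busy can be sketched with a queue-backed pool. SimplePool and PoolExhausted are hypothetical names, and the strings stand in for real sockets. The blocking behavior corresponds to the "wait if pool exhausted" setting in the example above.

```python
import queue


class PoolExhausted(RuntimeError):
    """Raised when no connection frees up within the timeout."""


class SimplePool:
    """Fixed-size pool of placeholder connection objects (illustrative sketch)."""

    def __init__(self, size: int) -> None:
        self._free = queue.Queue(maxsize=size)
        for n in range(size):
            self._free.put(f"conn-{n}")  # Stand-ins for real sockets

    def acquire(self, timeout: float = 1.0) -> str:
        try:
            # Blocks while the pool is empty, up to `timeout` seconds.
            return self._free.get(timeout=timeout)
        except queue.Empty:
            raise PoolExhausted(f"no connection available after {timeout}s") from None

    def release(self, conn: str) -> None:
        self._free.put(conn)
```

With a timeout, an exhausted pool shows up as a bounded wait followed by an explicit error, so requests do not hang indefinitely.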

Real scenarios requiring pools:

- Web server → Database (10-20 connections)

- API Gateway → Backend services (50-100 per service)

- Microservice → Other microservices (10-30 per service)

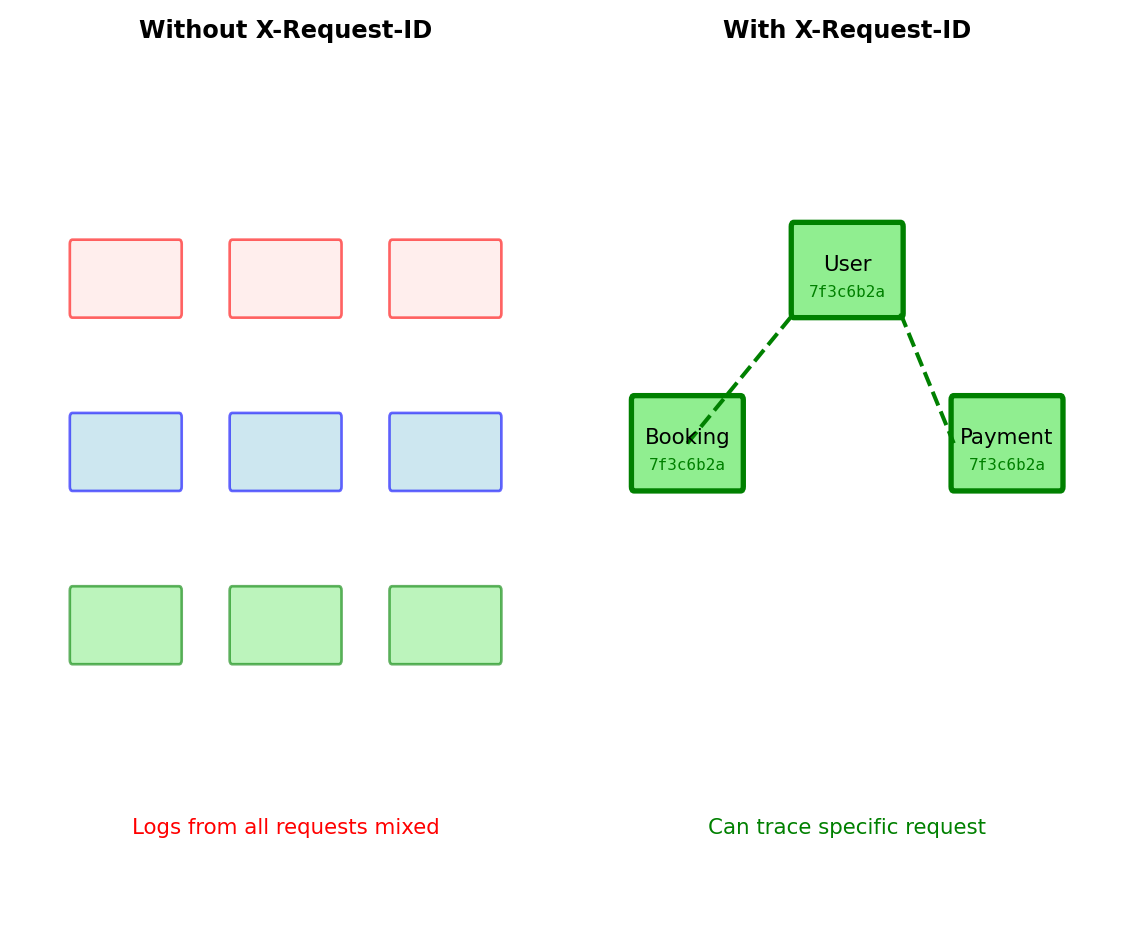

Headers Control Service Behavior Beyond Content

HTTP headers determine how services process requests

Four critical functions in distributed systems:

1. Authentication/Authorization

Authorization: Bearer eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...

Service validates identity and permissions before processing

2. Content Negotiation

Content-Type: application/json; charset=utf-8

Accept: application/json

Ensures correct parsing and response format

3. Request Correlation

X-Request-ID: 7f3c6b2a-5d9e-4f8b-a1c3-9e8d7c6b5a4f

Traces requests across multiple services for debugging

4. Service Metadata

User-Agent: booking-service/2.1.0

X-API-Version: 2

Enables version-specific handling and deprecation

What happens without proper headers:

Missing Authorization → 401 Unauthorized

Wrong Content-Type → Data corruption

No X-Request-ID → Can’t trace failures

Invalid Accept → Client can’t parse response

Headers every request needs:

- Authorization - Identity and permissions

- Content-Type - How to parse body

- Accept - What format you want back

Headers for debugging:

- X-Request-ID - Correlation across services

- User-Agent - Which client sent this

Headers in responses:

- Status code - Did it work?

- X-RateLimit-Remaining - Quota status

- Cache-Control - Can this be cached?

Headers are contracts between services

Content Negotiation: Preventing Silent Corruption

Content-Type tells server how to parse request body

POST /models/123/predict

Content-Type: application/json; charset=utf-8

Accept: application/json

{"features": [1.2, 3.4, 5.6], "threshold": 0.8}

Server uses Content-Type to route parsing:

    from flask import Flask, jsonify, request

    app = Flask(__name__)

    @app.route('/models/<id>/predict', methods=['POST'])
    def predict(id):
        content_type = request.headers.get('Content-Type', '')

        if 'application/json' in content_type:
            data = request.get_json()   # JSON parser
        elif 'application/x-www-form-urlencoded' in content_type:
            data = request.form         # Form parser
        elif 'multipart/form-data' in content_type:
            data = request.files        # File parser
        else:
            return {'error': 'Unsupported Content-Type'}, 415

        # Check Accept header for response format
        accept = request.headers.get('Accept', 'application/json')
        if 'application/json' not in accept:
            return {'error': 'Cannot produce requested format'}, 406

        result = model.predict(data)
        return jsonify(result), 200

How wrong Content-Type corrupts data:
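A small standard-library sketch shows one way this happens: the same bytes are parsed once as JSON and once as if the client had mislabeled them as form data. The variable names are illustrative, and urllib.parse.parse_qs stands in for a framework's form parser.

```python
import json
from urllib.parse import parse_qs

# The JSON body from the request above, as raw bytes on the wire.
raw_body = b'{"features": [1.2, 3.4, 5.6], "threshold": 0.8}'

# Parsed as declared (application/json): structure and types survive.
as_json = json.loads(raw_body)

# Parsed as if labeled application/x-www-form-urlencoded:
# the form parser finds no key=value pairs and quietly returns nothing.
as_form = parse_qs(raw_body.decode("utf-8"))
```

No exception is raised in the second case. The handler receives an empty mapping, which is why the corruption is silent.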

Content-Type controls parsing:

application/json → JSON parser

application/x-www-form-urlencoded → Form parser

multipart/form-data → File upload parser

application/octet-stream → Raw bytes

Why explicit headers matter:

- Wrong Content-Type silently corrupts data

- Missing Accept causes client parse failures

- No charset breaks Unicode characters

Every request should include:

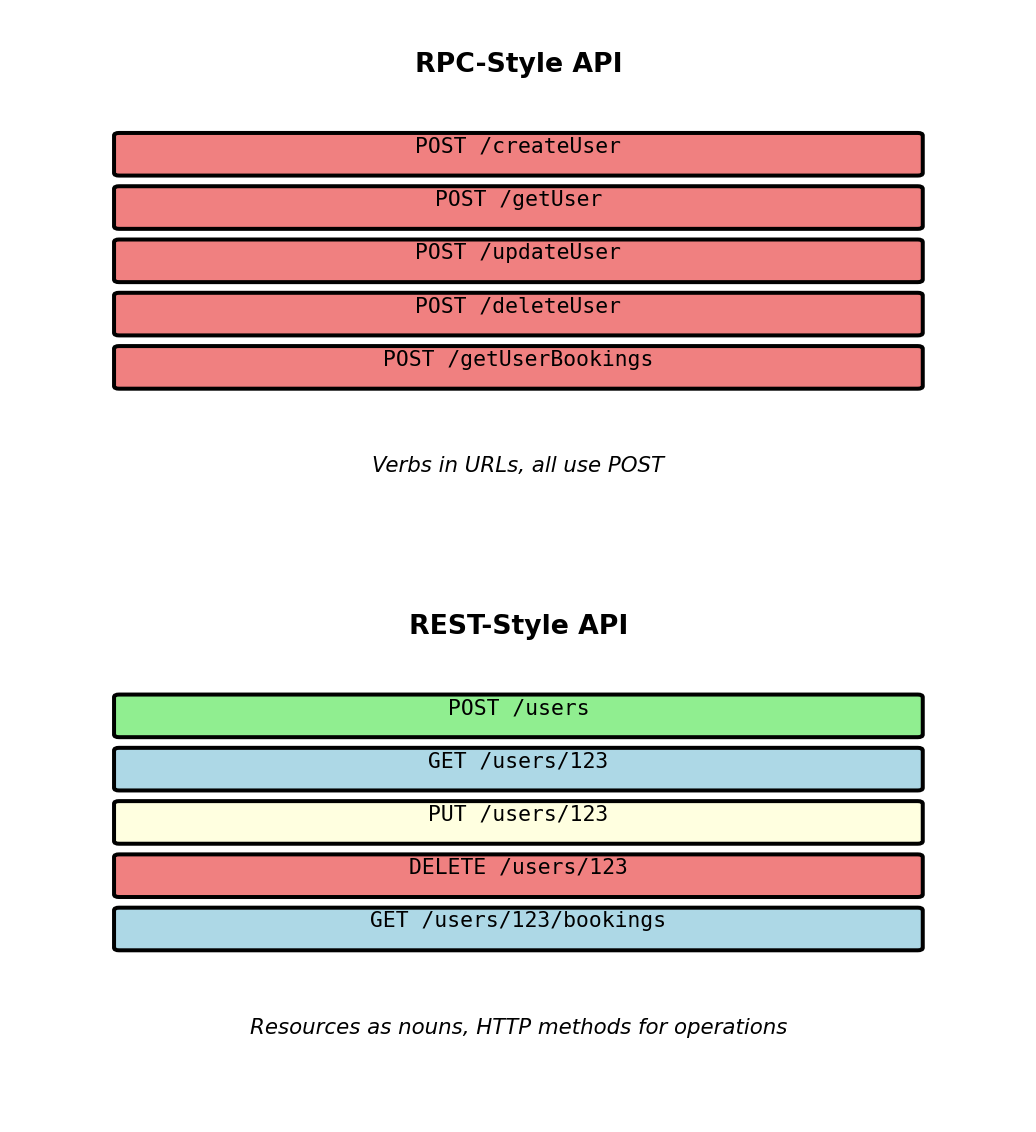

REST Models APIs as Resources

REST - Architectural Style for APIs

REST: Representational State Transfer

Architectural style, not a protocol or standard

Coined by Roy Fielding (2000 dissertation) based on HTTP design principles

Core idea: Resources identified by URLs, manipulated via standard HTTP methods

What REST is NOT:

- Not a specification with compliance tests

- Not a protocol like HTTP or SOAP

- Not limited to JSON (can use XML, HTML, etc)

- Not the only way to design APIs

What REST provides:

- Set of design principles for building APIs

- Conventions for mapping operations to HTTP methods

- Guidelines for URL structure

- Constraints that enable scalability and simplicity

REST vs other approaches:

- RPC-style: /createUser, /getUser, /deleteUser (verbs in URLs)

- REST-style: POST /users, GET /users/123, DELETE /users/123 (resources + methods)

REST treats everything as a resource accessible via URL

Resource-Oriented Design - Nouns Not Verbs

REST principle: URLs identify resources (things), methods specify operations

Resource hierarchy in airline API:

User resources:

- /users - Collection of all users

- /users/123 - Specific user

- /users/123/bookings - User’s bookings (sub-collection)

- /users/123/bookings/789 - Specific booking

Flight resources:

- /flights - Collection of all flights

- /flights/456 - Specific flight

- /flights/456/seats - Available seats

Airport resources:

- /airports - Collection of airports

- /airports/LAX - Specific airport

- /airports/LAX/flights - Flights from LAX

URL structure conventions:

- Use plural nouns: /users not /user

- Use hyphens for readability: /frequent-flyers not /frequentFlyers

- Nest resources to show relationships: /users/123/bookings

- Keep hierarchy shallow (2-3 levels maximum)

Operations via HTTP methods:

- GET /users - Get all users

- GET /users/123 - Get specific user

- POST /users - Create new user (body: email, password)

- PUT /users/123 - Update user (body: complete resource)

- DELETE /users/123 - Delete user

Nested resources show relationships:

GET /users/123/bookings returns array of user’s bookings:

GET /users/123/bookings/789 returns specific booking via user path:

GET /bookings/789 returns same booking via direct path:

Design choice: Provide both paths when resource makes sense independently

- /users/123/bookings - User-centric view (all bookings for user)

- /bookings/789 - Booking-centric view (single booking)

Different access patterns for different use cases

GET and DELETE - Read and Remove

GET retrieves resource without modification

Request targets specific resource by ID:

GET /users/456 HTTP/1.1

Server returns resource representation:

HTTP/1.1 200 OK

Content-Type: application/json

GET characteristics:

- No request body (parameters in URL or query string)

- 200 OK when resource exists

- 404 Not Found when resource doesn’t exist

- Idempotent: Repeated calls return same data

- Safe: No side effects on server state

GET on collections returns multiple resources:

GET /users HTTP/1.1

HTTP/1.1 200 OK

DELETE removes resource

Request targets specific resource:

DELETE /users/456 HTTP/1.1

Server removes resource, returns minimal response:

HTTP/1.1 204 No Content

DELETE characteristics:

- No request body

- 204 No Content on success (nothing to return)

- 404 Not Found if resource already deleted

- Idempotent: Multiple deletes produce same final state

Subsequent DELETE returns 404:

First delete:

DELETE /users/456 → 204 No Content (deleted)

Second delete:

DELETE /users/456 → 404 Not Found (already gone)

Final state identical: User 456 doesn’t exist

Both methods are idempotent:

- GET: Same data returned each time

- DELETE: Same final state (resource absent)

Idempotency enables safe retries on network failures

POST and PUT - Create and Replace

POST creates new resource

Request sent to collection URL:

POST /users HTTP/1.1

Content-Type: application/json

Server assigns ID and creates resource:

HTTP/1.1 201 Created

Location: /users/456

Content-Type: application/json

POST characteristics:

- POSTs to collection (/users not /users/456)

- Server assigns resource ID

- 201 Created status indicates success

- Location header contains new resource URL

- Not idempotent: Repeated POSTs create multiple resources

Why not idempotent:

POST /users {"email": "test@example.com"} → 201 Created, user_id=456

POST /users {"email": "test@example.com"} → 201 Created, user_id=789 (different resource!)

PUT replaces entire resource

Request sent to specific resource URL:

PUT /users/456 HTTP/1.1

Content-Type: application/json

Server replaces resource completely:

HTTP/1.1 200 OK

PUT characteristics:

- PUTs to specific resource (/users/456)

- Client specifies resource ID

- Request body contains complete resource

- 200 OK with updated resource

- Idempotent: Multiple identical PUTs result in same state

PUT replaces entirely:

Missing fields in request are removed:

PUT /users/456 {"email": "new@example.com"}

Result: name field removed (entire resource replaced, not email alone)

Use PATCH for partial updates instead

Query Parameters - Filtering Collections

Query parameters modify which resources are returned

Example: GET /flights?departure_airport=LAX

Path /flights identifies collection, departure_airport=LAX filters results

Query parameter syntax:

- Appended after ? in URL

- Key-value pairs: key=value

- Multiple parameters joined with &

- URL-encoded: Space → %20, special characters escaped

Filtering examples:

Single filter: GET /flights?departure_airport=LAX → Returns only flights departing from LAX

Multiple filters: GET /flights?departure_airport=LAX&arrival_airport=JFK&date=2025-02-15 → Returns LAX→JFK flights on specific date

Three-way filter: GET /flights?departure_airport=LAX&status=scheduled&aircraft_type=737 → Returns scheduled 737 flights from LAX

All filters are AND conditions - flight must match all criteria

Parameter validation returns 400 Bad Request:

Invalid value:

GET /flights?date=Feb-15-2025

HTTP/1.1 400 Bad Request

Server validates parameters before database query
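A sketch of that validation step follows, assuming ISO dates (YYYY-MM-DD) and three-letter uppercase airport codes. The function name validate_flight_query and the shape of the error body are illustrative, not part of the airline API.

```python
from datetime import date


def validate_flight_query(params: dict) -> tuple:
    """Return (status, payload): 400 with an error body, or 200 with parsed filters."""
    filters = {}
    if "date" in params:
        try:
            filters["date"] = date.fromisoformat(params["date"])  # Expects YYYY-MM-DD
        except ValueError:
            return 400, {"error": "Invalid date format, expected YYYY-MM-DD",
                         "parameter": "date"}
    if "departure_airport" in params:
        code = params["departure_airport"]
        # Assumes three-letter uppercase airport codes such as LAX.
        if not (len(code) == 3 and code.isalpha() and code.isupper()):
            return 400, {"error": "Invalid airport code",
                         "parameter": "departure_airport"}
        filters["departure_airport"] = code
    return 200, filters
```

Naming the offending parameter in the error body lets the client fix the request without guessing.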

Sorting with parameters:

- GET /flights?sort=departure_time - Ascending order (default)

- GET /flights?sort=-departure_time - Descending order (minus prefix)

- GET /flights?sort=departure_airport,departure_time - Multiple fields (comma-separated)

Last example sorts by airport code in ascending order (JFK flights before LAX), then by time within each airport

Combining filters and sorting:

GET /flights?departure_airport=LAX&status=scheduled&sort=-departure_time

Returns scheduled LAX flights, latest departure first

Query parameters keep URL structure clean while enabling flexible filtering

Pagination - Handling Large Collections

Collections grow beyond what a single response can efficiently carry

Without pagination: GET /flights → Returns 2,500 flights, 4MB response, 8 second load time

With pagination: GET /flights?limit=50&offset=0 → Returns 50 flights, 80KB response, 150ms load time

Offset-based pagination:

limit controls page size, offset controls starting position

- First page (flights 0-49): GET /flights?limit=50&offset=0

- Second page (flights 50-99): GET /flights?limit=50&offset=50

- Third page (flights 100-149): GET /flights?limit=50&offset=100

Formula: offset = page_number × limit, with page_number counted from 0

Pagination metadata in response:
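One possible response shape is sketched below. The metadata field names (total, limit, offset, next_offset) and the function name paginate are illustrative assumptions, not a standard. The list slice stands in for a database query.

```python
def paginate(items: list, limit: int = 50, offset: int = 0) -> dict:
    """Slice a collection and attach pagination metadata."""
    if limit < 1 or offset < 0:
        raise ValueError("limit must be >= 1 and offset must be >= 0")
    page = items[offset:offset + limit]
    next_offset = offset + limit if offset + limit < len(items) else None
    return {
        "data": page,
        "pagination": {
            "total": len(items),
            "limit": limit,
            "offset": offset,
            "next_offset": next_offset,  # None on the last page
        },
    }
```

Returning next_offset saves the client from recomputing it and gives a clear end-of-collection signal.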

Pagination with filters:

GET /flights?departure_airport=LAX&limit=50&offset=0 → First 50 LAX flights

GET /flights?departure_airport=LAX&limit=50&offset=50 → Next 50 LAX flights

Filters applied before pagination

Measured performance (2,500 flight collection):

- Full response: 8s, 4MB

- Paginated (50/page): 150ms, 80KB per page

- 53× faster initial load

Alternative strategies:

- Cursor-based: Position encoded in opaque token, stable under concurrent modifications, constant-time lookups regardless of page depth

- Page-based: ?page=2&per_page=50, with the server calculating the offset internally

Offset is simple but degrades at large offsets and produces inconsistent results when data changes between page fetches. Cursor-based pagination addresses both — covered in detail in API Specification.

Idempotency - Safe Retry Behavior

Idempotent operation: Multiple identical requests have same effect as single request

GET - Idempotent and safe:

    # Call once
    response1 = requests.get('http://api/users/123')
    user1 = response1.json()  # {"user_id": 123, "email": "alice@..."}

    # Call again
    response2 = requests.get('http://api/users/123')
    user2 = response2.json()  # {"user_id": 123, "email": "alice@..."}

    # Same result, no side effects
    assert user1 == user2

PUT - Idempotent but not safe:

# Call once

requests.put('http://api/users/123',

json={"email": "alice.new@example.com", "is_active": True})

# Result: email changed to alice.new@example.com

# Call again with same data

requests.put('http://api/users/123',

json={"email": "alice.new@example.com", "is_active": True})

# Result: email still alice.new@example.com (no additional change)

# Multiple calls → same final state

DELETE - Idempotent:
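A small sketch of why repeated DELETE calls are idempotent, assuming an in-memory dict in place of a database. Returning 404 on the repeat call is one common choice; the status code may differ between calls while the final server state stays the same.

```python
users = {123: {"email": "alice@example.com"}}

def delete_user(store, user_id):
    """Return the status code a DELETE handler might send."""
    if user_id in store:
        del store[user_id]
        return 204  # No Content: the resource was removed
    return 404      # Not Found: already gone, state is unchanged

first = delete_user(users, 123)   # removes the user
second = delete_user(users, 123)  # nothing left to remove
```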

POST - Not idempotent:

# Call once

response1 = requests.post('http://api/users',

json={"email": "bob@example.com"})

# Response: 201 Created, user_id=456

# Call again with same data

response2 = requests.post('http://api/users',

json={"email": "bob@example.com"})

# Response: 201 Created, user_id=789 (different user!)

# Two users created - NOT idempotent

Idempotency matters for retries:

Network timeout scenario:

Idempotency key pattern:

Server implementation:
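One way the server side of an idempotency key pattern might look, as a sketch rather than a definitive implementation. The `BookingService` class and its in-memory dictionaries are hypothetical; clients often send the key in a request header (for example one named `Idempotency-Key`), and a real service would keep keys in a shared store with an expiry.

```python
import uuid

class BookingService:
    """Hypothetical service that replays the stored response for a repeated key."""

    def __init__(self):
        self.bookings = {}
        self.responses_by_key = {}

    def create_booking(self, idempotency_key, payload):
        # A key seen before means this is a retry: replay the original response
        if idempotency_key in self.responses_by_key:
            return self.responses_by_key[idempotency_key]
        booking_id = len(self.bookings) + 1
        self.bookings[booking_id] = dict(payload)
        response = (201, {"booking_id": booking_id, **payload})
        self.responses_by_key[idempotency_key] = response
        return response

service = BookingService()
key = str(uuid.uuid4())  # client generates one key per logical operation
booking = {"user_id": 123, "flight_id": 456}

first = service.create_booking(key, booking)
retry = service.create_booking(key, booking)  # e.g. resent after a network timeout
```

The retry returns the same booking instead of creating a second one, which makes POST safe to retry when the client cannot tell whether the first attempt succeeded.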

Statelessness - No Server-Side Session

REST constraint: Each request contains all information needed to process it

Stateful approach (violates REST):

# Login creates server-side session

POST /login

Body: {"email": "alice@example.com", "password": "..."}

Response:

HTTP/1.1 200 OK

Set-Cookie: session_id=abc123

# Server stores:

sessions['abc123'] = {

'user_id': 123,

'email': 'alice@example.com',

'logged_in_at': '2025-01-15T10:00:00Z'

}

# Subsequent requests reference session

GET /bookings

Cookie: session_id=abc123

# Server looks up sessions['abc123'] to get user_id

Problems with server-side sessions:

- Server must store session for every active user

- 10K active users = 10K session objects in memory

- Load balancer must route all requests from user to same server

- Server restart loses all sessions

- Horizontal scaling requires session replication

Stateless approach (REST-compliant):

Stateless request:

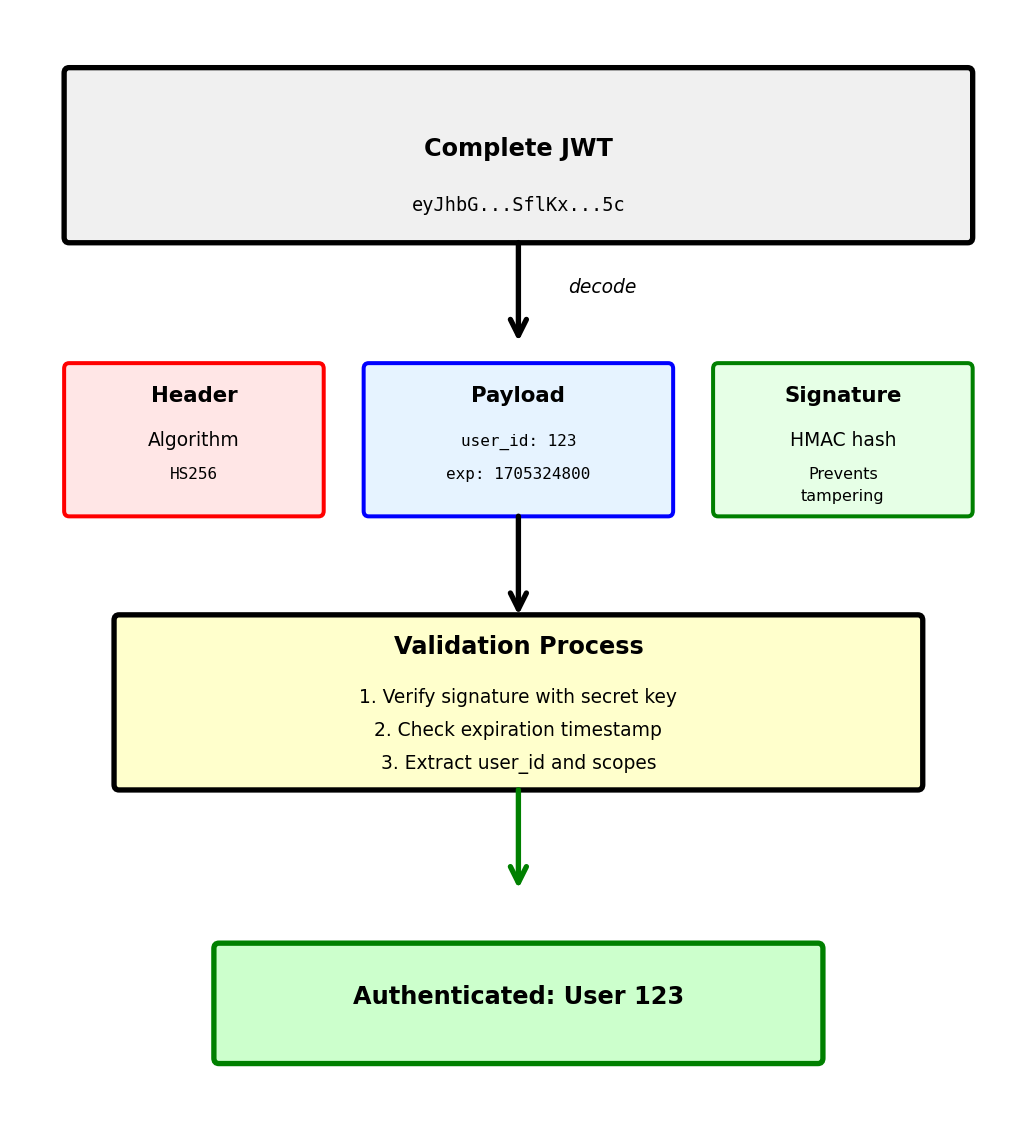

JWT (JSON Web Token) structure:

Header:

{

"alg": "HS256",

"typ": "JWT"

}

Payload:

{

"user_id": 123,

"email": "alice@example.com",

"exp": 1705324800, # Expiration timestamp

"iat": 1705321200 # Issued at timestamp

}

Signature:

HMACSHA256(

base64url(header) + "." + base64url(payload),

server_secret_key

)

Final token:

base64url(header).base64url(payload).base64url(signature)

Benefits of stateless design:

- Server doesn’t store session data (no memory overhead)

- Any server can handle any request (no sticky sessions)

- Horizontal scaling trivial (add servers)

- Server restart doesn’t invalidate tokens

- Token validation: local signature check (CPU only) vs. session lookup (memory or network call)

Token expiration:

- Short-lived: 15 minutes (security)

- Refresh token: 30 days (obtain new access token)

- Client responsibility to manage token lifecycle

Statelessness: any server can handle any request — horizontal scaling without shared state
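The header/payload/signature structure described above can be sketched with the standard library alone. This is an illustration of HS256 signing and verification, not a replacement for a maintained JWT library; the function names and the demo secret are assumptions.

```python
import base64
import hashlib
import hmac
import json
import time

def b64url(data: bytes) -> str:
    # JWT uses URL-safe base64 without padding
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")

def b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))

def sign_token(payload: dict, secret: bytes) -> str:
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = (
        b64url(json.dumps(header).encode()) + "." + b64url(json.dumps(payload).encode())
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return signing_input + "." + b64url(signature)

def verify_token(token: str, secret: bytes, now=None):
    """Return the payload if signature and expiry check out, else None."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
        expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
        if not hmac.compare_digest(expected, b64url_decode(signature_b64)):
            return None
        payload = json.loads(b64url_decode(payload_b64))
    except (ValueError, TypeError):
        return None
    if not isinstance(payload, dict):
        return None
    now = time.time() if now is None else now
    if payload.get("exp", 0) <= now:
        return None  # expired, or no expiry claim present
    return payload

SECRET = b"demo-secret"  # hypothetical key; real keys come from configuration
claims = {"user_id": 123, "email": "alice@example.com", "iat": 1000, "exp": 2000}
token = sign_token(claims, SECRET)
```

Verification needs only the token and the secret, so any server holding the secret can validate the request without a session lookup.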

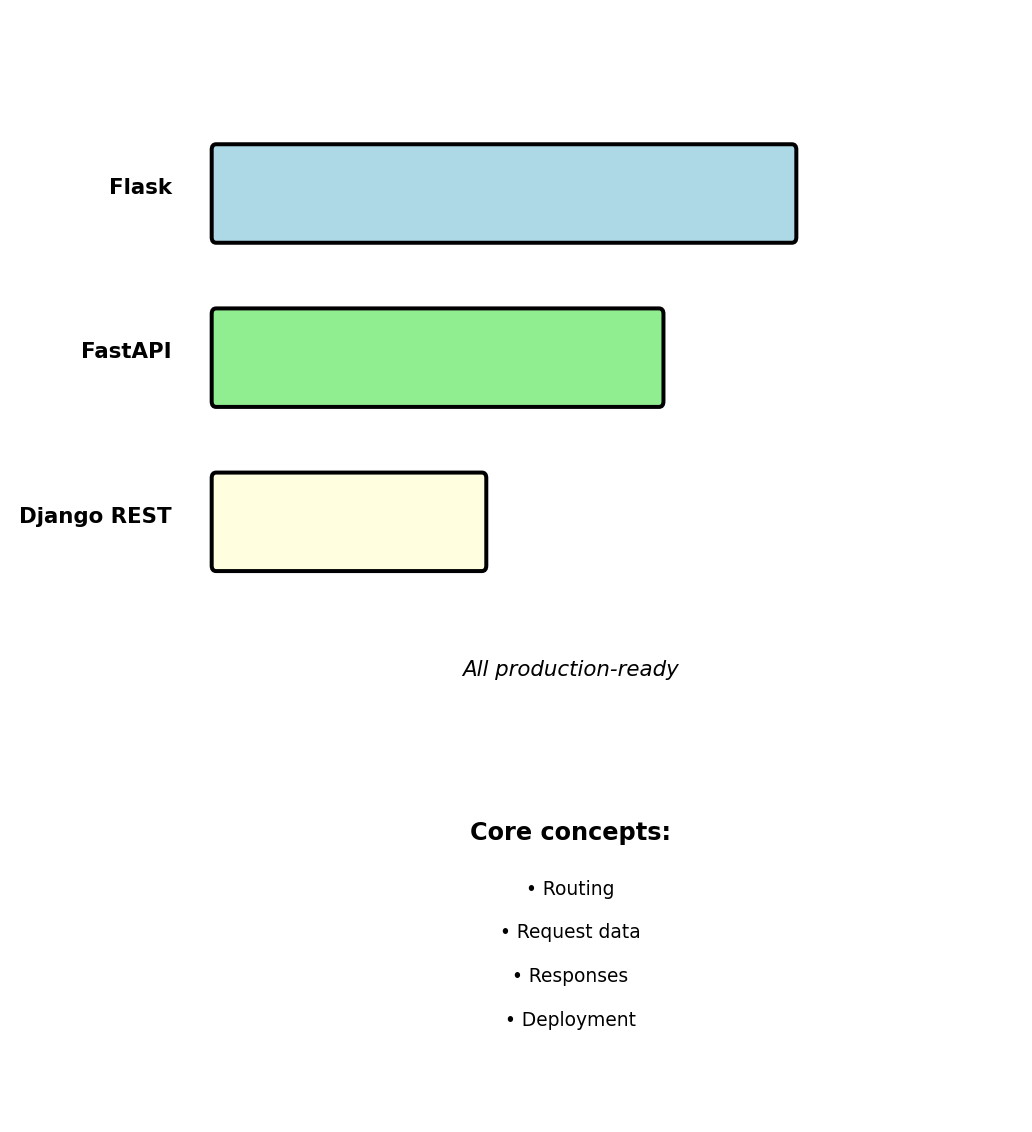

REST with Python (Flask)

Framework Choice: Flask

Python web frameworks for APIs:

- Flask: Minimal, explicit routing

- FastAPI: Modern, type-safe

- Django REST Framework: Full-featured

EE 547 uses Flask

Minimal abstractions make core concepts visible. Patterns transfer to FastAPI and Django REST.

Framework-agnostic concepts covered:

- Request/response flow

- URL routing and parameters

- Request data extraction

- Production deployment

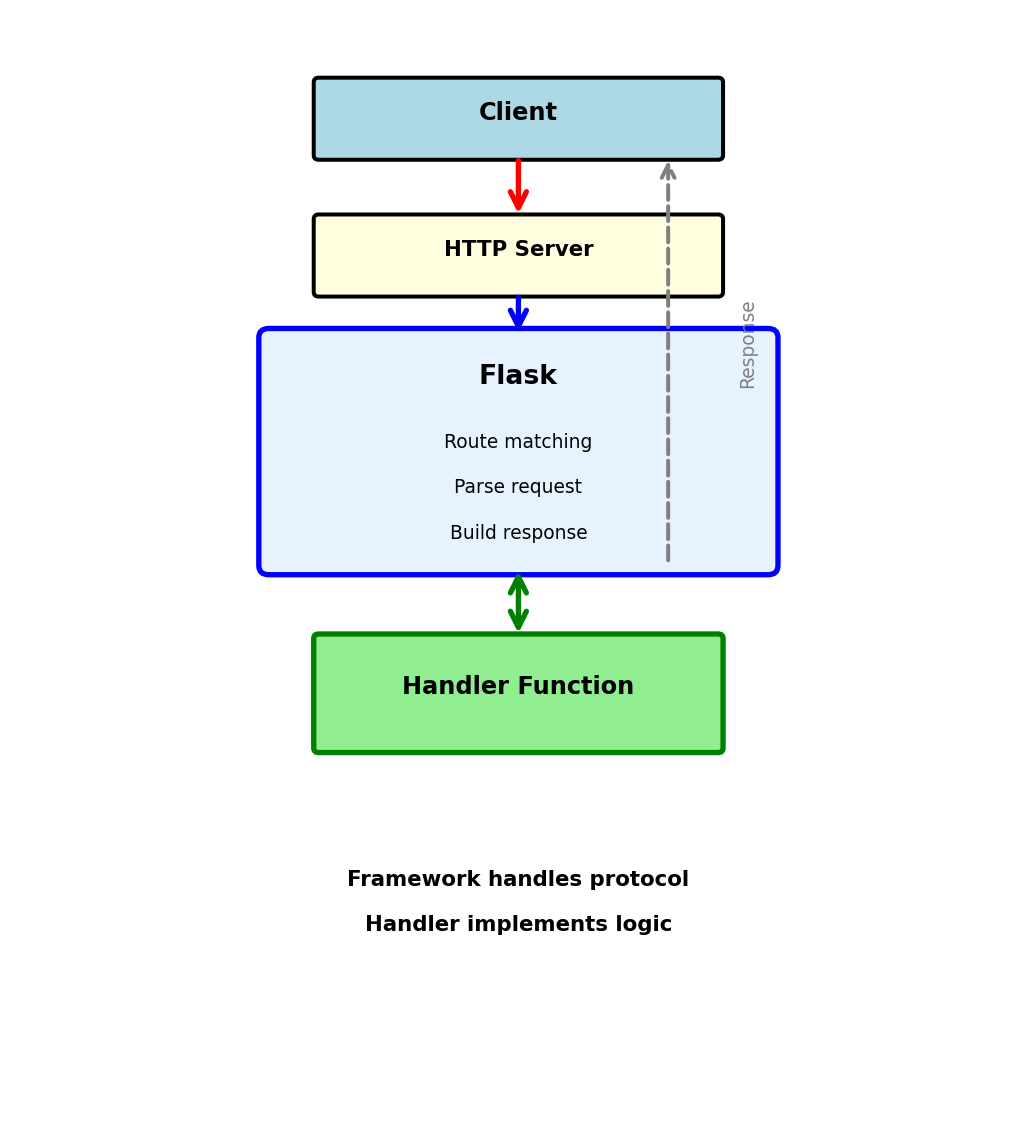

Web Framework Architecture

Framework sits between HTTP server and handler code

Client

↓ HTTP Request

HTTP Server (gunicorn)

↓ WSGI

Flask Framework

↓ Calls

Handler Function

↓ Returns

Flask Framework

↓ WSGI

HTTP Server

↓ HTTP Response

Client

What Flask does:

- Match URL to function

- Parse incoming data

- Call handler function

- Build HTTP response

Handler implementation:

- Functions that process requests

- Business logic

- Return data

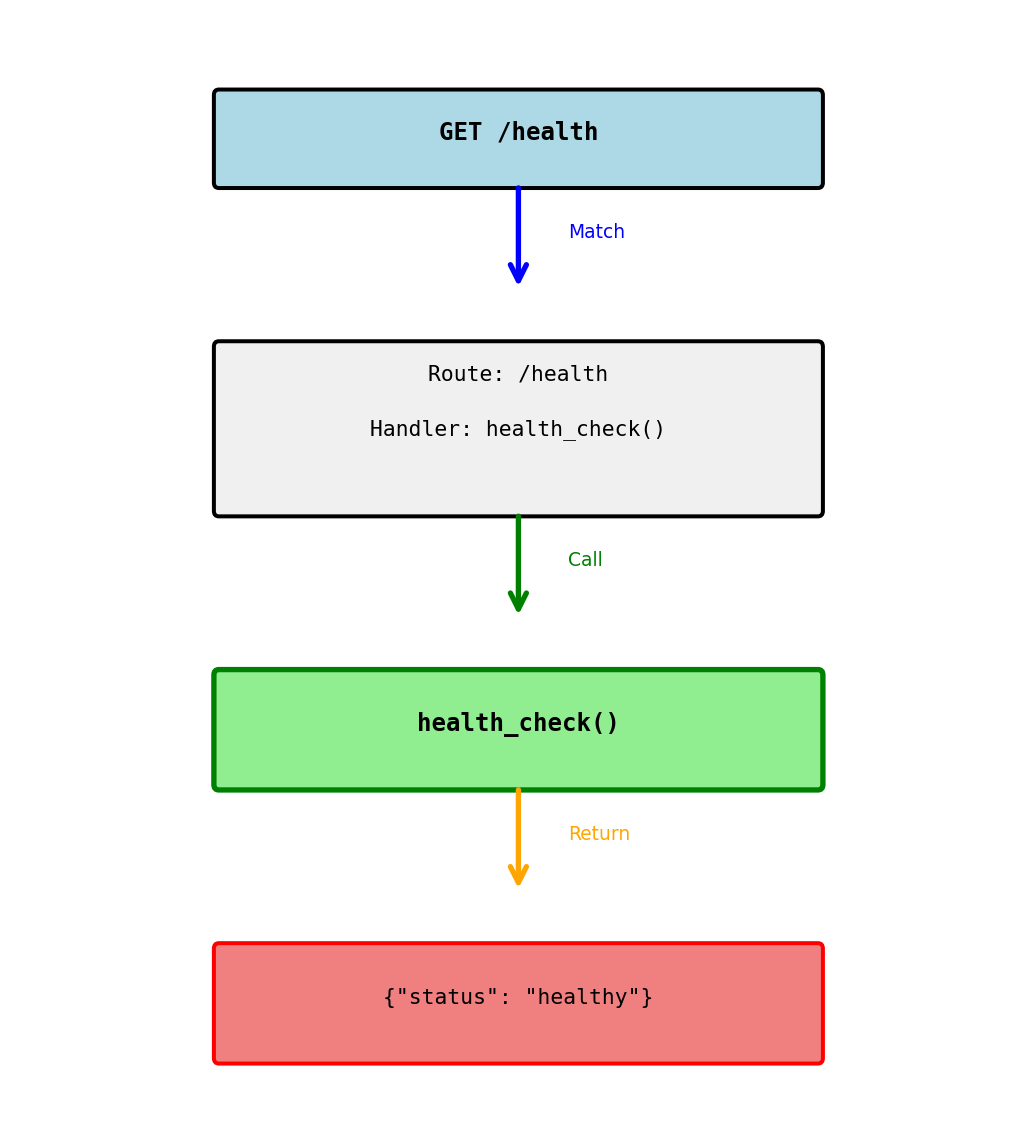

Simple Route Example

Connecting a URL to a function

What happens:

- @app.route('/health') registers the route

- Client sends: GET /health

- Flask sees /health matches registered route

- Flask calls health_check() function

- Function returns dict

- Flask converts to JSON response

Response:

HTTP/1.1 200 OK

Content-Type: application/json

{"status": "healthy"}

Flask automatically:

- Sets status code to 200

- Sets Content-Type header

- Converts dict to JSON
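A minimal sketch of the route this slide walks through, assuming Flask is installed. The handler name `health_check` follows the steps above; the test client call at the end exercises the route in-process without starting a server.

```python
from flask import Flask

app = Flask(__name__)

@app.route('/health')
def health_check():
    # Returning a dict lets Flask build the JSON response
    return {"status": "healthy"}

# Exercise the route with Flask's built-in test client
response = app.test_client().get('/health')
```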

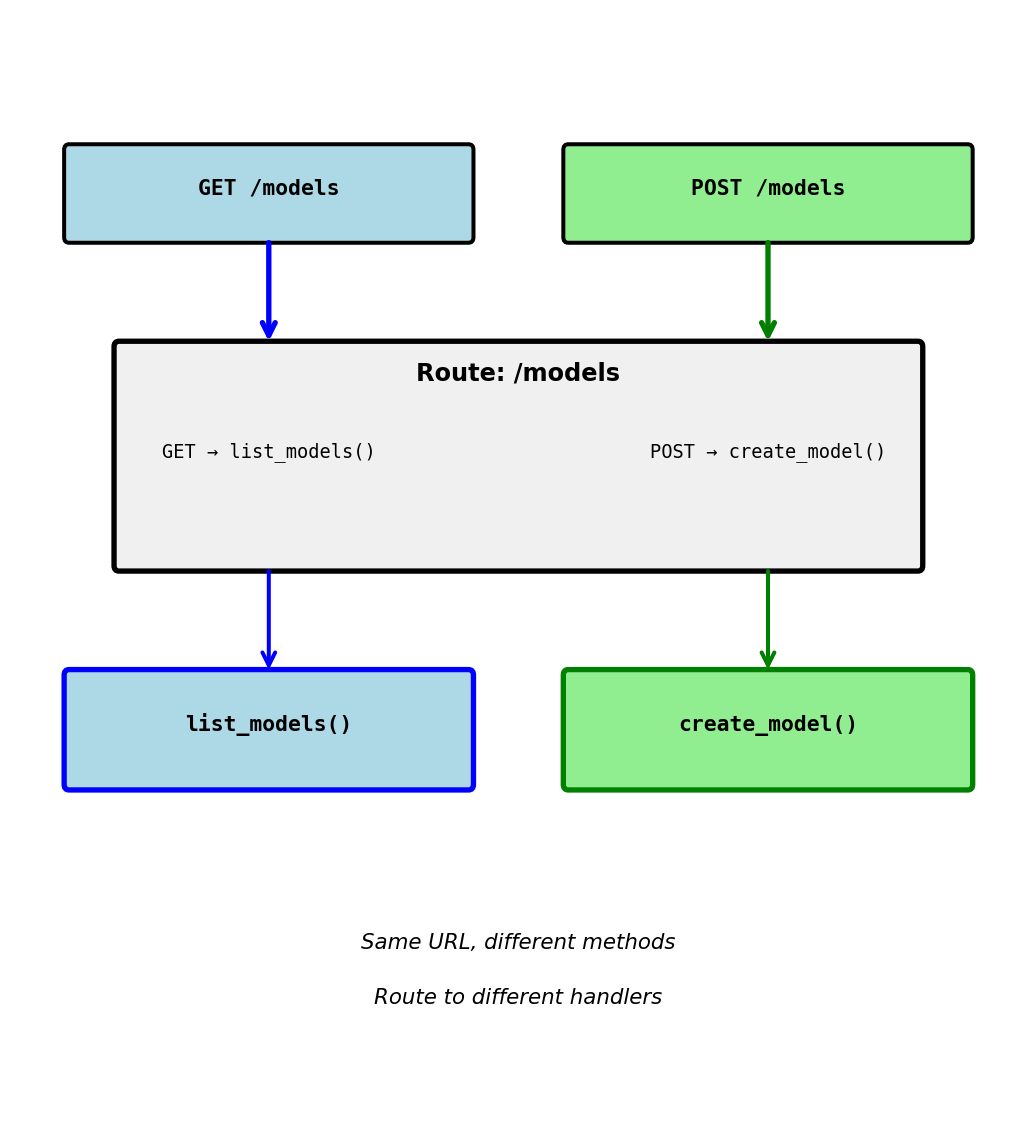

HTTP Methods in Routes

Restricting which HTTP methods a route accepts

Same URL, different methods:

- GET /models → calls list_models()

- POST /models → calls create_model()

- PUT /models → 405 Method Not Allowed

Why separate by method:

- GET: Read data (list models)

- POST: Create data (new model)

- Different operations, different functions

- Clear separation of concerns

Default is GET only:
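A sketch of both cases, assuming Flask is installed and using a hypothetical in-memory `MODELS` list: two handlers share `/models` and differ by method, while `/status` omits `methods` and therefore accepts GET only.

```python
from flask import Flask

app = Flask(__name__)
MODELS = []  # hypothetical in-memory store

@app.route('/models', methods=['GET'])
def list_models():
    return {"models": MODELS}

@app.route('/models', methods=['POST'])
def create_model():
    MODELS.append({"id": len(MODELS) + 1})
    return MODELS[-1], 201

@app.route('/status')  # no methods argument: GET only
def status():
    return {"ok": True}

client = app.test_client()
```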

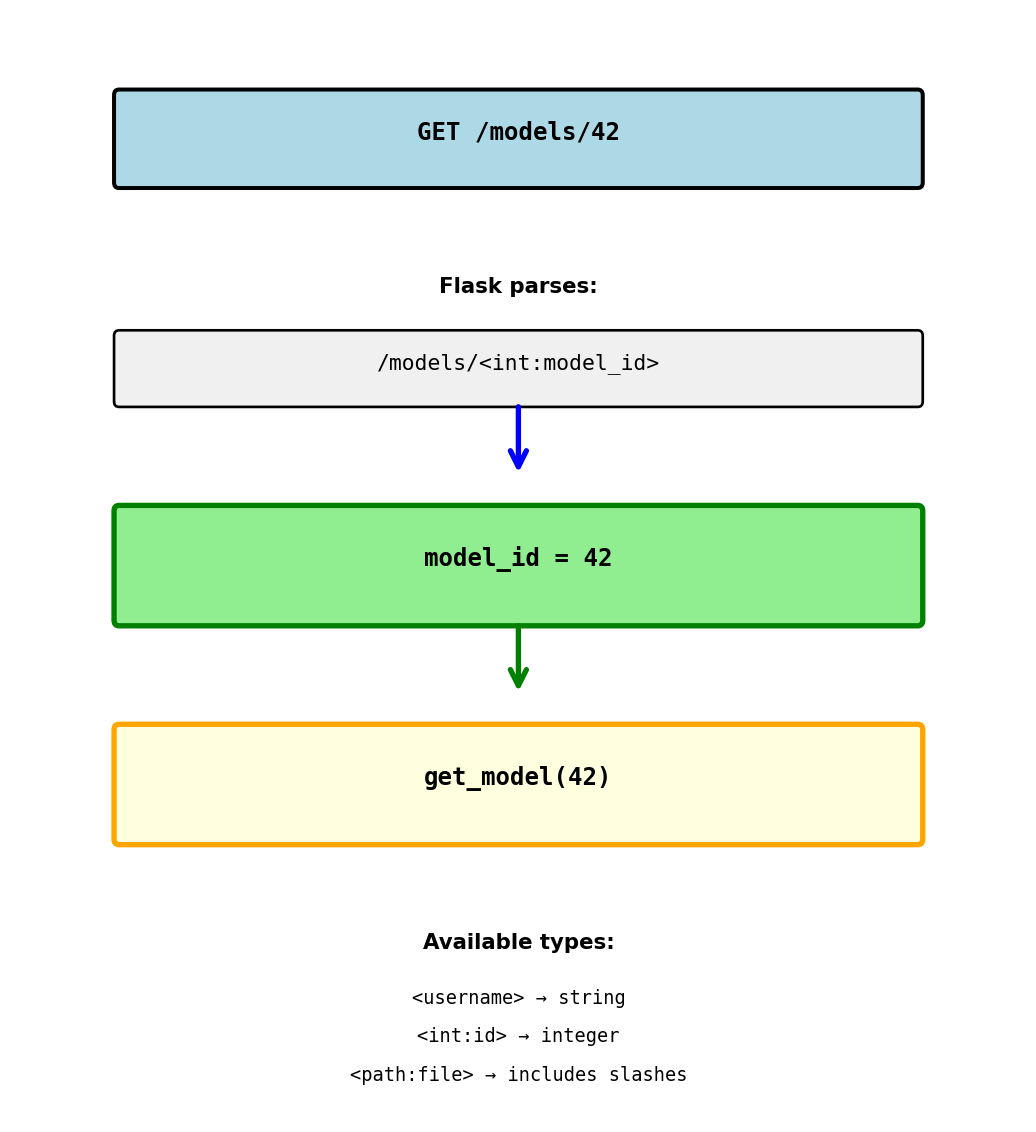

URL Parameters

Capturing values from the URL

URL: GET /models/42 Result: model_id = "42" (string)

Type conversion:

URL: GET /models/42 Result: model_id = 42 (integer)

URL: GET /models/abc Result: 404 Not Found (can’t convert to int)

Multiple parameters:

URL: GET /models/42/predictions/xyz Result: model_id = 42, pred_id = "xyz"
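The URL patterns above might be declared as follows, assuming Flask is installed. The handler names are hypothetical; the `<int:...>` converter performs the type conversion and produces the 404 for non-numeric values.

```python
from flask import Flask

app = Flask(__name__)

@app.route('/models/<int:model_id>')
def get_model(model_id):
    # model_id arrives as an int because of the converter
    return {"model_id": model_id, "type": type(model_id).__name__}

@app.route('/models/<int:model_id>/predictions/<pred_id>')
def get_prediction(model_id, pred_id):
    # pred_id has no converter, so it stays a string
    return {"model_id": model_id, "pred_id": pred_id}

client = app.test_client()
```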

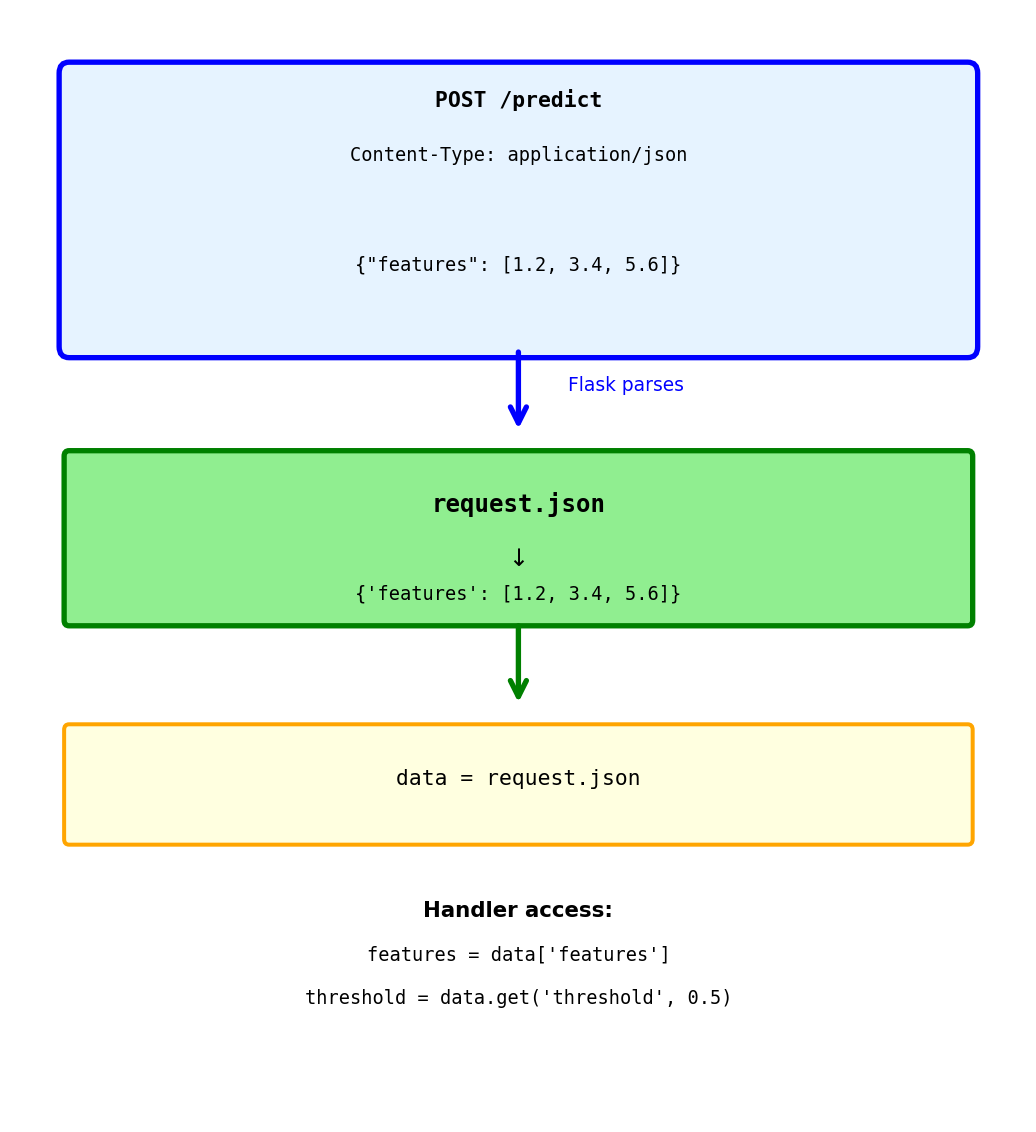

Accessing Request Data: JSON Body

Reading JSON from request body

Client sends:

POST /predict

Content-Type: application/json

{"features": [1.2, 3.4, 5.6]}

Flask automatically:

- Checks Content-Type header

- Parses JSON string

- Creates Python dict

- Makes available as request.json

Safe access with get():

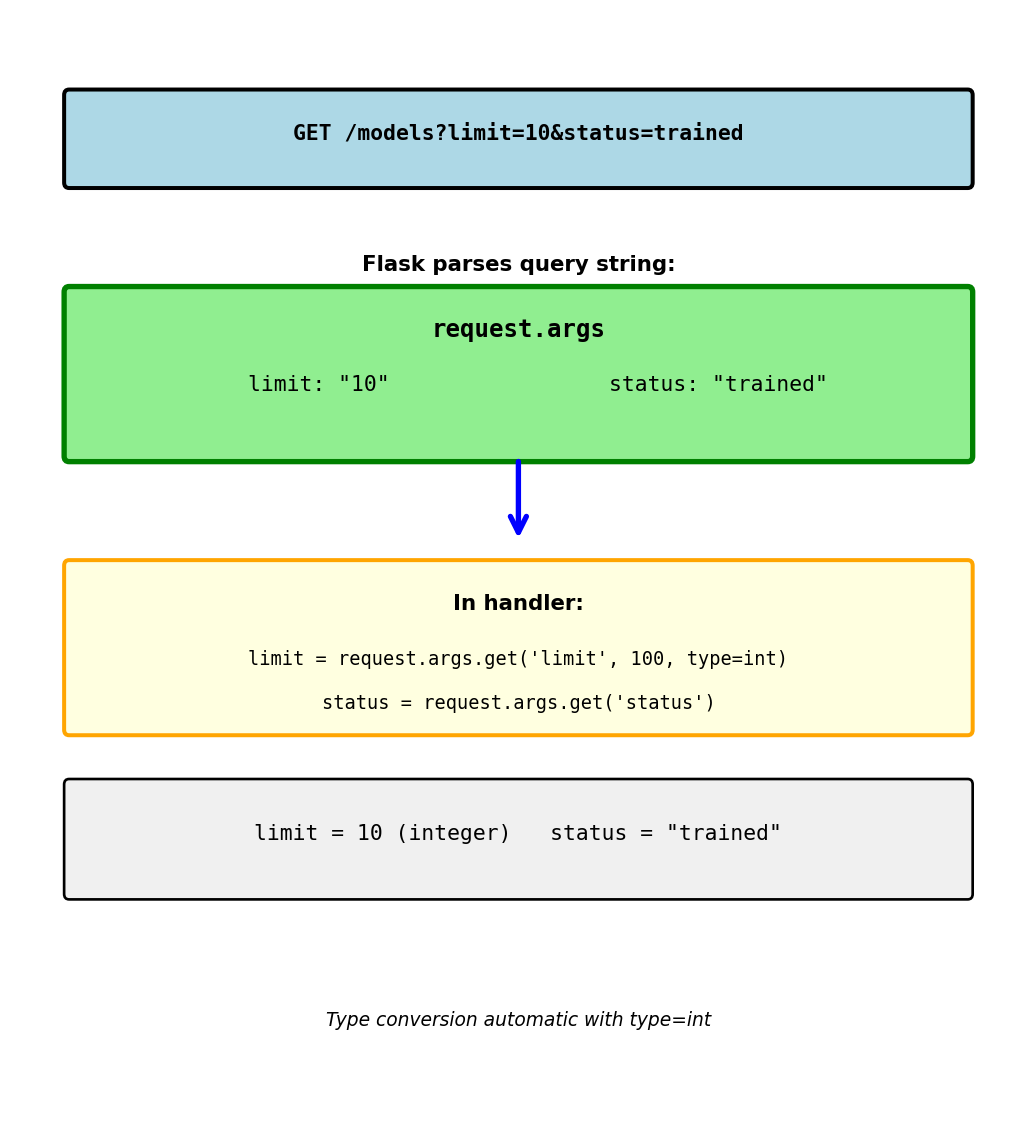

Accessing Request Data: Query Parameters

Reading parameters from URL query string

Query string after ? in URL:

- Key-value pairs: key=value

- Multiple params: & separator

- Example: /models?limit=10&status=trained

request.args.get() parameters:

- First arg: parameter name

- Second arg: default value if missing

- type=int: convert to integer

Without default:

With default:
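Both cases in one hypothetical handler, assuming Flask is installed: `status` is read without a default and `limit` with a default plus `type=int`.

```python
from flask import Flask, request

app = Flask(__name__)

@app.route('/models')
def list_models():
    status = request.args.get('status')              # None when the parameter is absent
    limit = request.args.get('limit', 10, type=int)  # 10 when absent or not an integer
    return {'status': status, 'limit': limit}

client = app.test_client()
```

When the value cannot be converted (for example `?limit=abc`), `get` falls back to the default instead of raising, so the handler should still range-check the result.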

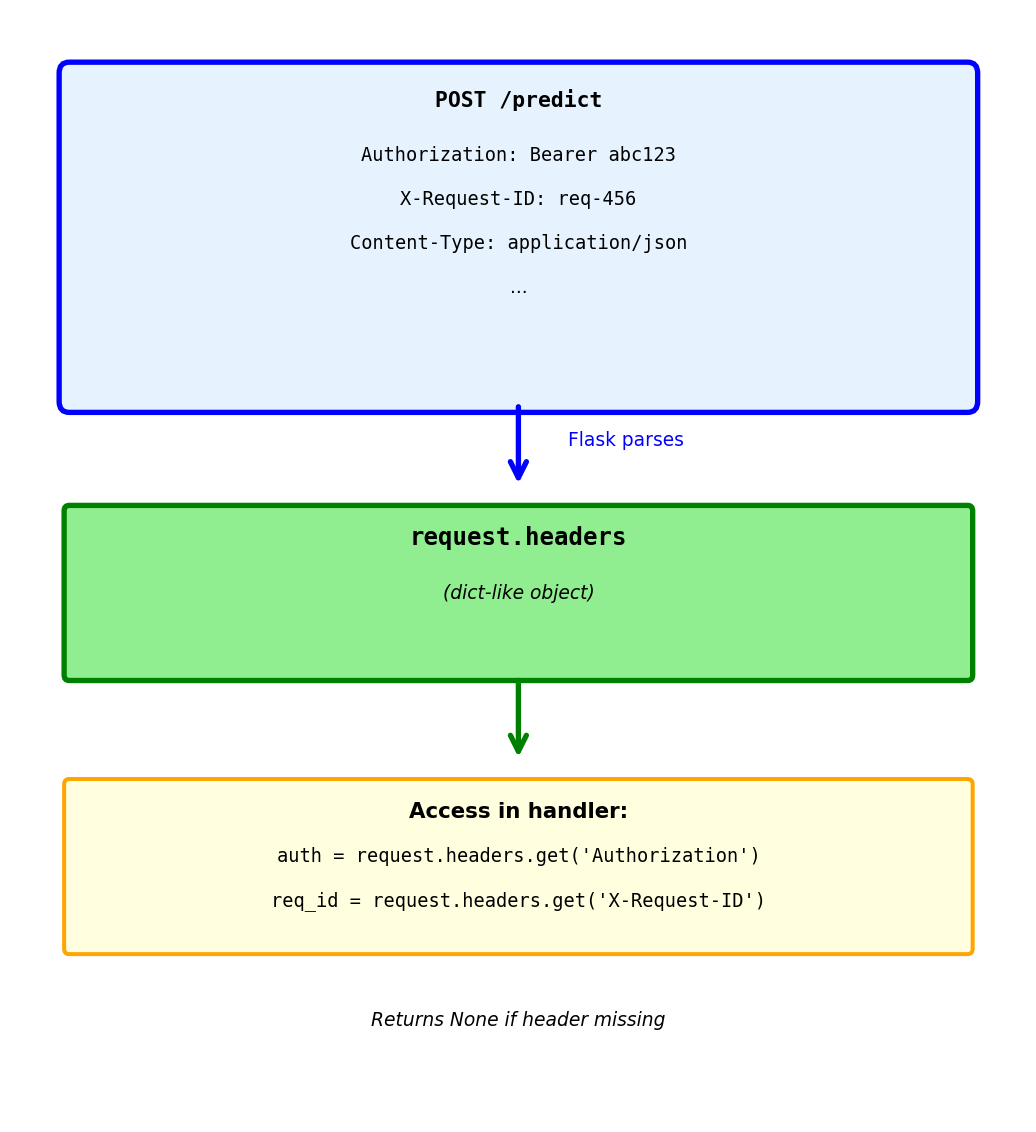

Accessing Request Data: Headers

Reading HTTP headers

@app.route('/predict', methods=['POST'])

def predict():

# Authorization header

auth = request.headers.get('Authorization')

# "Bearer eyJhbGci..."

# Custom headers

request_id = request.headers.get('X-Request-ID')

# Content type

content_type = request.headers.get('Content-Type')

# Validate token

if not auth:

return {'error': 'Missing authorization'}, 401

if not validate_token(auth):

return {'error': 'Invalid token'}, 401

# Process request

return {'prediction': 0.87}

Common headers:

- Authorization: Auth tokens

- Content-Type: Body format

- X-Request-ID: Request tracking

- User-Agent: Client information

Headers case-insensitive:

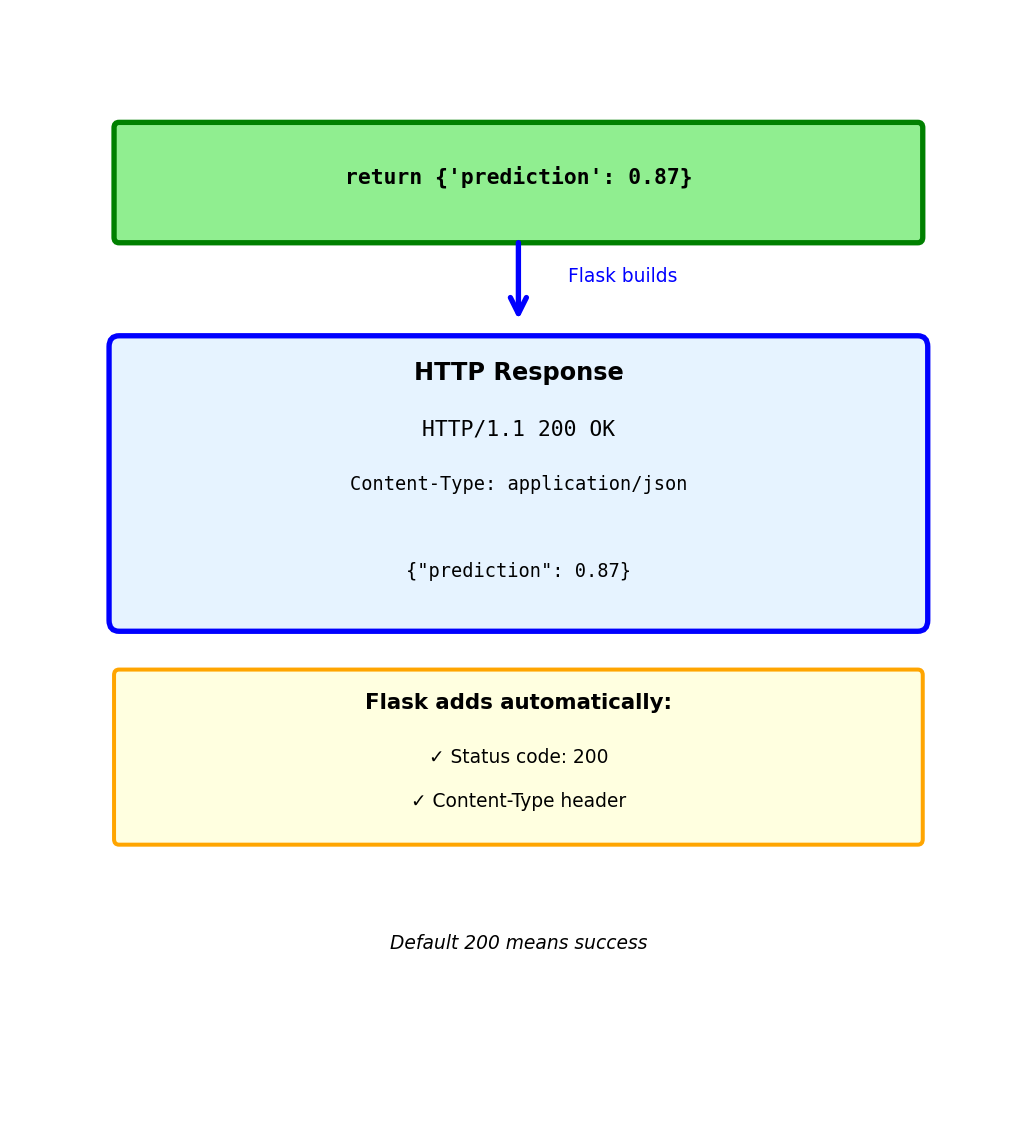

Building Responses: Simple Return

Return dict → Flask converts to JSON

Response Flask generates:

HTTP/1.1 200 OK

Content-Type: application/json

{"prediction": 0.87}

Flask automatically:

- Sets status code to 200 (success)

- Sets Content-Type to application/json

- Converts Python dict to JSON string

- Returns properly formatted HTTP response

This is the most common pattern:

- Simple and clean

- Works for most GET/PUT requests

- Default 200 status appropriate for success

Building Responses: Custom Status Code

Return tuple: (data, status_code)

Response:

HTTP/1.1 201 Created

Content-Type: application/json

{"id": 42}

When to use different status codes:

201 Created - Resource successfully created (POST)

204 No Content - Success but no data to return (DELETE)

404 Not Found - Resource doesn’t exist

422 Unprocessable Entity - Validation failed

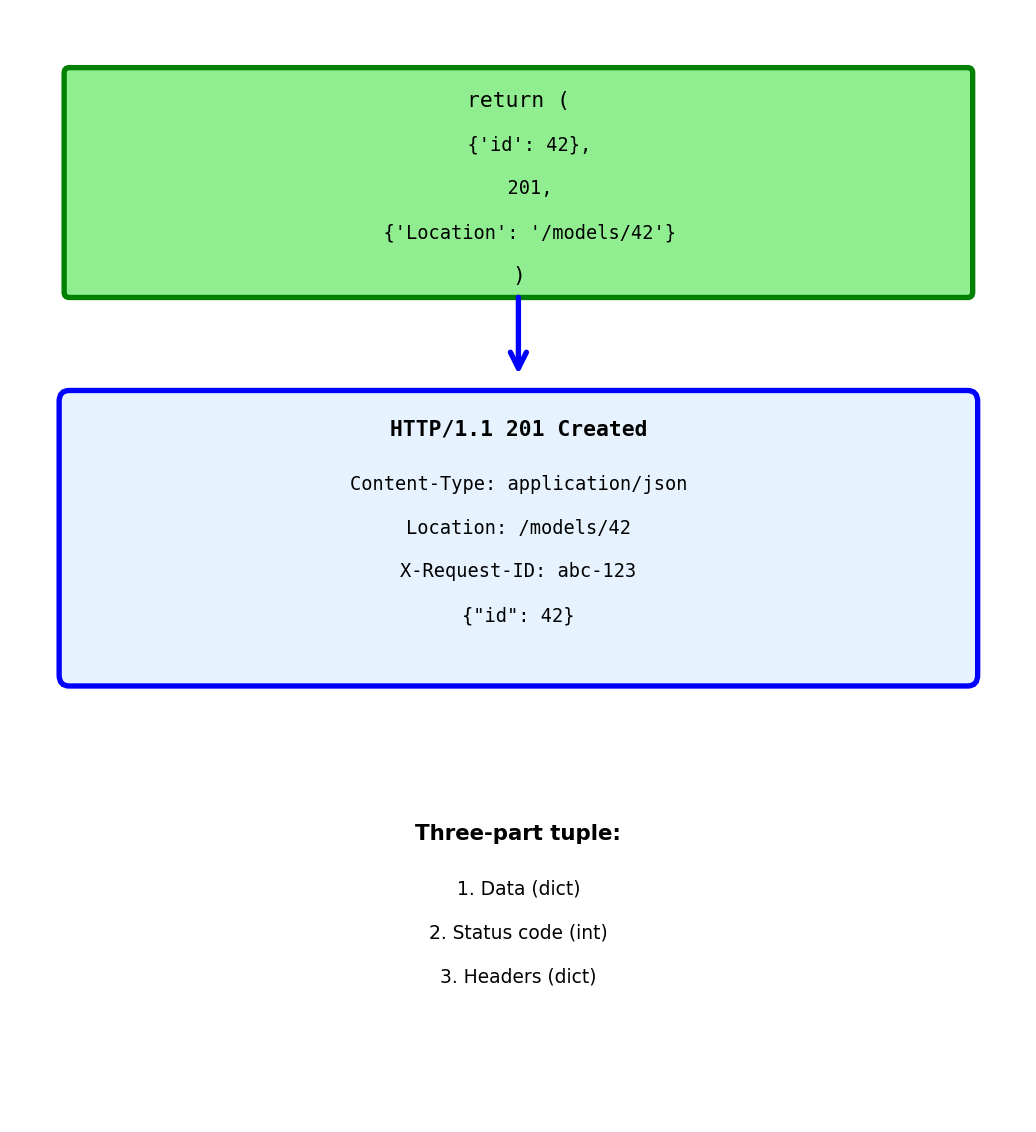

Building Responses: Adding Headers

Return tuple: (data, status, headers)

Response:

HTTP/1.1 201 Created

Content-Type: application/json

Location: /models/42

X-Request-ID: abc-123

{"id": 42}

Common response headers:

Location - URL of newly created resource

X-Request-ID - Echo back for tracking

Cache-Control - Control caching

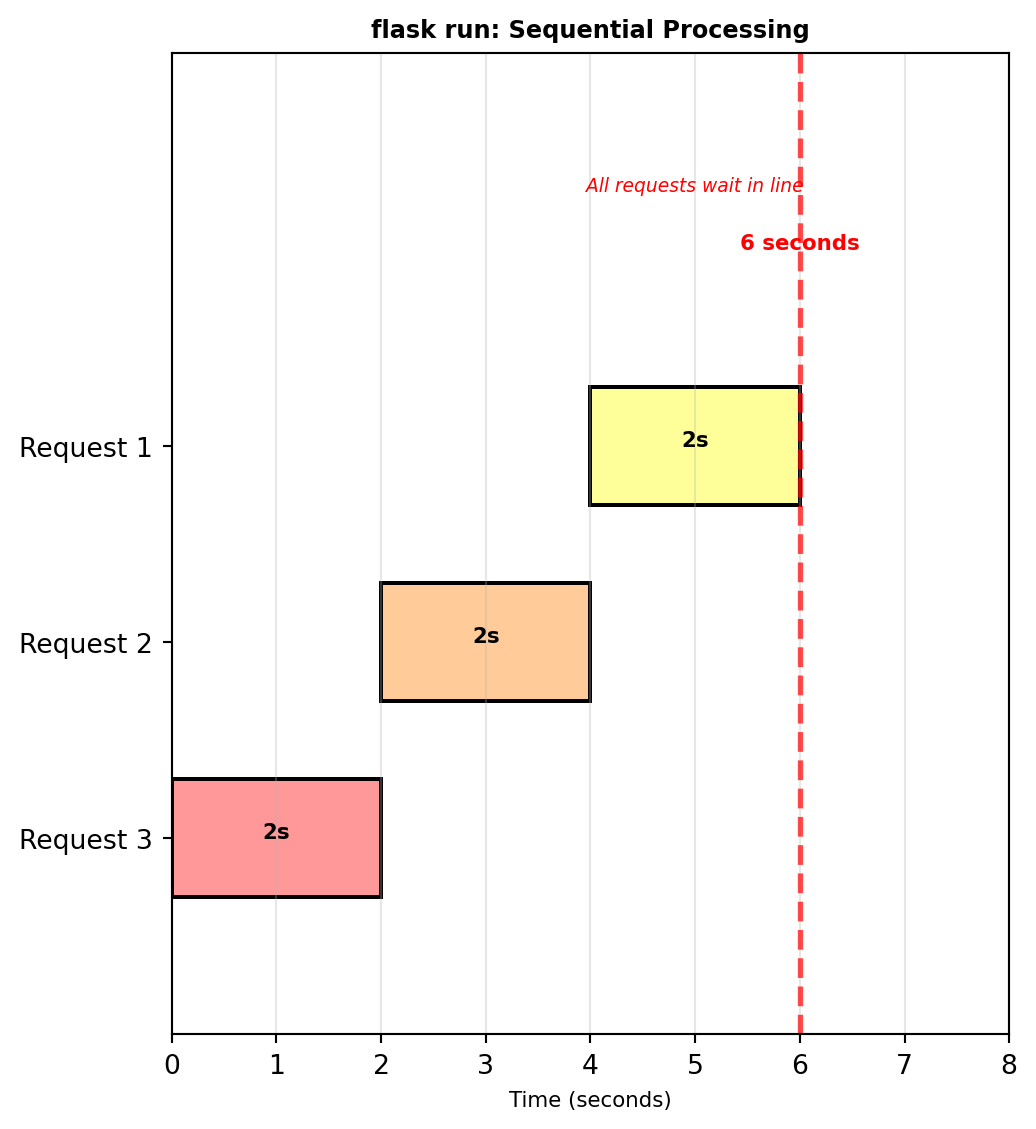

Production: Why Not flask run

Development server not for production

Problems:

- Single process, single thread

- Handles one request at a time

- No crash recovery

- Debug mode exposes code

- Poor performance under load

Example:

With flask run:

- Request 1: 0-2 seconds

- Request 2: 2-4 seconds (waits)

- Request 3: 4-6 seconds (waits)

- All sequential, no concurrency

Production needs:

- Multiple worker processes

- Concurrent request handling

- Automatic crash recovery

- Process management

Single process means:

- One request blocks others

- No parallelism

- Poor resource usage

- Unacceptable for production

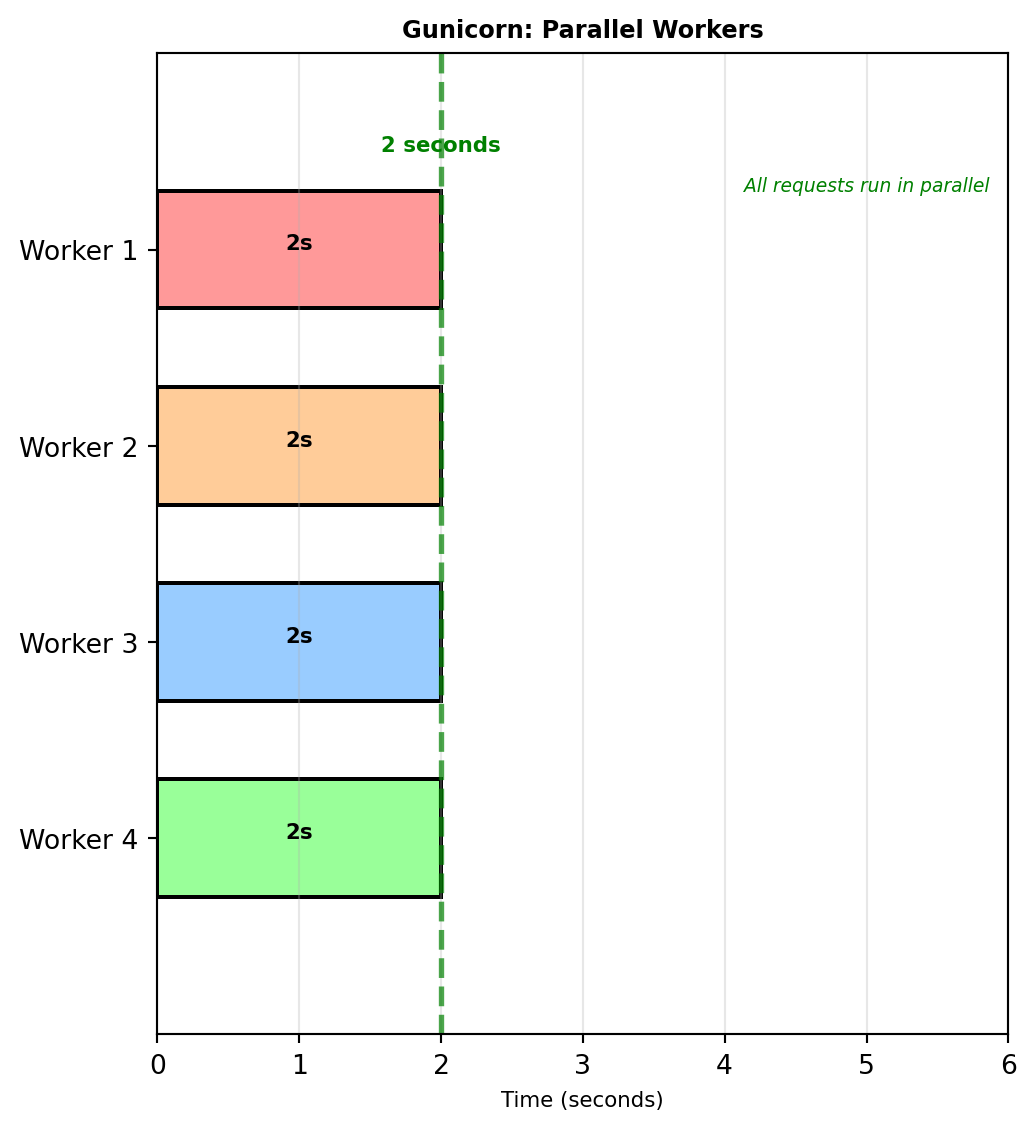

Production: Gunicorn with Workers

Gunicorn - Production WSGI server

What this does:

- Starts 4 separate worker processes

- Each worker handles one request at a time

- 4 concurrent requests possible

- Load balanced across workers

Worker calculation:

workers = (CPU cores × 2) + 1

- 2-core machine → 5 workers

- 4-core machine → 9 workers

Same 2-second prediction with 4 workers:

- Requests 1-4: All start at 0s, finish at 2s

- Request 5: Starts at 2s when worker frees

4× improvement for concurrent requests

Configuration file:
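Gunicorn reads its configuration from a Python file, so the worker formula can be computed at startup. A sketch of such a file, where the bind address, timeout, and the `app:app` module path mentioned below are assumptions for illustration:

```python
# gunicorn.conf.py (sketch)
import multiprocessing

bind = "0.0.0.0:8000"                          # address and port to listen on
workers = multiprocessing.cpu_count() * 2 + 1  # (CPU cores x 2) + 1
timeout = 30                                   # seconds before a silent worker is restarted
accesslog = "-"                                # write the access log to stdout
```

With this file in place, the server could be started with `gunicorn -c gunicorn.conf.py app:app`, assuming the Flask object is named `app` inside `app.py`.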

Multiple workers = concurrent processing

Each worker is independent process

Static Files: Production Strategies

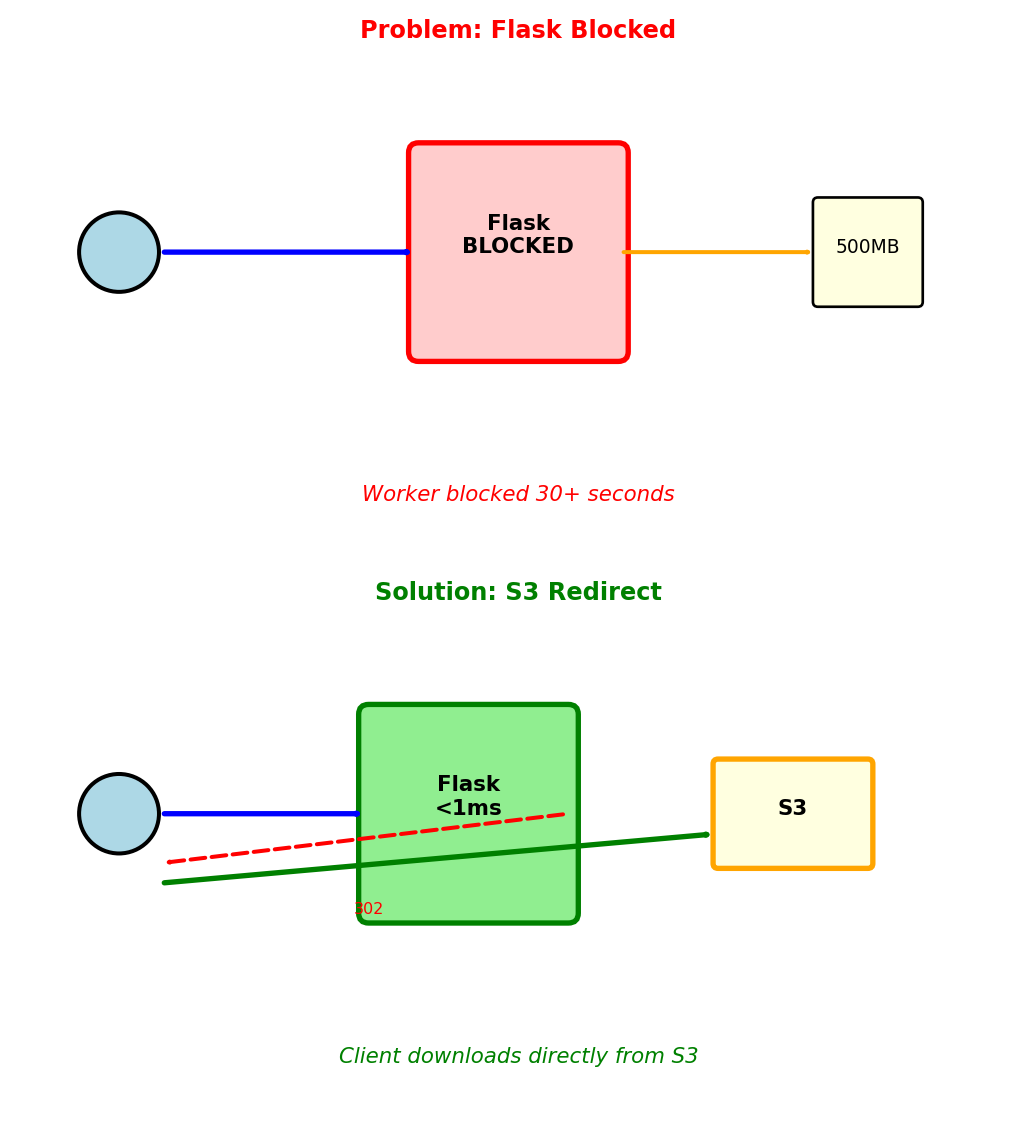

Flask serves static files synchronously, blocking the worker for the entire transfer

What happens:

- Flask worker reads 500MB file

- Sends to client over network

- Worker blocked 30+ seconds

- 4 concurrent downloads = all workers blocked

Solution 1: Nginx serves static files

Nginx handles /static/* directly

Flask never sees these requests

Workers free for API calls

Solution 2: S3 redirect pattern

Flow:

- Client requests file from Flask

- Flask returns 302 redirect to S3

- Client downloads directly from S3

- Flask worker free in <1ms

Use S3 redirect for: Large files (>10MB), model weights, datasets, user uploads

API Contracts Define and Enforce Interfaces

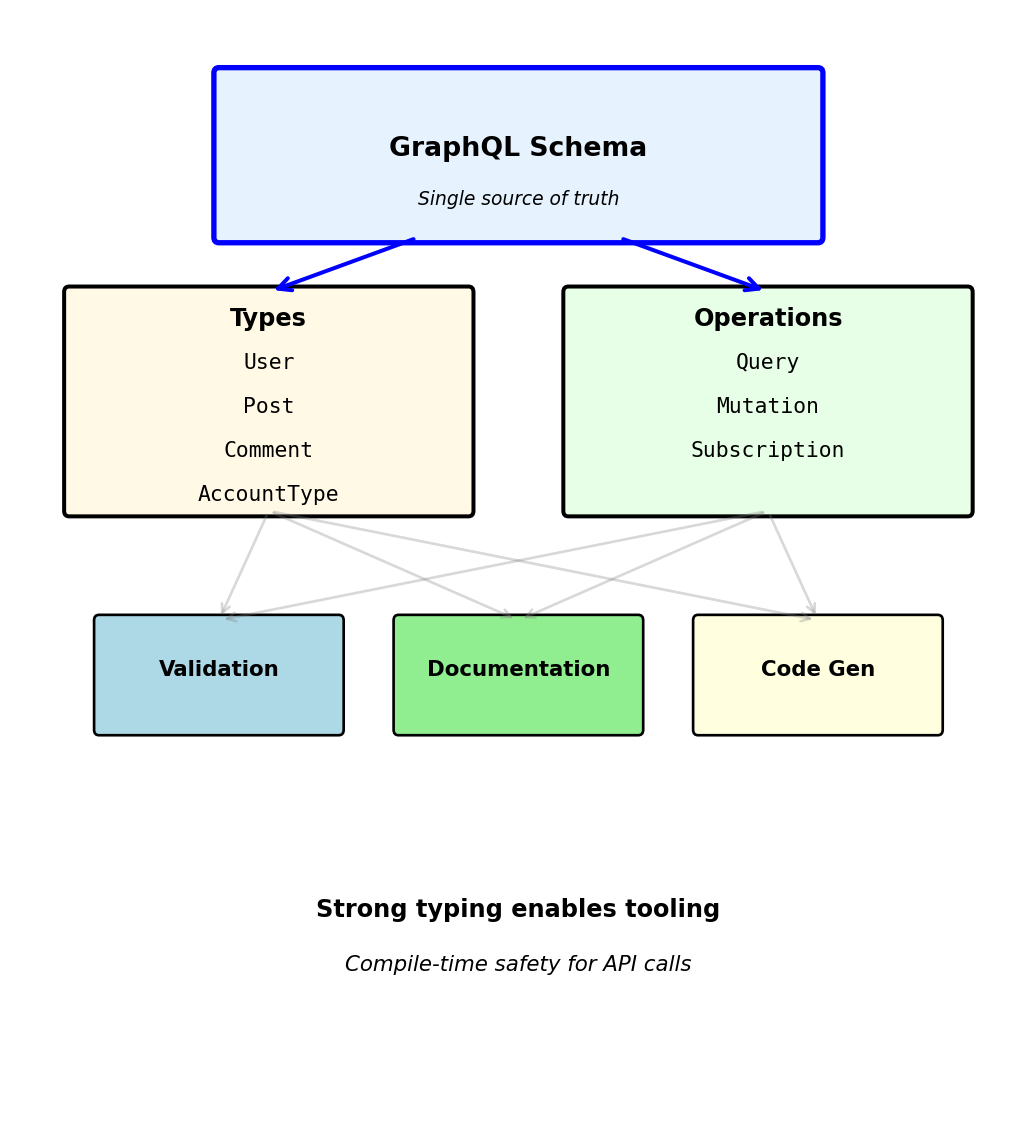

OpenAPI Specification

OpenAPI defines API structure in machine-readable format

Specification written in YAML or JSON, describes:

- Available endpoints and operations

- Request parameters and body schemas

- Response formats and status codes

- Authentication requirements

- Data types and constraints

Example specification for user endpoint:

openapi: 3.0.0

info:

title: User Service API

version: 2.1.0

paths:

/users/{userId}:

get:

parameters:

- name: userId

in: path

required: true

schema:

type: integer

minimum: 1

responses:

'200':

description: User found

content:

application/json:

schema:

$ref: '#/components/schemas/User'

'404':

description: User not found

components:

schemas:

User:

type: object

required: [user_id, email, is_active]

properties:

user_id: {type: integer}

email: {type: string, format: email}

is_active: {type: boolean}

engagement_score: {type: number, minimum: 0, maximum: 100}

Specification serves multiple purposes:

1. Documentation source

- Swagger UI generates interactive docs

- Always synchronized with implementation

- Developers explore API without writing code

2. Validation layer

- Request validation against schema

- Response validation before sending

- Type checking and constraint enforcement

3. Code generation

- Server stubs with routing

- Client SDKs in multiple languages

- Type-safe API calls

4. Contract testing

- Verify implementation matches spec

- Detect breaking changes

- Test compliance automatically

Specification-first development:

Write spec → Generate code → Implement handlers

Ensures API design considered before implementation details

Alternative: Code-first

Write code → Generate spec from annotations

Easier to start, harder to maintain consistency

Schema Validation

OpenAPI schemas define data structures with constraints

ML prediction endpoint schema:

paths:

/models/{modelId}/predict:

post:

parameters:

- name: modelId

in: path

schema: {type: string, pattern: '^[a-z0-9-]+$'}

requestBody:

required: true

content:

application/json:

schema:

type: object

required: [features, model_version]

properties:

features:

type: array

items: {type: number}

minItems: 10

maxItems: 10

model_version:

type: string

enum: [v1.0, v1.1, v2.0]

threshold:

type: number

minimum: 0.0

maximum: 1.0

default: 0.5

responses:

'200':

content:

application/json:

schema:

type: object

required: [prediction, confidence]

properties:

prediction: {type: number}

confidence: {type: number, minimum: 0, maximum: 1}

Schema constraints validated automatically:

- Type checking: string vs number vs array

- Array length: exactly 10 features required

- Value ranges: threshold between 0 and 1

- Enum validation: model_version must match list

- Required fields: features and model_version mandatory

Invalid requests rejected before processing:

Missing required field:

Wrong array length:

Invalid enum value:

Validation prevents:

- Type errors in application code

- Database constraint violations

- Model inference crashes

- Invalid computation results

Schema validation rejects bad requests in microseconds — before the server spends time on database queries or model inference
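To make the constraints concrete, here is a hand-written check that mirrors the prediction request schema (required fields, exactly 10 numeric features, the `model_version` enum, and the `threshold` range). In practice a validator generated from the OpenAPI document would do this; the function name and error strings are assumptions.

```python
ALLOWED_VERSIONS = ("v1.0", "v1.1", "v2.0")

def is_number(value):
    # bool is a subclass of int in Python, so exclude it explicitly
    return isinstance(value, (int, float)) and not isinstance(value, bool)

def validate_prediction_request(body):
    """Return a list of error strings; an empty list means the body is valid."""
    if not isinstance(body, dict):
        return ["body: must be a JSON object"]
    errors = []
    for field in ("features", "model_version"):
        if field not in body:
            errors.append(f"{field}: required field missing")
    if "features" in body:
        features = body["features"]
        if not isinstance(features, list) or not all(is_number(x) for x in features):
            errors.append("features: must be an array of numbers")
        elif len(features) != 10:
            errors.append(f"features: expected 10 items, got {len(features)}")
    if "model_version" in body and body["model_version"] not in ALLOWED_VERSIONS:
        errors.append(f"model_version: must be one of {list(ALLOWED_VERSIONS)}")
    threshold = body.get("threshold", 0.5)  # schema default
    if not is_number(threshold) or not 0.0 <= threshold <= 1.0:
        errors.append("threshold: must be a number between 0.0 and 1.0")
    return errors

valid = {"features": [0.1] * 10, "model_version": "v1.1"}
missing_field = {"features": [0.1] * 10}
wrong_length = {"features": [1.2, 3.4, 5.6], "model_version": "v1.0"}
bad_enum = {"features": [0.1] * 10, "model_version": "v3.0"}
```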

Specification-Driven Design

Single OpenAPI specification generates multiple artifacts

1. Interactive documentation (Swagger UI)

Browsable interface with:

- List of all endpoints grouped by resource

- Request/response examples

- Try-it-out functionality for live testing

- Schema definitions with types and constraints

- Authentication requirements

Developers test endpoints without writing client code

2. Server stubs

Generated code includes:

- Route definitions matching specification

- Request parsing and validation

- Response serialization

- Type hints (in typed languages)

- Handler function signatures

# Generated from OpenAPI spec

@app.route('/models/<model_id>/predict', methods=['POST'])

def predict_model(model_id: str):

# Request already validated against schema

body = request.json # Type: PredictionRequest

# Implement business logic here

result = run_prediction(model_id, body['features'])

# Response validated before sending

return {'prediction': result, 'confidence': 0.87}

3. Client SDKs

Type-safe client libraries:

# Generated Python client

from api_client import UserServiceClient

client = UserServiceClient(base_url='https://api.example.com')

# Method signatures from spec

user = client.get_user(user_id=123) # Type: User

print(user.email) # IDE autocomplete knows fields

# Type checker catches errors

client.get_user(user_id="abc") # Error: expected int

4. Request validation middleware

Automatically generated validators:

# Validates before handler executes

def validate_request(spec):

def decorator(f):

def wrapper(*args, **kwargs):

# Check request matches spec

errors = validate_against_schema(

request,

spec['paths'][request.path]

)

if errors:

return {'error': errors}, 400

return f(*args, **kwargs)

return wrapper

return decorator

5. Mock servers

Generate mock API from specification:

- Returns example responses

- Validates request format

- Enables frontend development before backend complete

Code generation tools:

- OpenAPI Generator: 40+ language targets

- Swagger Codegen: Server and client generation

- Prism: Mock server from specification

- Redoc: Alternative documentation renderer

Specification as single source of truth:

Change spec → Regenerate all artifacts

Documentation, validation, and client code stay synchronized

Manual maintenance alternative:

- Write documentation separately (becomes outdated)

- Write validation logic per endpoint (inconsistent)

- Manually create client libraries (error-prone)

- Update all three when API changes (forgotten)

Machine-readable specification prevents divergence

API Versioning

APIs evolve but clients update slowly

Version placement options:

URL path versioning (most common):

GET /v1/users/123

GET /v2/users/123

Advantages:

- Version immediately visible in URL

- Easy to route in load balancer

- Clear in logs and monitoring

Disadvantages:

- URL changes with version

- The same user resource has a different URL in each version

Header versioning:

GET /users/123

Accept: application/vnd.api.v1+json

GET /users/123

Accept: application/vnd.api.v2+json

Advantages:

- URLs remain stable

- Content negotiation pattern

Disadvantages:

- Version not visible in URL

- Harder to test in browser

- Requires header inspection

Custom header:

GET /users/123

API-Version: 1

GET /users/123

API-Version: 2

Similar trade-offs to Accept header

Query parameter (not recommended):

GET /users/123?version=1

GET /users/123?version=2

Disadvantages:

- Mixes version with filtering parameters

- Caching issues (query params affect cache key)

Version granularity:

Major versions (breaking changes):

- v1 → v2: Field removed or renamed

- v2 → v3: Response structure changed

- Requires separate implementation

Minor versions (additions):

- v2.0 → v2.1: New optional field added

- v2.1 → v2.2: New endpoint added

- Backward compatible within major version

Semantic versioning pattern:

MAJOR.MINOR.PATCH

- MAJOR: Breaking changes (v1 → v2)

- MINOR: New features, backward compatible (v2.0 → v2.1)

- PATCH: Bug fixes (v2.1.0 → v2.1.1)

When to increment major version:

- Removing endpoint

- Removing required field from response

- Adding required field to request

- Changing field type

- Renaming field

- Changing authentication method

Backward compatible additions:

- New optional request field

- New field in response (clients ignore unknown)

- New endpoint

- New optional query parameter

- New HTTP status code for new error case

Parallel version support:

Both versions active simultaneously:

Breaking vs Compatible Changes

Breaking change: Modification that causes existing clients to fail

Common breaking changes:

Field removal:

Client code accessing response['phone'] raises KeyError

Field rename:

Client parsing created field receives KeyError

Type change:

Client expecting string, performs string operations on number → TypeError

New required field:

// v1 request

POST /bookings

{"flight_id": 456, "user_id": 123}

// v2 request (requires seat_class)

POST /bookings

{"flight_id": 456, "user_id": 123, "seat_class": "economy"}

Old clients missing seat_class → 400 Bad Request

Status code change:

// v1: Returns 200 OK when user not found (empty result)

// v2: Returns 404 Not Found when user not found

Client checking status == 200 for success misses 404 case

Non-breaking changes (backward compatible):

Adding optional field to response:

Old clients ignore unknown created_at field

Adding optional request parameter:

// v1: GET /flights?departure=LAX

// v2: GET /flights?departure=LAX&max_price=500

Old clients don’t send max_price, server uses default behavior

Adding new endpoint:

// v1: GET /users, POST /users

// v2: GET /users, POST /users, GET /users/search

Old clients unaware of /users/search, continue using existing endpoints

Adding new HTTP method to existing endpoint:

// v1: GET /users/123

// v2: GET /users/123, PATCH /users/123

Old clients only use GET, PATCH addition doesn’t affect them

Deprecation headers indicate future removal:

HTTP/1.1 200 OK

Deprecation: true

Sunset: Wed, 31 Dec 2025 23:59:59 GMT

Link: </v2/users/123>; rel="successor-version"

Clients warned field or endpoint will be removed

Contract testing prevents breaking changes:

Test fails if response structure changes, preventing accidental breaking changes
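A contract test along these lines could look like the sketch below. It checks a response body against the `User` fields from the OpenAPI example (`user_id`, `email`, `is_active`); the helper name and the sample bodies are assumptions, and real suites usually validate against the specification file itself.

```python
EXPECTED_USER_FIELDS = {"user_id": int, "email": str, "is_active": bool}

def contract_violations(response_body, expected=EXPECTED_USER_FIELDS):
    """List missing or wrongly typed fields; extra fields are allowed."""
    problems = []
    for field, expected_type in expected.items():
        if field not in response_body:
            problems.append(f"missing field: {field}")
        elif not isinstance(response_body[field], expected_type):
            actual = type(response_body[field]).__name__
            problems.append(f"wrong type for {field}: {actual}")
    return problems

def test_user_contract():
    # An added optional field (created_at) must not fail the contract
    body = {"user_id": 123, "email": "alice@example.com",
            "is_active": True, "created_at": "2025-01-15"}
    assert contract_violations(body) == []

removed_field = contract_violations({"user_id": 123, "is_active": True})
changed_type = contract_violations(
    {"user_id": "123", "email": "alice@example.com", "is_active": True}
)
```

Field removal and type changes are reported, while additive changes pass, which matches the breaking versus compatible split described above.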

Version Migration: Operational Constraints

Removing old API versions requires full client migration

Parallel operation is mandatory:

- Deploy v2 alongside v1

- Existing clients continue on v1 uninterrupted

- New clients adopt v2 immediately

- Server maintains both implementations simultaneously

Monitor adoption via request logs:

Cannot remove v1 until 0% traffic remains

Signal deprecation through HTTP headers:

HTTP/1.1 200 OK

Deprecation: true

Sunset: Mon, 15 Sep 2025 23:59:59 GMT

Link: </docs/v2-migration>; rel="deprecation-policy"

Clients can programmatically detect pending removal

Gradual enforcement before shutdown:

- Make v1 read-only: GET continues, mutations return 410 Gone

- Identify remaining clients by API key / User-Agent

- Contact directly for migration assistance

Clients that block migration:

SELECT client_id, COUNT(*) as requests

FROM api_logs

WHERE version = 'v1'

AND timestamp > NOW() - INTERVAL '7 days'

GROUP BY client_id

ORDER BY requests DESC;

-- batch-job-1: 8,234 (automated, no owner)

-- mobile-app: 2,109 (old app version)

-- partner-api: 1,876 (quarterly release cycle)

-- unknown: 234 (API key ownership lost)

Forgotten batch jobs, outdated mobile apps, and third-party integrations with slow release cycles are the typical blockers

Final shutdown returns 410 Gone:

HTTP/1.1 410 Gone

{

"error": "API v1 has been retired",

"migration_guide": "/docs/v1-to-v2",

"support": "api-support@example.com"

}

Cost of maintaining parallel versions:

- Duplicate code, tests, and security patches

- Monitoring and support for both

- Roughly doubles development and testing burden during overlap

Internal API migrations can take months; external deprecations can stretch considerably longer

Error Response Structure

Structured errors provide actionable information

Basic error response:

Detailed validation errors:

Rate limit error with retry information:

Resource not found with suggestions:

Error response components:

1. Machine-readable code

Enables programmatic handling:

if response.status_code in (400, 429):

error = response.json()['error']

if error['code'] == 'VALIDATION_ERROR':

# Fix validation issues

for detail in error['details']:

log.warning(f"Field {detail['field']}: {detail['message']}")

elif error['code'] == 'RATE_LIMIT_EXCEEDED':

# Wait and retry

time.sleep(error['retry_after'])

2. Human-readable message

For developer debugging and logs

3. Context-specific details

Field-level errors for validation failures

4. Actionable information

Rate limits include reset time and retry delay

5. Request correlation

Include in support tickets for log correlation

6. Documentation links

Error code categories:

- VALIDATION_ERROR: Client sent invalid data

- AUTHENTICATION_ERROR: Token missing or invalid

- AUTHORIZATION_ERROR: Valid token, insufficient permissions

- RATE_LIMIT_EXCEEDED: Too many requests

- RESOURCE_NOT_FOUND: Requested resource doesn’t exist

- CONFLICT: Operation conflicts with current state

- SERVER_ERROR: Internal server failure

Consistent error structure across all endpoints
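One way to keep the structure consistent is to build every error through a single helper. The sketch below uses the keys the client code above reads (`code`, `details`, `retry_after`); the helper name, the `request_id` key, and the sample values are assumptions.

```python
def error_response(code, message, status, details=None, request_id=None, **extra):
    """Build a (body, status) pair with the same shape for every error."""
    error = {"code": code, "message": message}
    if details:
        error["details"] = details        # field-level validation errors
    if request_id:
        error["request_id"] = request_id  # for log correlation
    error.update(extra)                   # e.g. retry_after for rate limits
    return {"error": error}, status

validation_error = error_response(
    "VALIDATION_ERROR", "Request body is invalid", 400,
    details=[{"field": "email", "message": "Invalid email format"}],
    request_id="abc-123",
)
rate_limit_error = error_response(
    "RATE_LIMIT_EXCEEDED", "Too many requests", 429, retry_after=30,
)
```

Since the pair is `(body, status)`, a Flask handler can return it directly.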

Pagination: Offset-Based

Large collections require pagination

Collection with 2,500 users:

Without pagination: GET /users

- Returns all 2,500 users

- Response size: 3.8 MB

- Load time: 6-8 seconds

- Client memory: Entire collection

With pagination: GET /users?limit=50&offset=0

- Returns 50 users

- Response size: 76 KB (50× smaller)

- Load time: 120ms (50× faster)

- Client memory: Current page only

Offset-based pagination parameters:

- limit: Number of items per page (page size)

- offset: Number of items to skip (starting position)

Fetching pages:

Page 1 (users 1-50):

GET /users?limit=50&offset=0

Page 2 (users 51-100):

GET /users?limit=50&offset=50

Page 3 (users 101-150):

GET /users?limit=50&offset=100

Formula: offset = (page_number - 1) × limit

Pagination metadata in response:

Offset pagination with filters:

GET /users?status=active&limit=50&offset=0

Filter applied before pagination:

1. Query users where status='active' (1,200 matching)

2. Skip first 0 users

3. Return next 50 users

Offset pagination advantages:

- Simple to implement

- Easy to understand

- Can jump to arbitrary page

- Total count available

Offset pagination limitations:

1. Performance degrades with large offsets

Database query: SELECT * FROM users LIMIT 50 OFFSET 10000

Must scan 10,000 rows before returning 50

- offset=0: 15ms

- offset=5,000: 340ms

- offset=50,000: 4200ms

2. Inconsistent results during modifications

- Client requests page 1 (users 1-50)

- User 25 gets deleted

- Client requests page 2 (offset=50)

Receives rows 51-100, which are now users 52-101. User 51 shifted onto page 1 and is never seen by the client.

3. Duplicate results with insertions

- Client requests page 1 (users 1-50)

- New user inserted at position 10

- Client requests page 2 (offset=50)

Receives rows 51-100, which are now users 50-99. User 50 appears on both pages.

Cursor-based pagination solves these issues
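The skipped and duplicated rows described above can be reproduced with plain list slicing. The list of integer user IDs is a stand-in for a sorted table, and `9999` is an arbitrary ID for the newly inserted user.

```python
def fetch_page(rows, limit, offset):
    return rows[offset:offset + limit]

users = list(range(1, 201))       # user IDs 1..200 in sorted order
page1 = fetch_page(users, 50, 0)  # users 1-50

# Deletion between fetches: user 25 disappears, later rows shift up by one
after_delete = [u for u in users if u != 25]
page2_after_delete = fetch_page(after_delete, 50, 50)
skipped = 51 not in page1 and 51 not in page2_after_delete

# Insertion between fetches: a new row at position 10 shifts later rows down by one
after_insert = users[:9] + [9999] + users[9:]
page2_after_insert = fetch_page(after_insert, 50, 50)
duplicated = set(page1) & set(page2_after_insert)
```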

Pagination: Cursor-Based

Cursor encodes position in result set

Instead of numeric offset, use opaque cursor token

Initial request:

GET /users?limit=50

Response with cursor:

Next page request:

GET /users?limit=50&cursor=eyJ1c2VyX2lkIjo1MH0=

Cursor is base64-encoded JSON: {"user_id":50}

Database query using cursor:

No OFFSET clause - uses indexed WHERE condition
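A runnable sketch of the cursor round trip, using an in-memory SQLite table as a stand-in for the real database. The table layout and helper names are assumptions; the cursor is compact JSON encoded with base64, matching the token shown above.

```python
import base64
import json
import sqlite3

def encode_cursor(position: dict) -> str:
    raw = json.dumps(position, separators=(",", ":")).encode()
    return base64.urlsafe_b64encode(raw).decode("ascii")

def decode_cursor(cursor: str) -> dict:
    return json.loads(base64.urlsafe_b64decode(cursor))

def fetch_users(conn, limit=50, cursor=None):
    last_id = decode_cursor(cursor)["user_id"] if cursor else 0
    # Keyset query: the primary-key index locates the start row directly
    rows = conn.execute(
        "SELECT user_id, email FROM users WHERE user_id > ? ORDER BY user_id LIMIT ?",
        (last_id, limit),
    ).fetchall()
    # A full page suggests more rows may follow; a short page is the last one
    next_cursor = encode_cursor({"user_id": rows[-1][0]}) if len(rows) == limit else None
    return rows, next_cursor

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (user_id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [(i, f"user{i}@example.com") for i in range(1, 121)],
)

page1, cursor1 = fetch_users(conn)
conn.execute("DELETE FROM users WHERE user_id = 25")  # change between fetches
page2, cursor2 = fetch_users(conn, cursor=cursor1)
page3, cursor3 = fetch_users(conn, cursor=cursor2)
```

Deleting user 25 after the first fetch does not shift page 2, because the second query starts from `user_id > 50` rather than from a row count.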

Cursor for different sort orders:

Sort by created_at descending:

Decoded: {"created_at": "2025-01-15T10:30:00Z", "user_id": 50}

Include user_id for tie-breaking when timestamps equal

Cursor pagination advantages:

1. Consistent performance

Direct index lookup, no scanning:

- Page 1: 15ms

- Page 100: 15ms

- Page 1000: 15ms (constant time)

2. Stable results during modifications

Client requests page 1 with cursor User 25 gets deleted Client requests page 2 using cursor

Cursor points to user_id > 50, deletion of user 25 doesn’t affect next page

3. No duplicate results from insertions

Cursor maintains position relative to sorted order, new insertions don’t cause duplicates

Cursor pagination limitations:

Cannot jump to arbitrary page

No “go to page 50” - must traverse sequentially

Cannot display total page count

Computing total requires full count query (expensive)

Cursor must be opaque to client

// Bad: Exposing internal structure

GET /users?after_id=50

// Good: Opaque cursor

GET /users?cursor=eyJ1c2VyX2lkIjo1MH0=

Allows server to change cursor format without breaking clients

When to use each approach:

Offset pagination:

- Need page numbers (UI with page selector)

- Need total count

- Data rarely changes

- Small to medium collections

Cursor pagination:

- Large collections (millions of rows)

- Data frequently updated

- Mobile apps (efficient, consistent)

- Infinite scroll UX

Many APIs support both: limit/offset for random access, limit/cursor for efficient traversal

Authentication Proves Identity

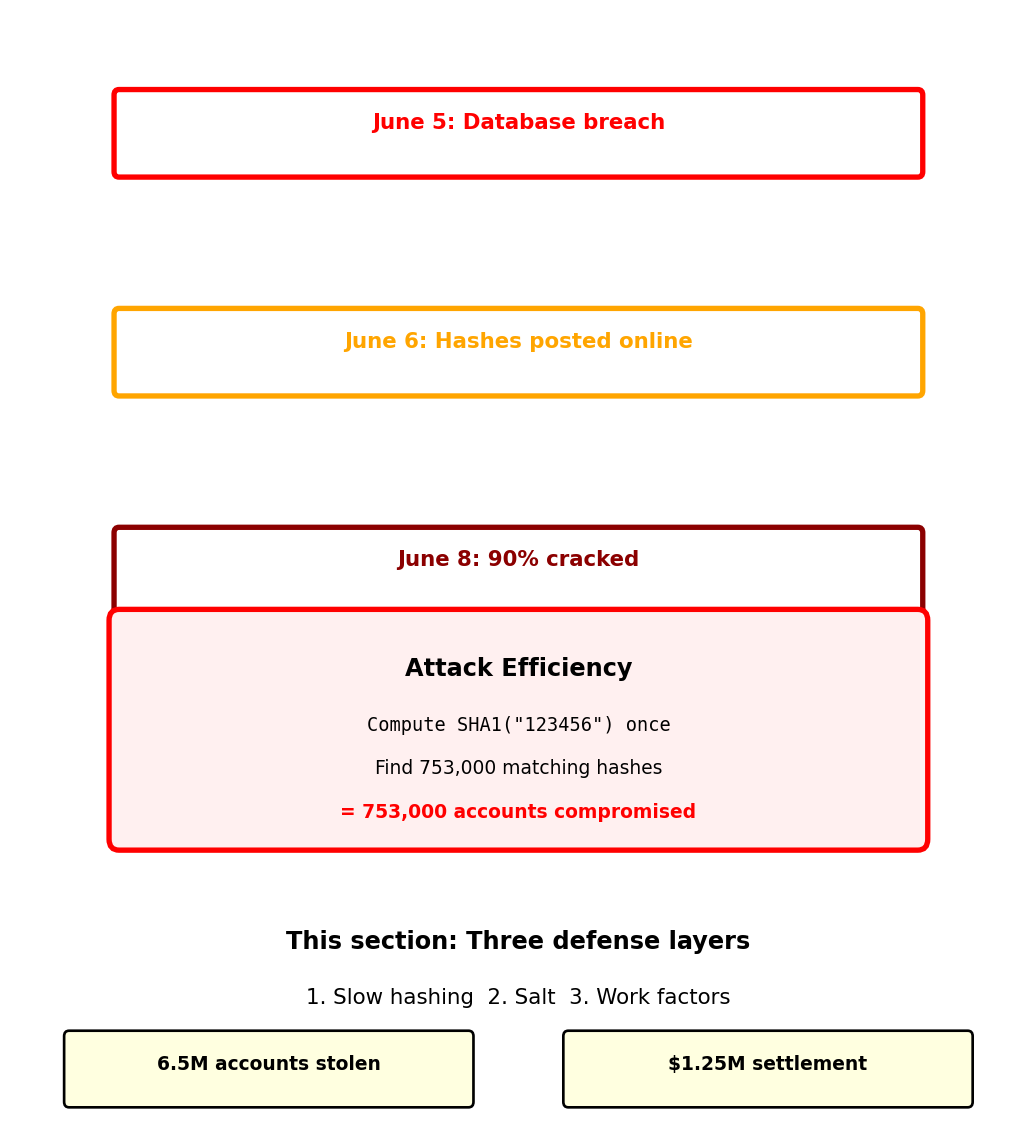

Authentication Breach: LinkedIn 2012

June 2012: 6.5 million LinkedIn password hashes stolen

What LinkedIn did:

What attackers did:

- Built password dictionary (10 million common passwords)

- Computed hashes once:

- Searched stolen database:

Reported result: ~90% of passwords cracked within 72 hours

Why it failed:

- SHA-1 designed for speed: GPU computes 10 billion hashes/second

- No salt: Same password → same hash

- One computation → thousands of accounts compromised

Same password “123456” compromised 753,000 accounts simultaneously

Identity in Distributed Systems

LinkedIn’s breach shows authentication failures cascade across systems

ML API requires authentication to prevent unauthorized access:

Every API request needs to answer two questions:

- Who is making this request? (Authentication)

- Can they perform this action? (Authorization)

In a single process, identity is implicit:

In distributed systems, identity must be explicit:

HTTP is stateless - no memory between requests:

- No persistent connection to maintain identity

- Each request independent

- Must prove identity every time

Three approaches to maintaining identity across requests:

- Include credentials every request (HTTP Basic Auth)

- Create server-side session (Cookie-based)

- Issue cryptographic proof (Token-based)

Each approach makes different trade-offs between security, scalability, and complexity.

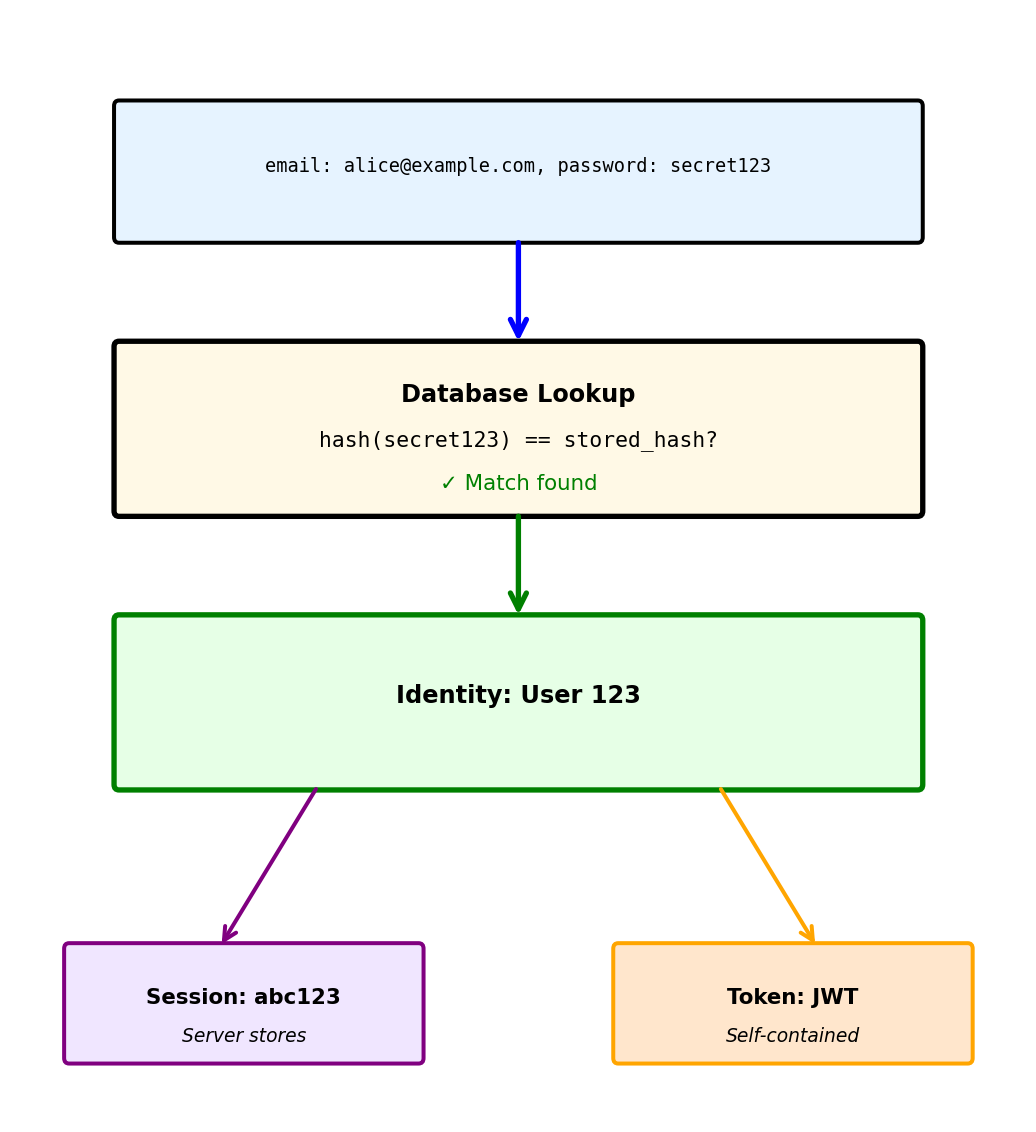

Password Authentication: Converting Secrets to Identity

Authentication transforms a secret into verified identity

Step 1: User provides credentials

Step 2: Server verifies against stored credentials

Step 3: Server issues proof of authentication

Password storage determines breach impact:

Never store plaintext passwords:

Store cryptographic hashes instead:

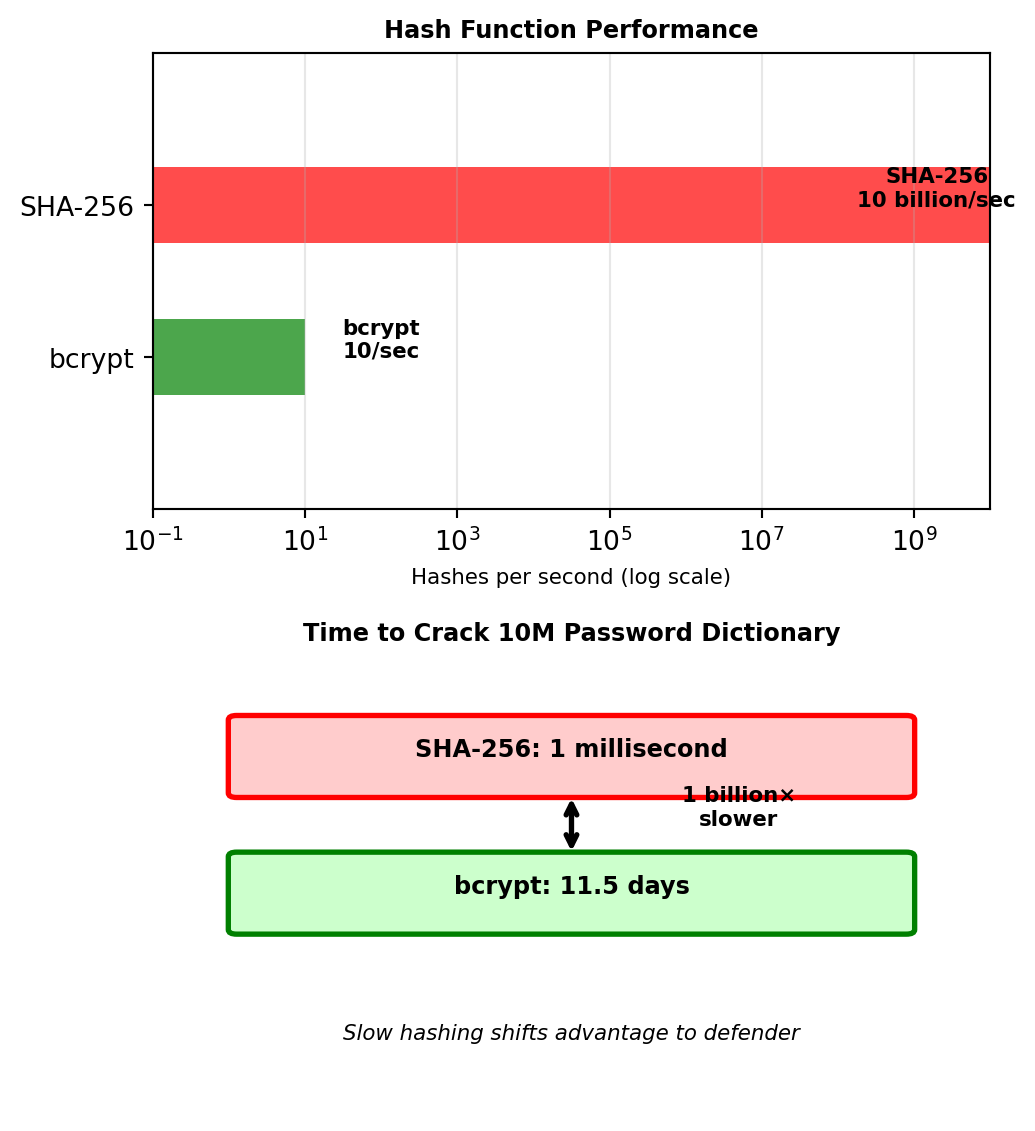

Hash Functions: Time as a Defense Mechanism

LinkedIn used SHA-1 hashing - why wasn’t that enough?

First, understand why plaintext is catastrophic:

Database breach with plaintext passwords:

All accounts immediately compromised.

Hash functions provide one-way transformation:

Cannot reverse: hash → original password (computationally infeasible)

Asymmetry favors attackers:

Legitimate use: Verify one password for one user

Attack: Try millions of passwords against all users

Solution: Make hashing deliberately slow

- SHA-1 (LinkedIn’s mistake): Designed for speed → 10 billion/second on GPU

- bcrypt: Designed for passwords → 10/second on GPU

- Time difference: 1 billion× slower

This is why LinkedIn’s passwords fell in 72 hours - SHA-1 allowed rapid dictionary attacks.

Slow hashing reverses the asymmetry: a defender pays for one hash per login, while an attacker pays for every guess against every user.

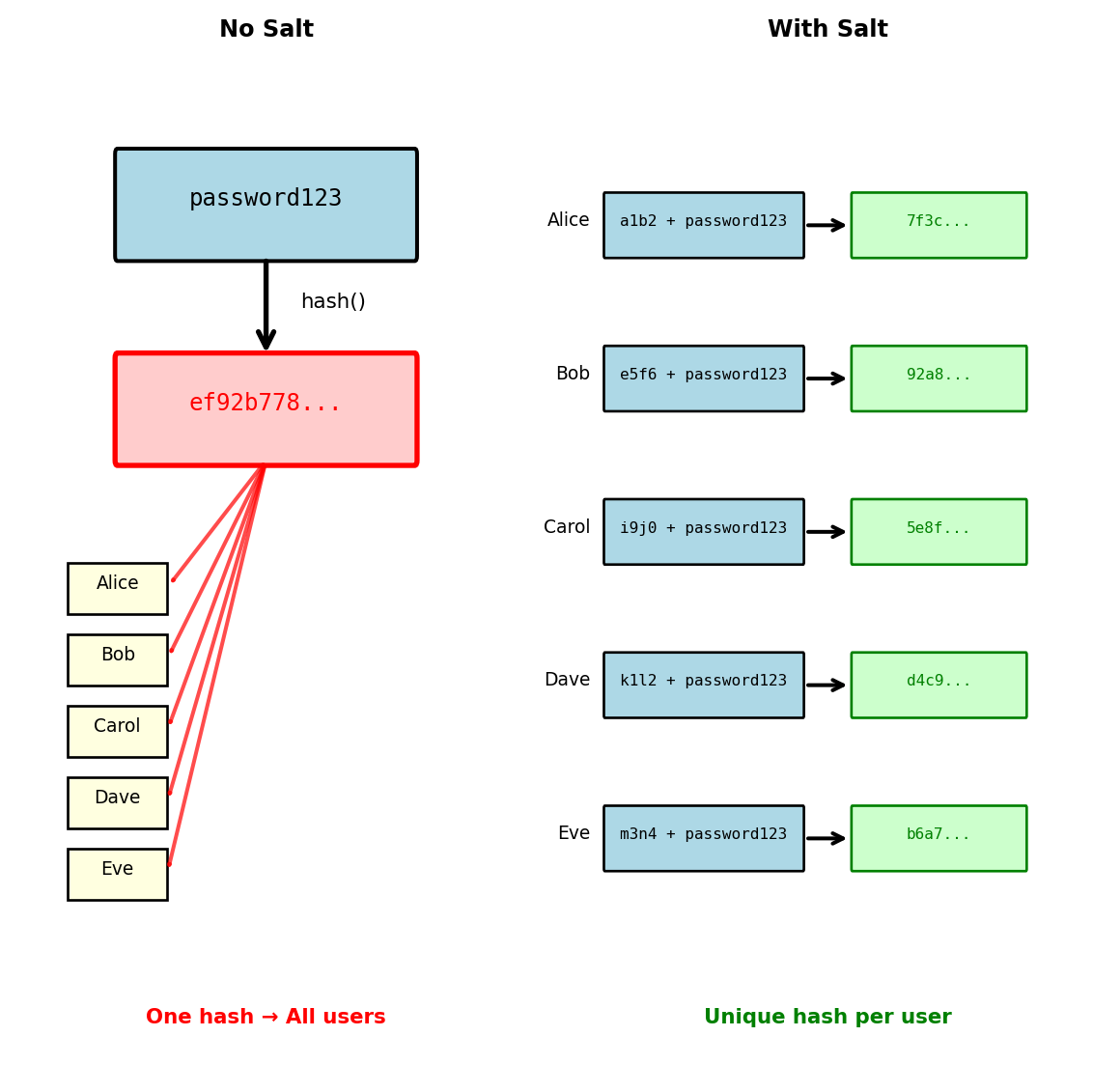

Salt: Preventing Parallel Attacks

LinkedIn’s second mistake: No salt

Even with slow hashing, common passwords create identical hashes:

Without salt, all users with “password123” have same hash:

Salt: Random value unique to each user

Now identical passwords produce different hashes:

Impact on attack strategy:

Without salt: One computation compromises all instances

With salt: Must attack each user individually

Salt is not secret - stored with hash, prevents mass attacks not targeted ones

With salt, LinkedIn’s 753,000 “123456” users would each need individual attacks
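The effect of a per-user salt can be shown with the standard library. This sketch uses PBKDF2-HMAC-SHA256 from `hashlib` as a stand-in for bcrypt, which is a third-party package; the function names and the iteration count are assumptions.

```python
import hashlib
import hmac
import os

def unsalted_sha1(password: str) -> str:
    # Fast and unsalted: identical passwords always give identical hashes
    return hashlib.sha1(password.encode()).hexdigest()

def hash_password(password: str, iterations: int = 100_000):
    salt = os.urandom(16)  # random, unique per user, stored next to the hash
    digest = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)
    return salt, digest

def verify_password(password, salt, expected, iterations=100_000):
    candidate = hashlib.pbkdf2_hmac("sha256", password.encode(), salt, iterations)
    return hmac.compare_digest(candidate, expected)

# Two users choose the same password
same_unsalted = unsalted_sha1("password123") == unsalted_sha1("password123")
salt_a, hash_a = hash_password("password123")
salt_b, hash_b = hash_password("password123")
```

Without salt, one precomputed hash matches both users. With salt, the stored hashes differ, so each account has to be attacked separately, while verification still works because the salt is stored alongside the hash.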

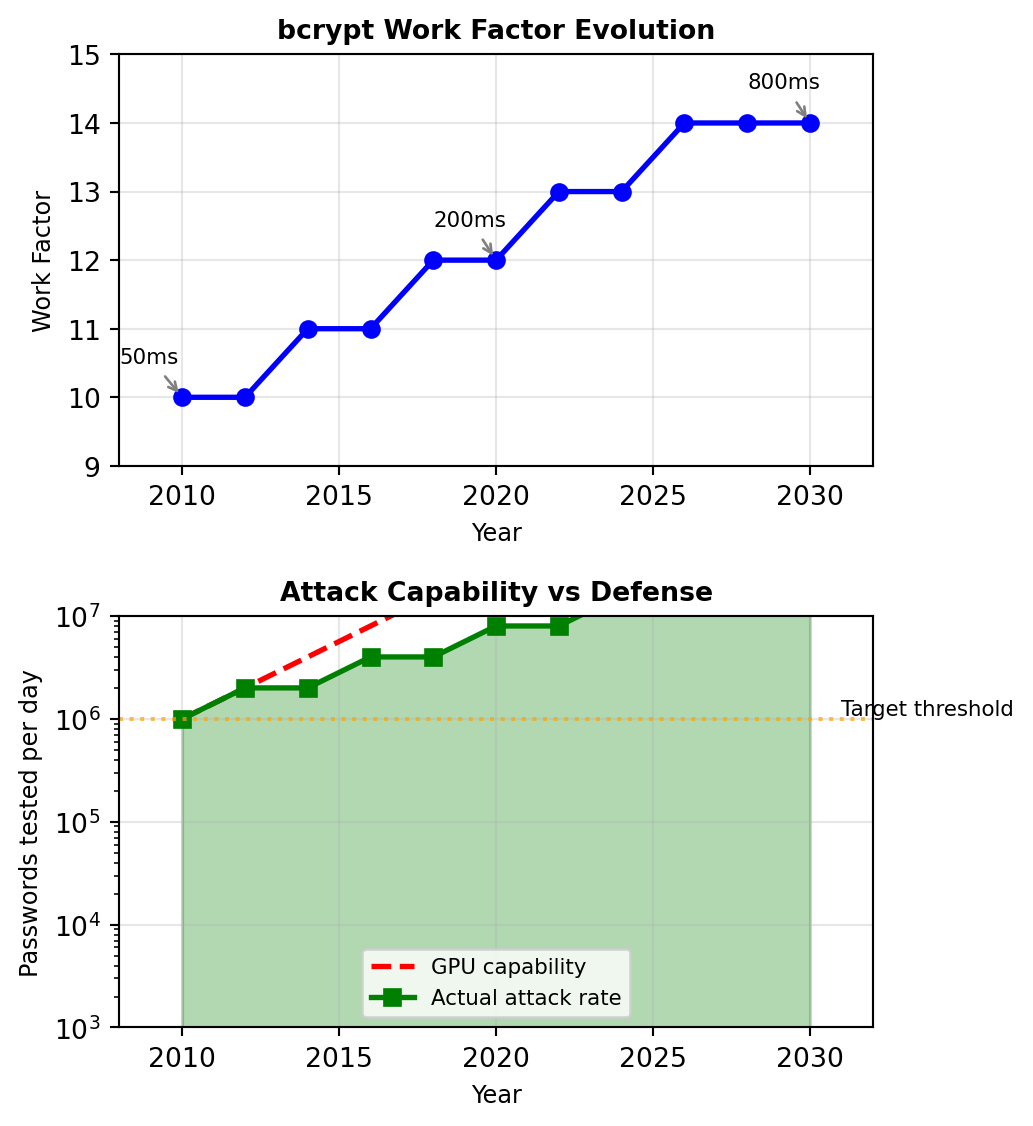

Work Factors: Adaptive Security

Combining defenses: Slow hashing + Salt + Adaptive work factor

bcrypt’s configurable work factor scales with hardware improvements:

Each increment doubles computation time:

| Factor | Iterations | Time/Hash | Passwords/Day |

|---|---|---|---|

| 10 | 1,024 | 50ms | 1.7M |

| 11 | 2,048 | 100ms | 864K |

| 12 | 4,096 | 200ms | 432K |

| 13 | 8,192 | 400ms | 216K |

| 14 | 16,384 | 800ms | 108K |

Balancing security and usability:

import time

import bcrypt

def choose_work_factor():

# Target: 250ms computation time

test_password = b"benchmark"

for factor in range(10, 15):

start = time.time()

bcrypt.hashpw(test_password, bcrypt.gensalt(factor))

duration = time.time() - start

if duration > 0.250: # 250ms target

return factor

return 14 # Maximum reasonable factor

Moore’s Law compensation:

- Computing power doubles every 2 years

- Increase work factor by 1 every 2 years

- Maintains constant security margin

Security parameter improves over time without code changes

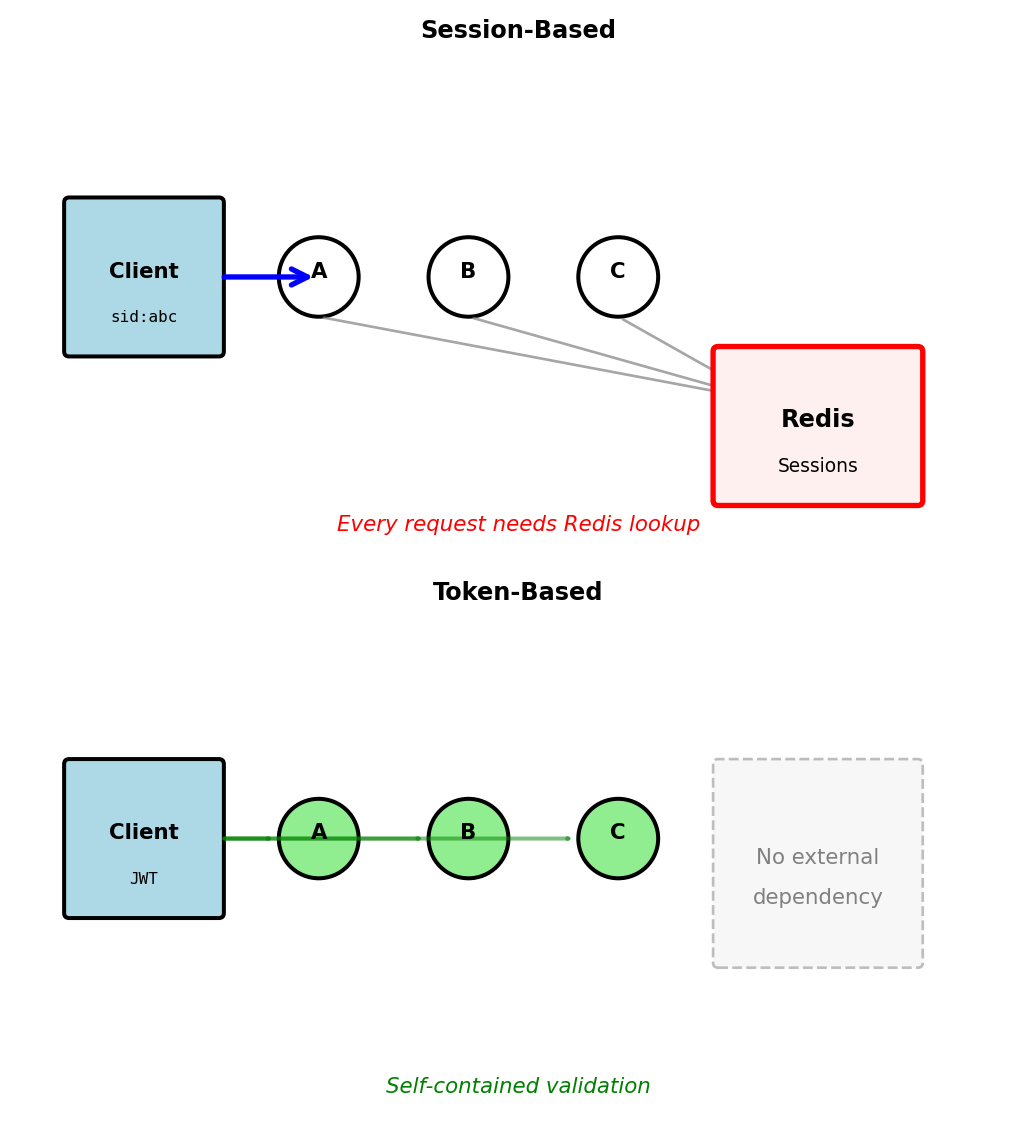

Sessions vs Tokens: State Management Trade-offs

Server sessions: Centralized state

# Login creates session in shared store

session_id = generate_uuid()

redis.set(f"session:{session_id}", {

"user_id": 123,

"created": timestamp,

"permissions": ["read", "write"]

})

response.set_cookie("session_id", session_id)

# Every request requires lookup

def handle_request(request):

session_id = request.cookies.get("session_id")

session = redis.get(f"session:{session_id}") # Network call

if not session:

return 401

Tokens: Distributed state

# Login creates self-contained token

payload = {

"user_id": 123,

"exp": timestamp + 3600,

"permissions": ["read", "write"]

}

token = jwt.encode(payload, SECRET_KEY)

return {"token": token}

# Every request validates locally

def handle_request(request):

token = request.headers["Authorization"].split(" ")[1]

payload = jwt.decode(token, SECRET_KEY, algorithms=["HS256"]) # CPU only

# No network call required

Trade-offs in practice:

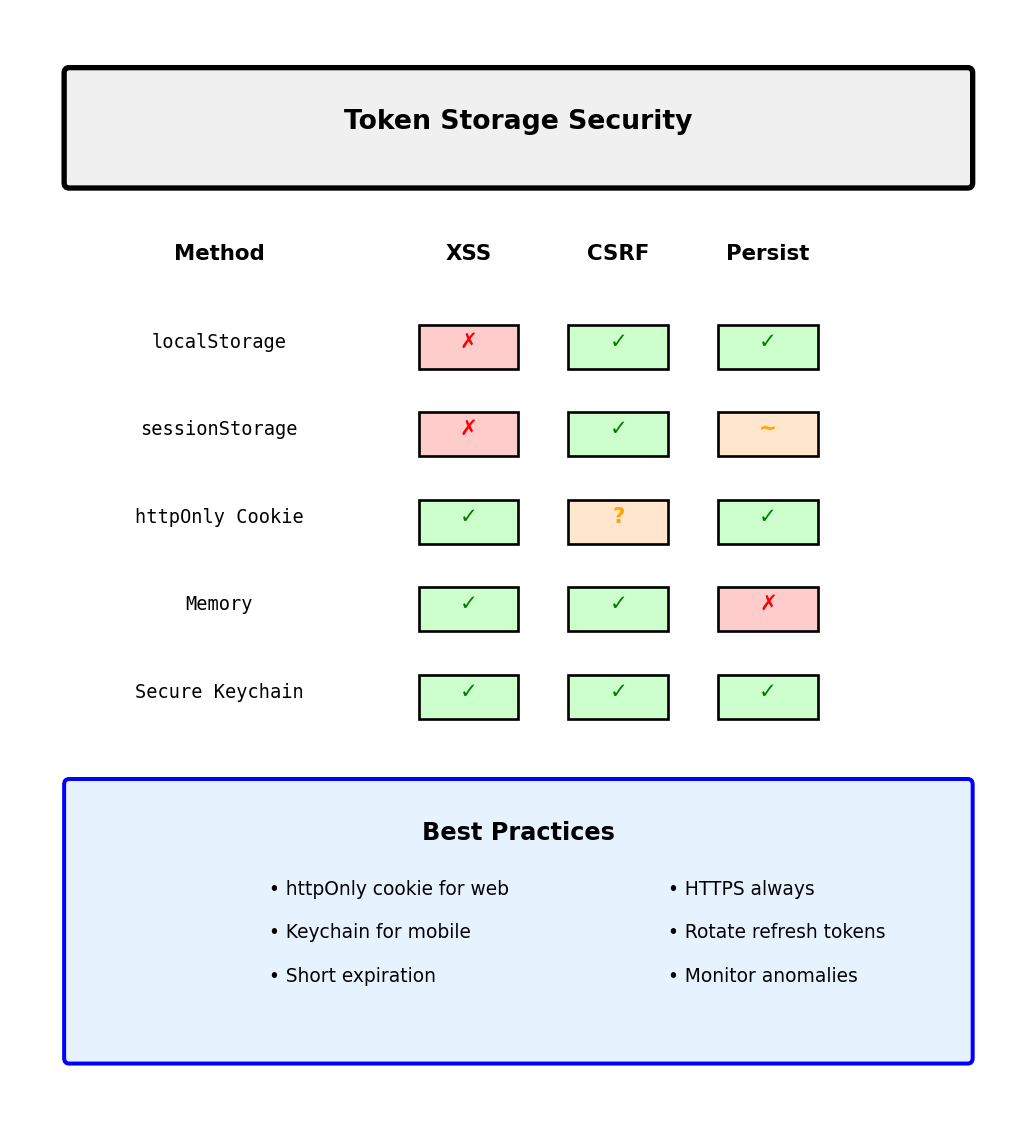

| Aspect | Sessions | Tokens |

|---|---|---|

| Revocation | Immediate | At expiration |

| Scaling | Requires shared store | Linear |

| Network calls | Every request | None |

| State size | Server: O(users) | Server: O(1) |

| Client complexity | Simple cookie | Header management |

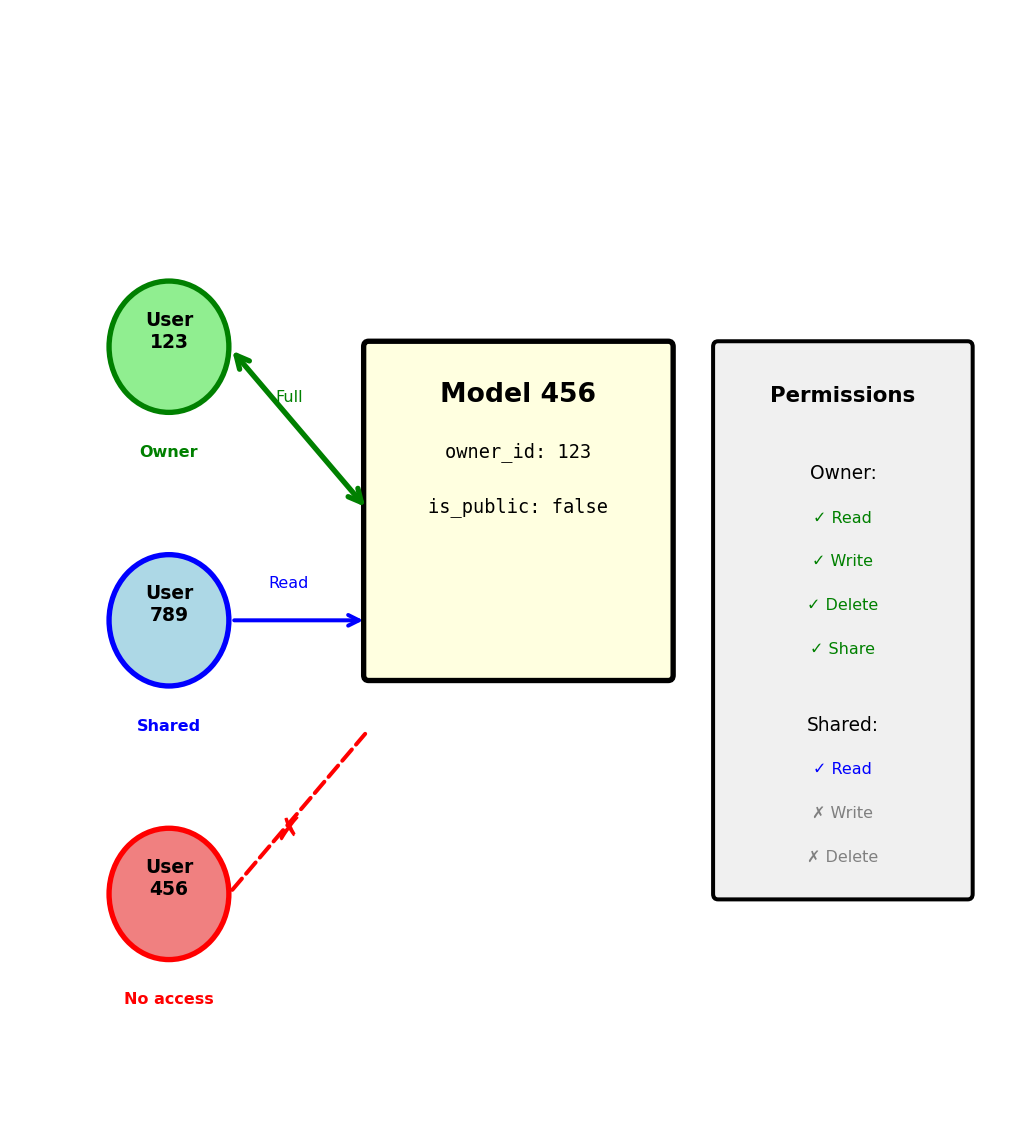

Authorization: From Identity to Permissions

Authentication establishes identity; authorization determines capabilities

def process_request(request):

# Step 1: Who are you? (Authentication)

user_id = validate_token(request.headers['Authorization'])

if not user_id:

return 401 # Unauthorized - don't know who you are

# Step 2: What can you do? (Authorization)

resource = request.path # e.g., /models/123

action = request.method # e.g., DELETE

if not has_permission(user_id, resource, action):

return 403 # Forbidden - know who you are, can't do this

# Step 3: Execute

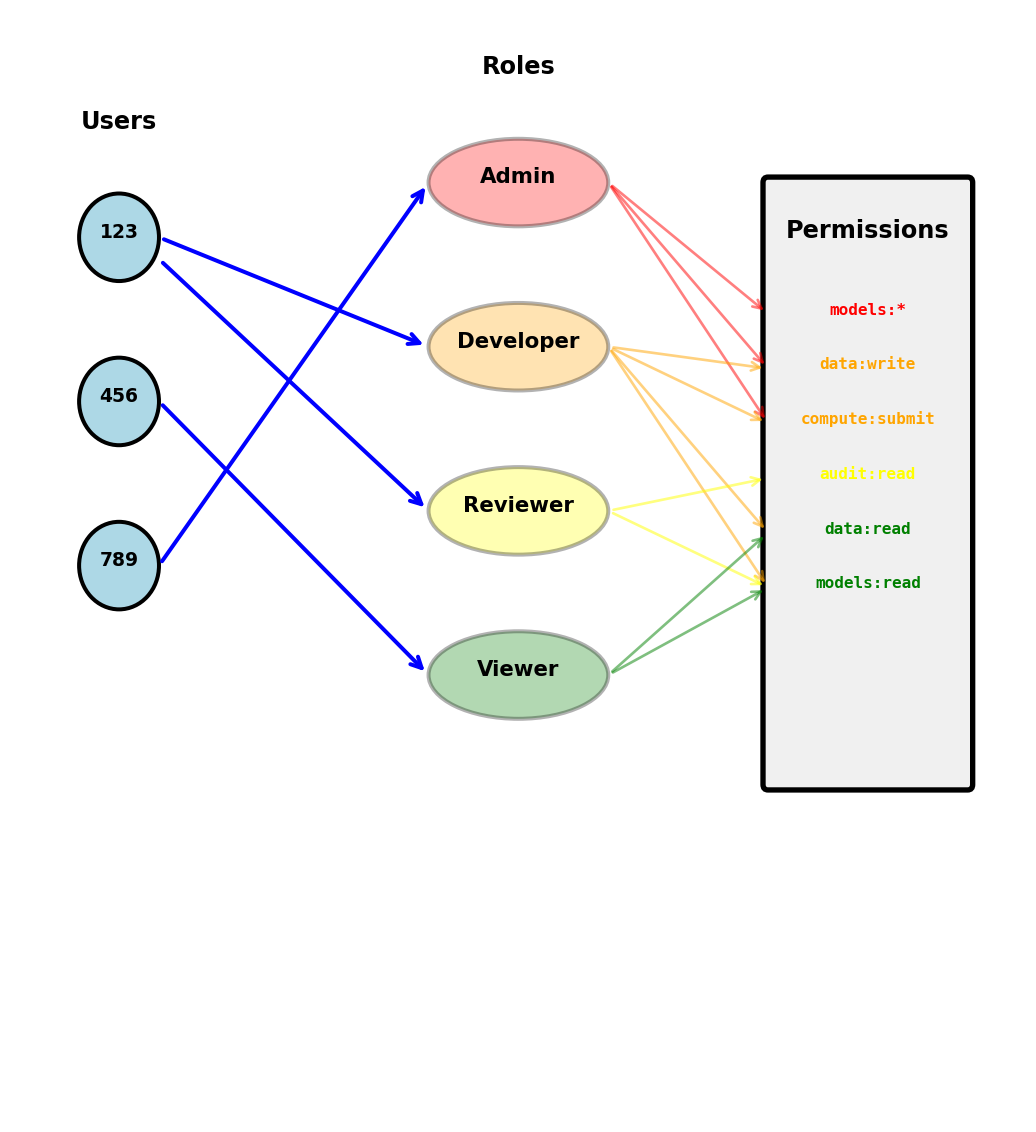

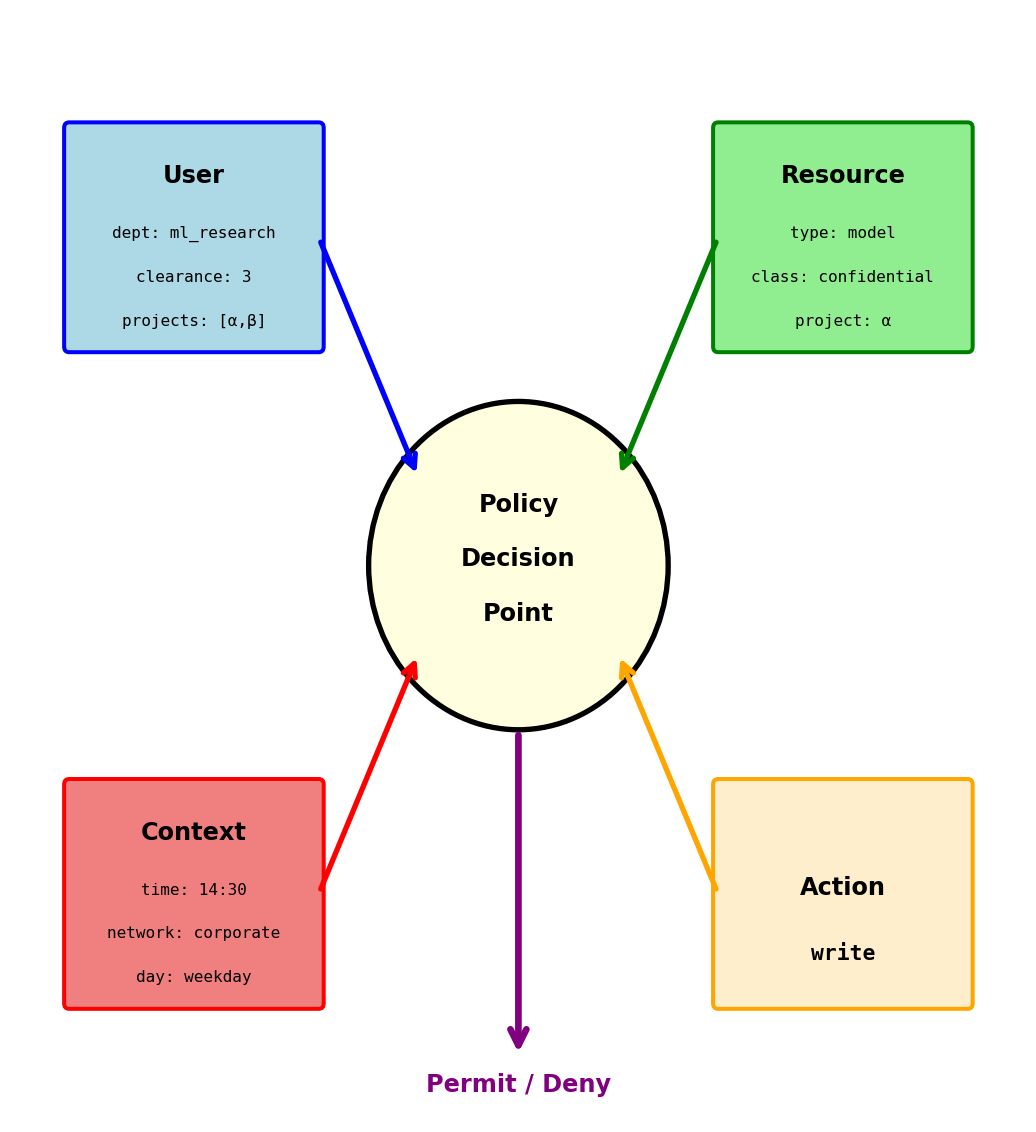

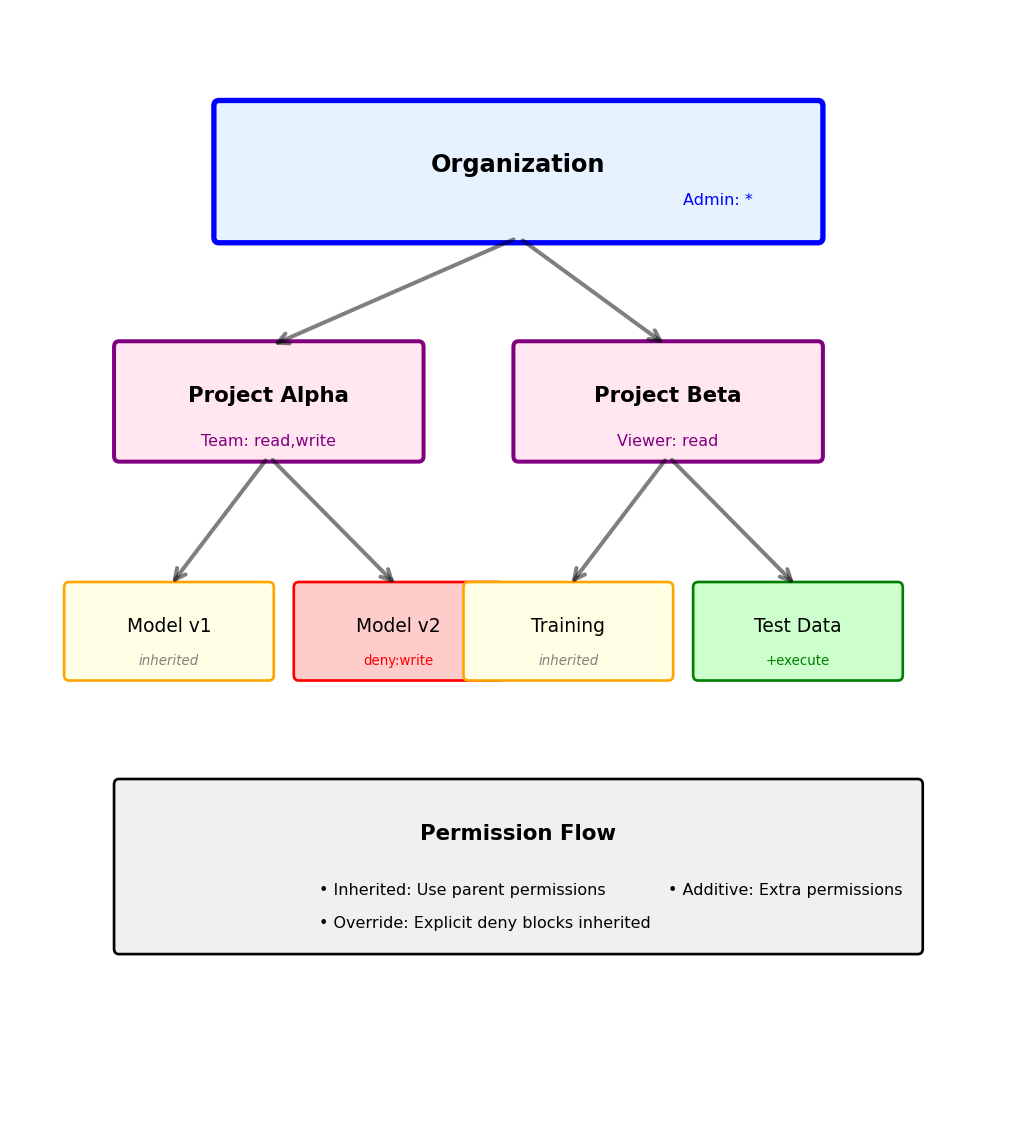

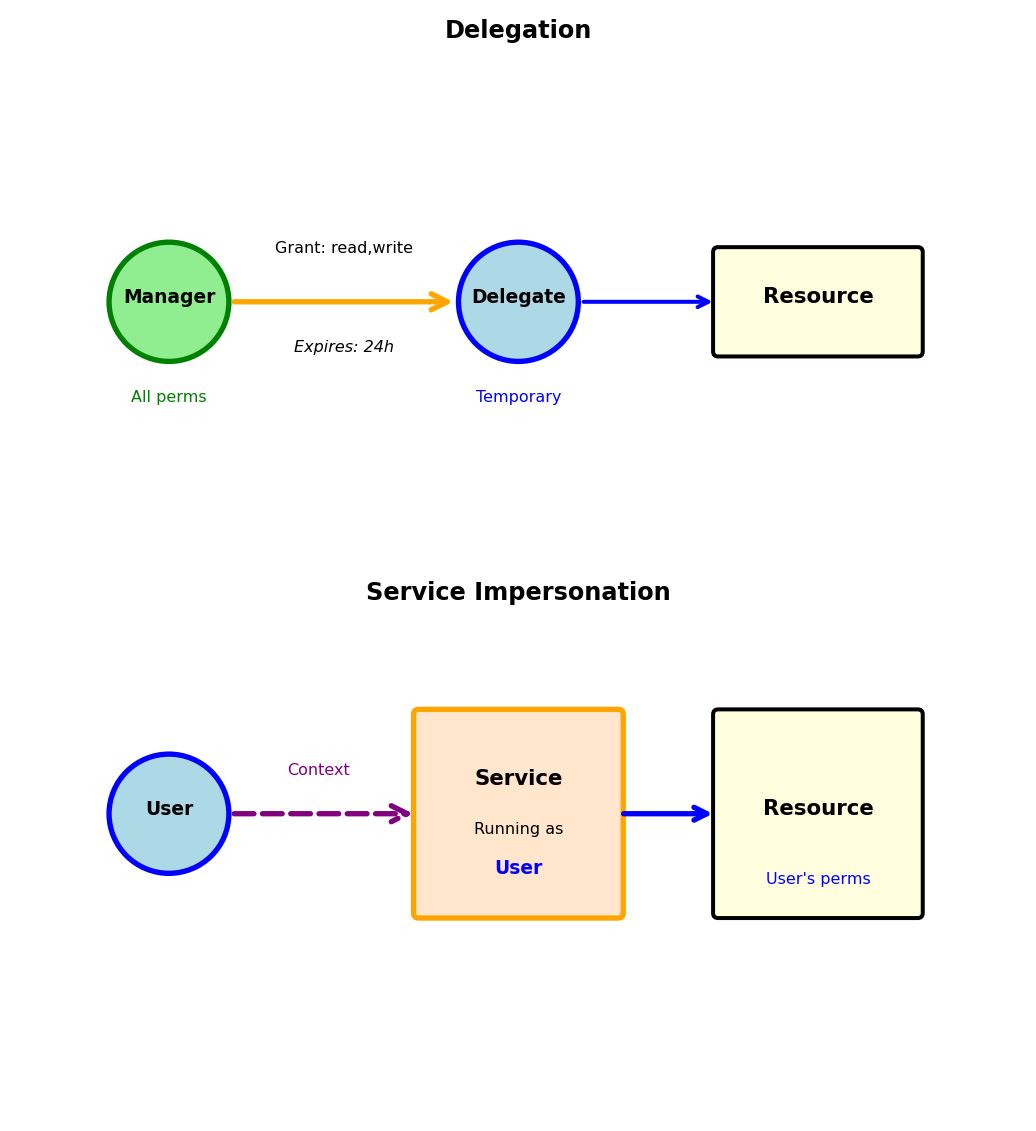

return perform_action(resource, action)Three authorization models:

1. Role-Based (RBAC): Users have roles, roles have permissions

2. Attribute-Based (ABAC): Decisions based on attributes

3. Resource-Based: Users own resources
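The three models can be compared side by side in a small sketch. Everything named here (`ROLE_PERMISSIONS`, `User`, `Resource`, the `department` attribute, and the way `has_permission` combines the checks) is a hypothetical illustration, not the course's implementation.

```python
from dataclasses import dataclass, field

# Hypothetical role table: role name -> HTTP methods that role may use.
ROLE_PERMISSIONS = {
    "viewer": {"GET"},
    "editor": {"GET", "POST", "PUT"},
    "admin": {"GET", "POST", "PUT", "DELETE"},
}


@dataclass
class User:
    user_id: int
    roles: set = field(default_factory=set)
    department: str = ""


@dataclass
class Resource:
    path: str
    owner_id: int
    department: str = ""


def rbac_allows(user: User, action: str) -> bool:
    """Role-based: allowed if any of the user's roles grants the action."""
    return any(action in ROLE_PERMISSIONS.get(role, set()) for role in user.roles)


def abac_allows(user: User, resource: Resource, action: str) -> bool:
    """Attribute-based: here, same-department users may read (illustrative rule)."""
    return action == "GET" and user.department != "" and user.department == resource.department


def owner_allows(user: User, resource: Resource) -> bool:
    """Resource-based: the owner may act on their own resource."""
    return resource.owner_id == user.user_id


def has_permission(user: User, resource: Resource, action: str) -> bool:
    """Grant access if any model allows it (one possible combination policy)."""
    return (
        rbac_allows(user, action)
        or abac_allows(user, resource, action)
        or owner_allows(user, resource)
    )
```

Real systems often pick one model or combine them with deny-overrides rules; the `or` combination here is only the simplest choice.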

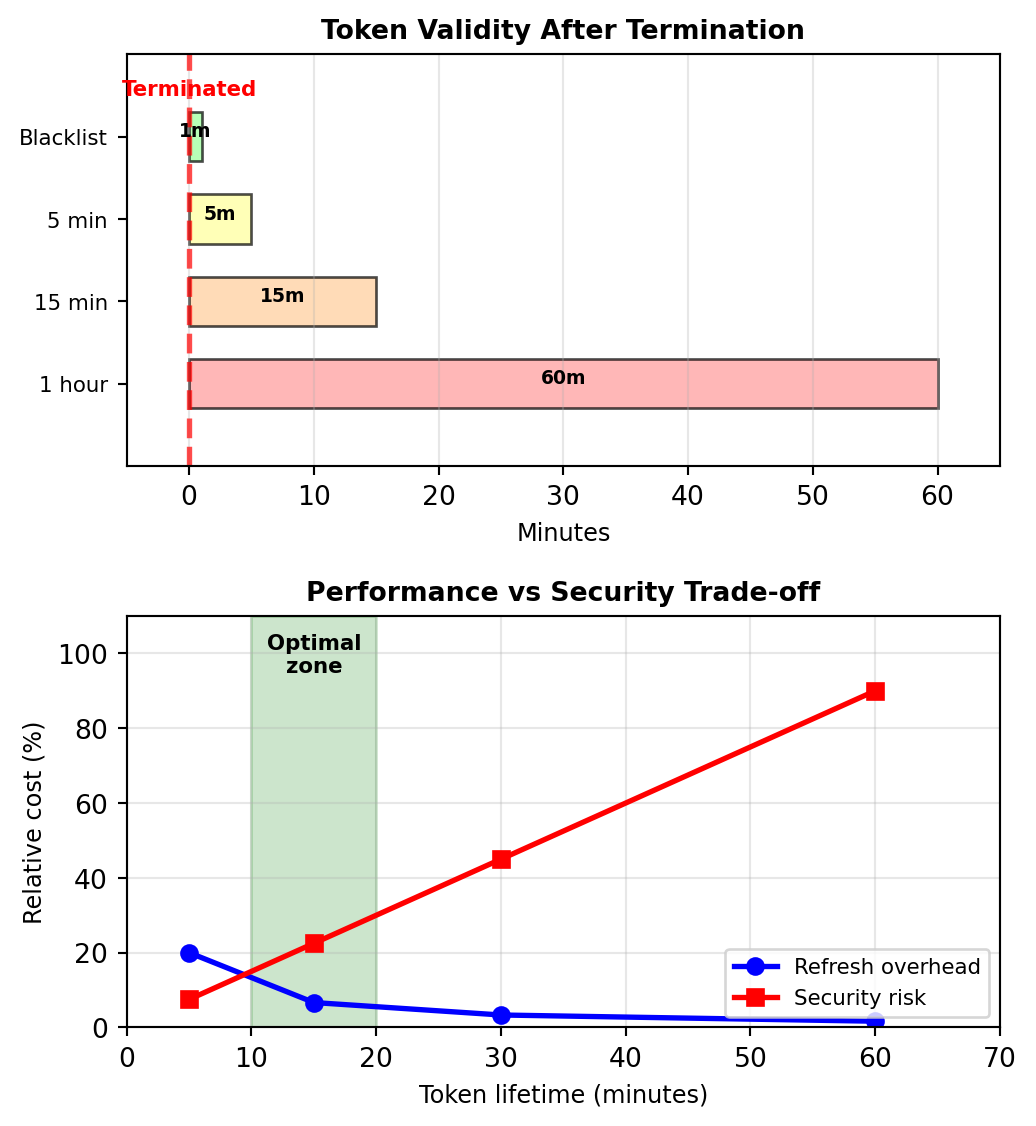

Token Expiration and Revocation Trade-offs

Tokens can’t be recalled: once issued, a JWT remains valid until it expires.

Employee terminated at 2:00 PM:

- Token issued: 1:30 PM

- Token expires: 2:30 PM

- Problem: 30 minutes of unauthorized access

Three approaches to bounded revocation:

1. Short-lived access tokens (15 minutes)

2. Blacklist critical tokens

3. Version-based invalidation
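The three approaches can be sketched together. This is a minimal illustration under stated assumptions: the in-memory `USER_TOKEN_VERSION` and `REVOKED_TOKEN_IDS` stand in for a database and a shared denylist, and `ver` is a hypothetical custom claim name (`jti` and `exp` are registered JWT claims). Signature checking is omitted; the functions operate on already-decoded claims.

```python
import time

# In-memory stand-ins for a user table and a denylist store (assumptions).
USER_TOKEN_VERSION = {123: 1}   # user_id -> current token version
REVOKED_TOKEN_IDS = set()       # "jti" values of individually revoked tokens

ACCESS_TOKEN_TTL = 15 * 60      # Approach 1: short-lived access tokens


def issue_claims(user_id, token_id, now=None):
    """Build claims that embed the user's current token version."""
    now = time.time() if now is None else now
    return {
        "user_id": user_id,
        "jti": token_id,
        "ver": USER_TOKEN_VERSION[user_id],
        "exp": now + ACCESS_TOKEN_TTL,
    }


def is_token_active(claims, now=None):
    """Apply all three checks to already-decoded claims."""
    now = time.time() if now is None else now
    if now >= claims["exp"]:                 # 1. expired
        return False
    if claims["jti"] in REVOKED_TOKEN_IDS:   # 2. blacklisted
        return False
    # 3. issued before the user's version was bumped
    return claims["ver"] == USER_TOKEN_VERSION.get(claims["user_id"])


def revoke_token(token_id):
    """Approach 2: blacklist one specific token."""
    REVOKED_TOKEN_IDS.add(token_id)


def revoke_all_tokens(user_id):
    """Approach 3: bump the version so every older token stops matching."""
    USER_TOKEN_VERSION[user_id] += 1
```

Approaches 2 and 3 reintroduce a lookup on each request, so they trade some of the stateless benefit for faster revocation.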

Stateless Scaling: The Operational Advantage

Session-based scaling requires coordination

Adding servers with sessions:

Measured impact with 1000 requests/second:

- Sticky sessions: Imbalanced load (Server 1: 89%, Server 2: 11%)

- Redis sessions: 2ms added latency per request

- Redis failure: All users logged out

Token-based scaling is trivial

Adding servers with tokens:

Measured impact with 1000 requests/second:

- Round-robin load balancing: Even distribution (25% each for 4 servers)

- Token validation: 0.1ms CPU time

- Server failure: Requests rerouted, no user impact

Deployment advantages:

| Operation | Sessions | Tokens |
|---|---|---|
| Add server | Update session store | Add server |
| Remove server | Migrate sessions | Remove server |
| Deploy update | Coordinate session drain | Rolling update |
| Region failover | Replicate sessions | No change |

Cost at scale (10K concurrent users):

- Sessions: Redis cluster ($200/month) + Complexity

- Tokens: No infrastructure + 0.1% CPU overhead

Stateless tokens eliminate the shared session store — scaling adds servers, not infrastructure.

JWT and OAuth Enable Token-Based Access

JWT: Self-Contained Identity Tokens

JSON Web Tokens encode identity without server state

JWT structure: Three Base64URL-encoded parts separated by dots

    eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9.
    eyJ1c2VyX2lkIjoxMjMsImVtYWlsIjoiYWxpY2VAZXhhbXBsZS5jb20iLCJleHAiOjE3MDUzMjQ4MDB9.
    SflKxwRJSMeKKF2QT4fwpMeJf36POk6yJV_adQssw5c

Part 1: Header (Algorithm and type)

Part 2: Payload (Claims about user)

Part 3: Signature (Prevents tampering)

    HMACSHA256(
        base64url(header) + "." + base64url(payload),
        server_secret_key
    )

Critical properties:

- Self-contained: All data in token, no database lookup

- Tamper-proof: Invalid signature = rejected token

- Stateless: Server only needs secret key

- Not encrypted: Payload readable by anyone (Base64 decode)
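The "not encrypted" property is easy to demonstrate: the header and payload of the sample token above decode with nothing but the standard library and no key. This sketch assumes only that the segments are URL-safe Base64 with padding stripped, as JWTs use; the helper names are illustrative.

```python
import base64
import json

# Sample token from the slide (the signature segment is not checked here).
SAMPLE_TOKEN = (
    "eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9."
    "eyJ1c2VyX2lkIjoxMjMsImVtYWlsIjoiYWxpY2VAZXhhbXBsZS5jb20iLCJleHAiOjE3MDUzMjQ4MDB9."
    "SflKxwRJSMeKKF2QT4fwpMeJf36POk6yJV_adQssw5c"
)


def b64url_decode(segment: str) -> bytes:
    """Decode URL-safe Base64, restoring the padding that JWTs strip."""
    padding = "=" * (-len(segment) % 4)
    return base64.urlsafe_b64decode(segment + padding)


def read_without_key(token: str):
    """Return (header, payload) as dicts. No secret is needed to read them."""
    header_b64, payload_b64, _signature = token.split(".")
    header = json.loads(b64url_decode(header_b64))
    payload = json.loads(b64url_decode(payload_b64))
    return header, payload


if __name__ == "__main__":
    header, payload = read_without_key(SAMPLE_TOKEN)
    print(header)
    print(payload)
```

Because anyone holding the token can do this, passwords and other secrets do not belong in the payload.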

JWT Validation: Cryptographic Trust

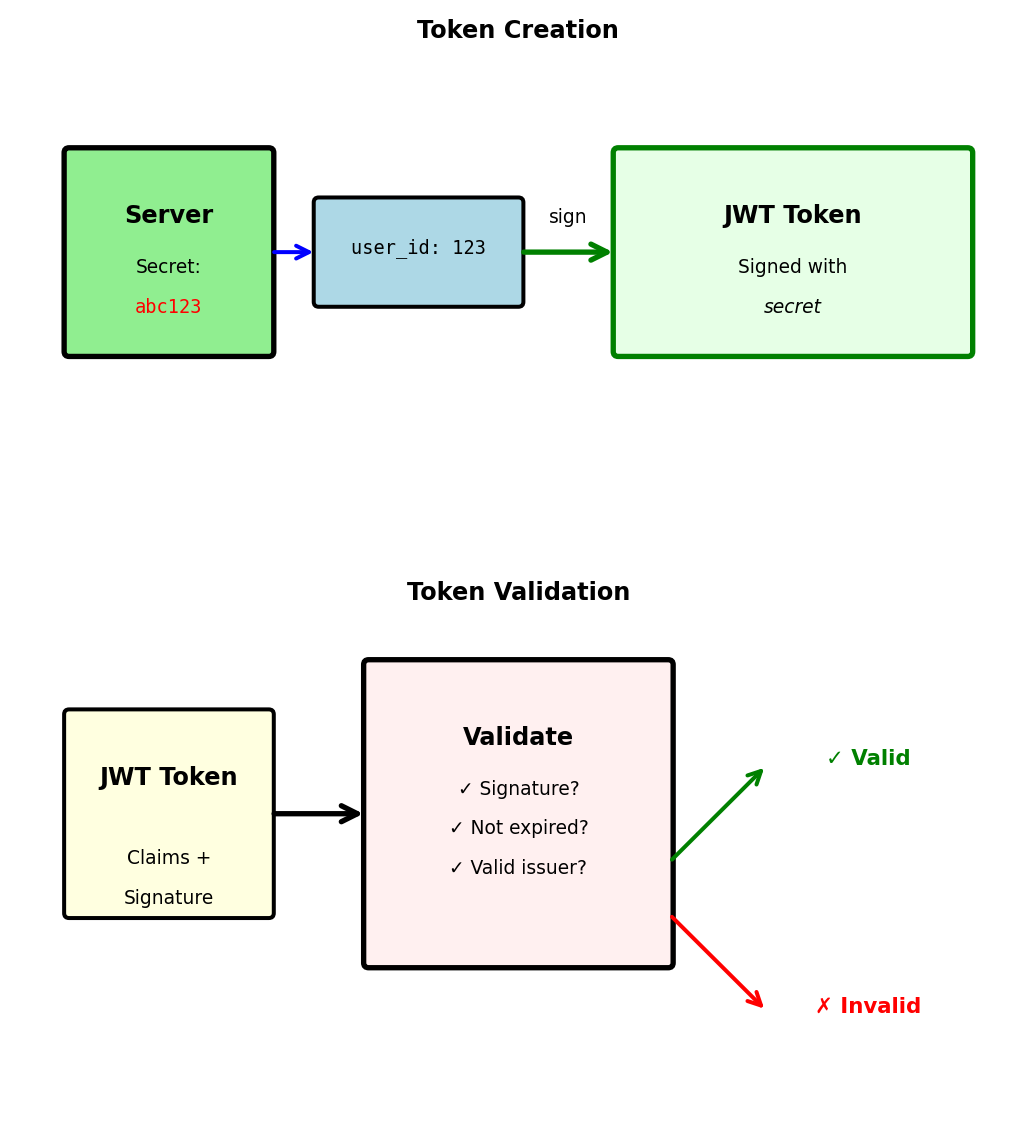

Signature prevents token forgery

Server creates token with secret:

Client cannot modify token:

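    import base64
    import json

    # Attacker tries to change user_id
    header, payload_b64, _ = token.split('.')
    padded = payload_b64 + '=' * (-len(payload_b64) % 4)
    decoded = json.loads(base64.urlsafe_b64decode(padded))
    decoded['user_id'] = 999  # Change to admin
    fake_payload = base64.urlsafe_b64encode(json.dumps(decoded).encode()).rstrip(b'=').decode()

    # But cannot generate valid signature without secret
    fake_token = header + "." + fake_payload + "." + random_signature
    # Server will reject: Invalid signature

Server validates with same secret: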

Symmetric (HS256) vs Asymmetric (RS256):

- HS256: Same secret for signing and verifying (simple, fast)

- RS256: Private key signs, public key verifies (allows third-party validation)

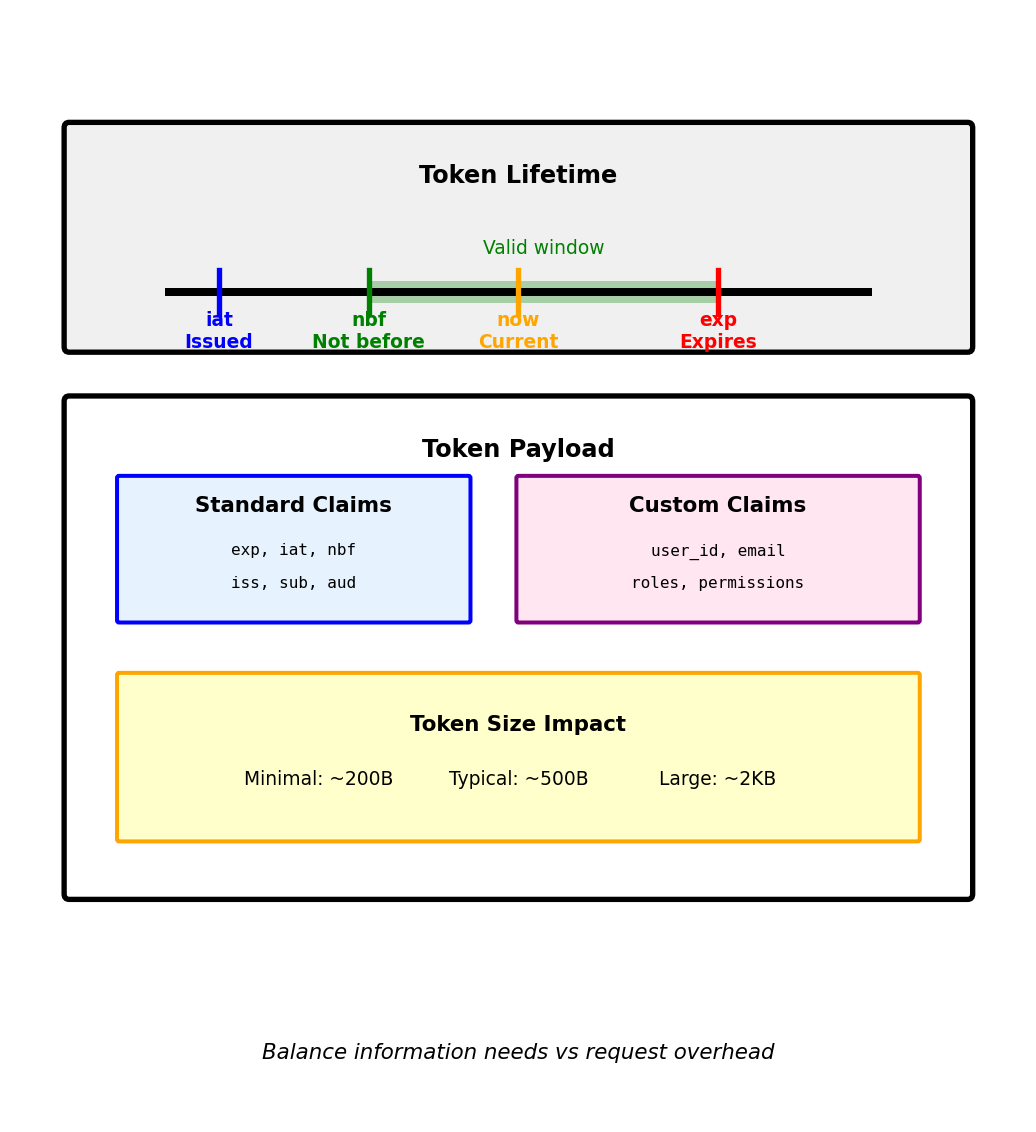

JWT Claims: Standard Fields and Custom Data

Standard claims provide common functionality

Registered claims (predefined meanings):

Time-based validation:

Custom claims for application data:

Token size considerations:

- Each claim adds bytes to every request

- HTTP header limit: ~8KB

- Typical JWT: 200-500 bytes

- With permissions: 500-2000 bytes
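The registered claims and time-based validation named above can be sketched as follows. The claim names (`iss`, `sub`, `aud`, `exp`, `nbf`, `iat`, `jti`) are the registered names from the JWT specification. The `validate_claims` function, its 30-second `leeway` default, and the example issuer and audience values are assumptions for illustration. Signature verification is assumed to have happened already.

```python
import time

# Registered claim names and their meanings.
REGISTERED_CLAIMS = {
    "iss": "issuer",
    "sub": "subject",
    "aud": "audience",
    "exp": "expiration time",
    "nbf": "not before",
    "iat": "issued at",
    "jti": "JWT ID",
}


class InvalidClaims(Exception):
    """Raised when a decoded payload fails a claim check."""


def validate_claims(claims, issuer, audience, now=None, leeway=30):
    """Check issuer, audience, and time window; return claims if all pass."""
    now = time.time() if now is None else now
    if claims.get("iss") != issuer:
        raise InvalidClaims("unexpected issuer")
    aud = claims.get("aud")
    audiences = aud if isinstance(aud, list) else [aud]
    if audience not in audiences:
        raise InvalidClaims("token not intended for this audience")
    # leeway tolerates small clock differences between servers
    if "exp" not in claims or now >= claims["exp"] + leeway:
        raise InvalidClaims("token expired")
    if "nbf" in claims and now < claims["nbf"] - leeway:
        raise InvalidClaims("token not yet valid")
    return claims


if __name__ == "__main__":
    example = {
        "iss": "https://auth.example.com",  # hypothetical issuer
        "sub": "123",
        "aud": "api",
        "iat": 1000, "nbf": 1000, "exp": 1900,
        "permissions": ["read", "write"],    # custom claim
    }
    print(validate_claims(example, "https://auth.example.com", "api", now=1500))
```

Custom claims such as `permissions` ride along unchanged; each one adds to the token size sent with every request.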

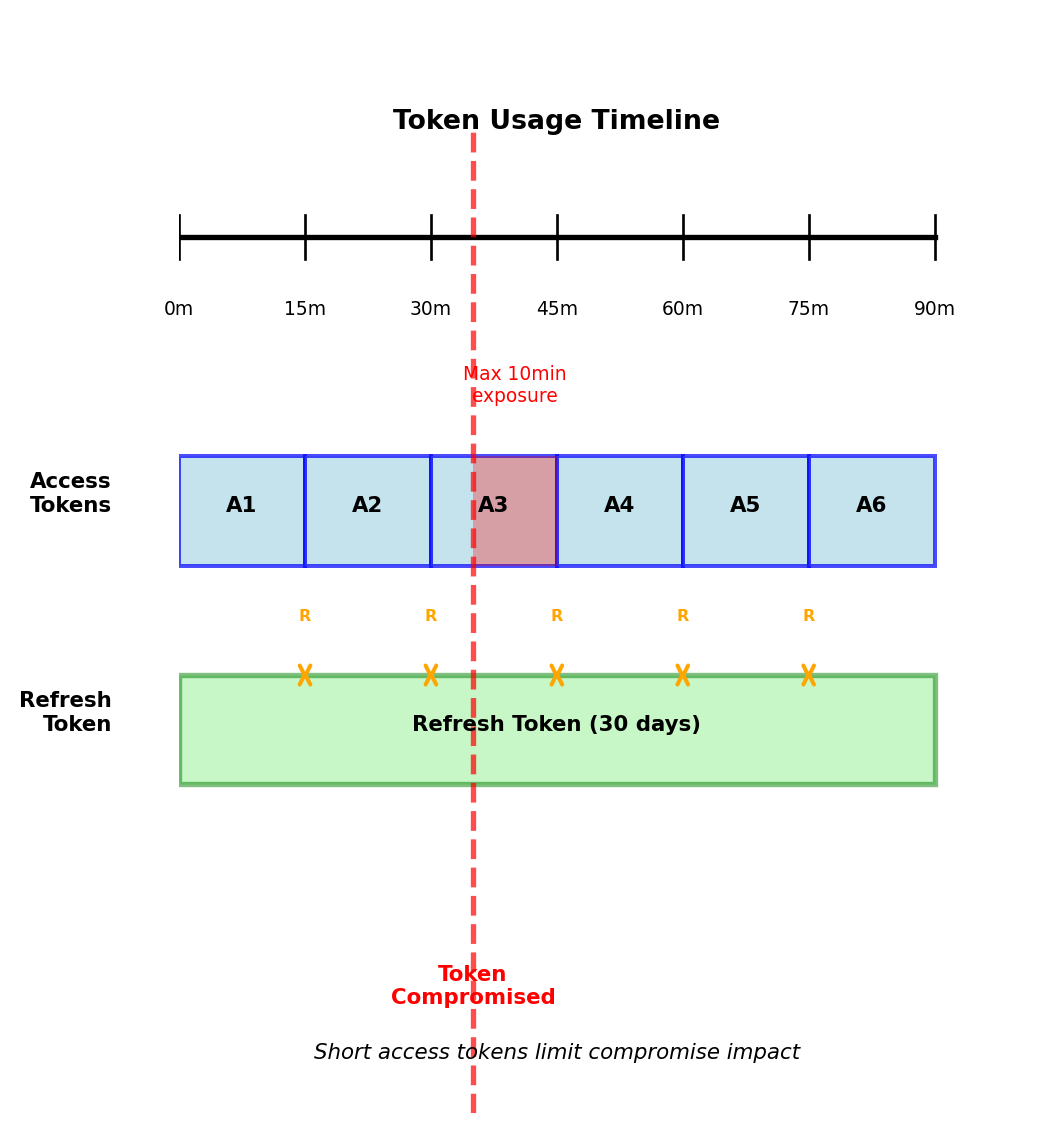

Refresh Tokens: Balancing Security and Usability

Short access tokens + long refresh tokens minimize risk

Dual token pattern:

    def login(email, password):
        if authenticate(email, password):
            # Short-lived for API calls
            access_token = create_jwt(
                user_id=123,
                expires_in=15*60  # 15 minutes
            )

            # Long-lived for obtaining new access tokens
            refresh_token = create_jwt(
                user_id=123,
                token_type="refresh",
                expires_in=30*24*60*60  # 30 days
            )

            # Store refresh token for revocation
            db.store_refresh_token(refresh_token)

            return {
                "access_token": access_token,
                "refresh_token": refresh_token,
                "expires_in": 900
            }

        return 401  # Invalid credentials

Token refresh flow:

    def refresh_access_token(refresh_token):
        # Validate refresh token (signature and expiry)
        payload = jwt.decode(refresh_token, secret_key, algorithms=["HS256"])

        # Reject access tokens presented as refresh tokens
        if payload.get("token_type") != "refresh":
            return 401

        # Check if revoked (requires DB check)
        if is_revoked(refresh_token):
            return 401  # Revoked

        # Issue new access token
        new_access = create_jwt(
            user_id=payload['user_id'],
            expires_in=15*60
        )

        return {"access_token": new_access}

Security boundaries:

- Compromise window: Maximum 15 minutes (access token lifetime)

- Refresh check: Database lookup only on refresh (every 15 min)

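- Revocation: Blacklisting the refresh token blocks new access tokens immediately; an already-issued access token still works until it expires (at most 15 minutes)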

- Performance: 1 DB check per 15 minutes vs every request

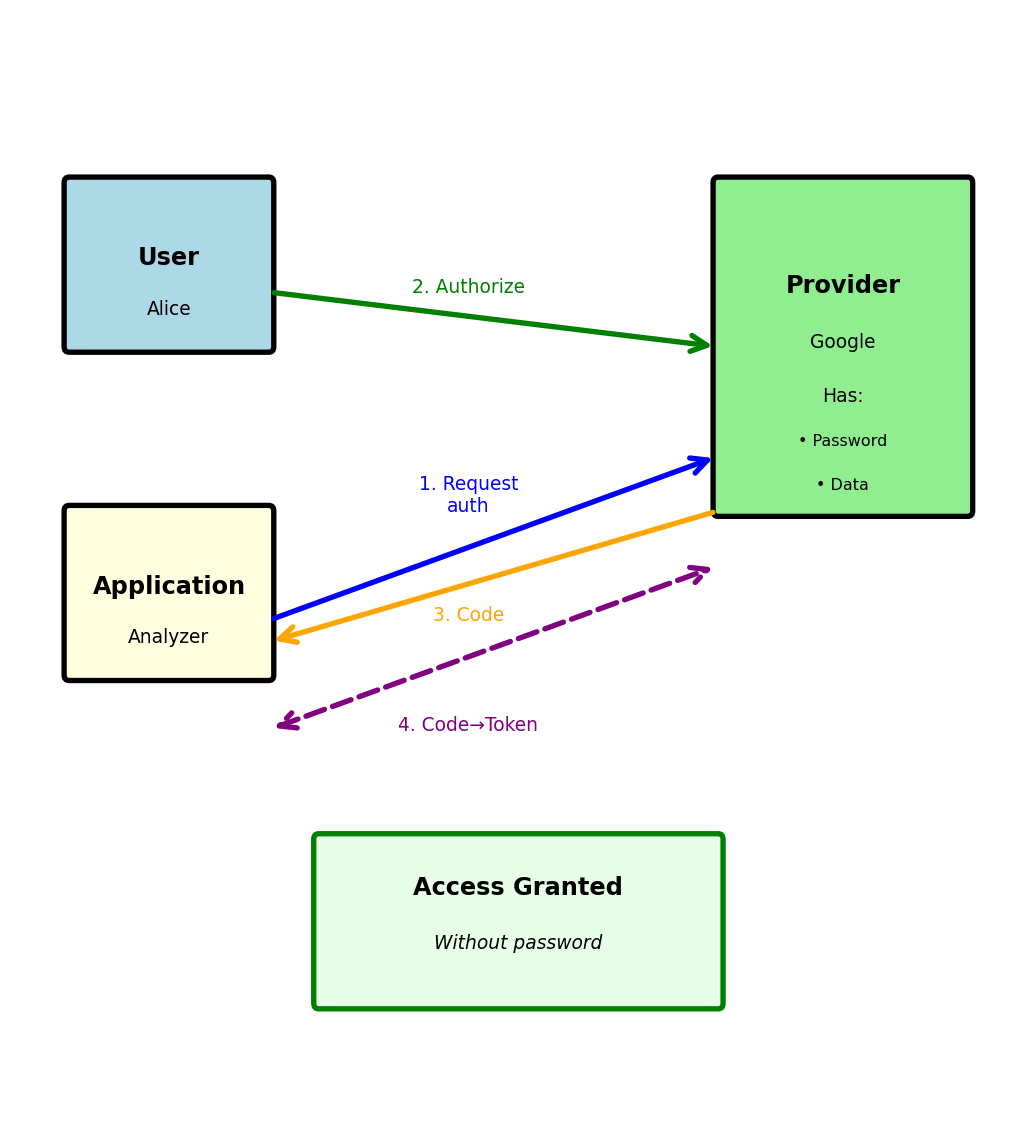

OAuth 2.0: Delegated Authorization

OAuth allows third-party access without sharing passwords

OAuth solves password sharing with third parties:

OAuth authorization flow:

Step 1: User authorizes at provider

    Browser → https://accounts.google.com/oauth/authorize?
        client_id=github-analyzer&
        redirect_uri=https://analyzer.com/callback&
        scope=drive.readonly&
        response_type=code

Step 2: Provider redirects with authorization code

    Browser ← https://analyzer.com/callback?code=abc123

Step 3: Exchange code for token (backend)

    import requests

    # Server-to-server, not visible to browser
    response = requests.post('https://oauth2.googleapis.com/token', data={
        'code': 'abc123',
        'client_id': 'github-analyzer',
        'client_secret': 'secret-key-xyz',  # Proves identity
        'redirect_uri': 'https://analyzer.com/callback',  # Must match Step 1
        'grant_type': 'authorization_code'
    })
    tokens = response.json()
    # {
    #   "access_token": "ya29.a0ARrdaM...",
    #   "token_type": "Bearer",
    #   "expires_in": 3600,
    #   "scope": "drive.readonly"
    # }

Key principles:

- User never gives password to third party

- Provider controls exactly what access is granted

- Access can be revoked without changing password
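Steps 1 and 2 of the flow can be sketched from the application's side. The endpoint, client ID, redirect URI, and scope are copied from the example above; a real provider's documented endpoint may differ. The `state` parameter is an addition not shown on the slide: OAuth 2.0 defines it so the callback can be tied to the request that started the flow, which guards against cross-site request forgery. The function names are hypothetical.

```python
import hmac
import secrets
from urllib.parse import parse_qs, urlencode, urlparse

# Endpoint as written in the example above (illustrative).
AUTHORIZE_ENDPOINT = "https://accounts.google.com/oauth/authorize"


def build_authorization_url(client_id: str, redirect_uri: str, scope: str):
    """Step 1: return (url, state). Keep state in the user's session."""
    state = secrets.token_urlsafe(16)  # unguessable per-request value
    query = urlencode({
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "scope": scope,
        "response_type": "code",
        "state": state,
    })
    return f"{AUTHORIZE_ENDPOINT}?{query}", state


def extract_code(callback_url: str, expected_state: str) -> str:
    """Step 2: pull the authorization code out of the redirect, checking state."""
    params = parse_qs(urlparse(callback_url).query)
    returned_state = params.get("state", [""])[0]
    if not hmac.compare_digest(returned_state.encode(), expected_state.encode()):
        raise ValueError("state mismatch: callback did not come from our request")
    if "code" not in params:
        raise ValueError("authorization code missing")
    return params["code"][0]


if __name__ == "__main__":
    url, state = build_authorization_url(
        "github-analyzer", "https://analyzer.com/callback", "drive.readonly"
    )
    print(url)
    print(extract_code(f"https://analyzer.com/callback?code=abc123&state={state}", state))
```

The code returned by `extract_code` is what the backend then exchanges for tokens in Step 3.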

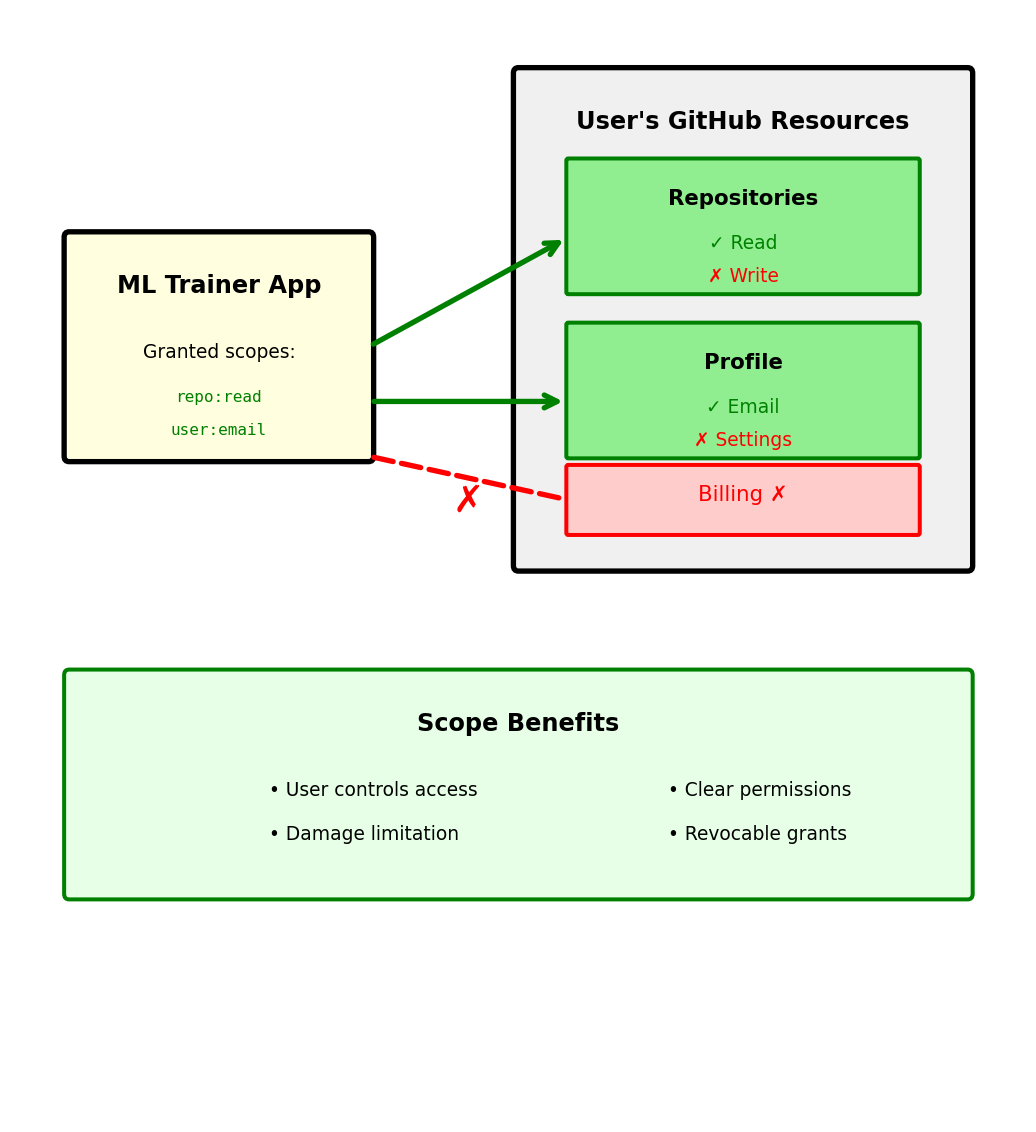

OAuth Scopes: Granular Permissions

Scopes limit what applications can access

Requesting specific permissions:

User sees requested permissions:

    ML Trainer App wants to access your GitHub account:

    ✓ Read access to repositories
      - View code, issues, pull requests
      - View repository metadata

    ✓ Read user email addresses
      - View primary email
      - View verified status

    ✗ Will NOT be able to:
      - Write to repositories
      - Delete anything
      - Access billing information

    [Authorize] [Deny]

Token contains granted scopes:

Common scope patterns:

| Provider | Scope | Permission |
|---|---|---|
| GitHub | repo | Full repository access |
| GitHub | repo:status | Only commit status |
| Google | drive.readonly | Read files only |
| Google | drive.file | Only files created by app |
| Slack | chat:write | Post messages |
| Slack | users:read | View user information |

Principle of least privilege: Request minimum necessary scope
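On the resource server, least privilege comes down to comparing the scopes a token was granted against the scopes an endpoint requires. This sketch assumes the granted scopes arrive as a space-delimited string in the `scope` field, as in the token response shown earlier; the function names and the 200/403 return convention are illustrative.

```python
def parse_scopes(scope_value: str) -> set:
    """Split a space-delimited scope string into a set of scope names."""
    return set(scope_value.split())


def missing_scopes(granted: str, required) -> set:
    """Return the required scopes that the token does not carry."""
    return set(required) - parse_scopes(granted)


def authorize_request(token_response: dict, required) -> int:
    """Return 200 if every required scope was granted, otherwise 403."""
    missing = missing_scopes(token_response.get("scope", ""), required)
    return 403 if missing else 200


if __name__ == "__main__":
    tokens = {"access_token": "ya29.a0ARrdaM...", "scope": "drive.readonly"}
    print(authorize_request(tokens, ["drive.readonly"]))
    print(authorize_request(tokens, ["drive.file"]))
```

The comparison is an exact match. Whether a broad scope such as `repo` implies a narrower one such as `repo:status` is provider-specific and is not modeled here.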

OAuth Grant Types: Different Flows for Different Needs

OAuth defines multiple flows for different scenarios

1. Authorization Code (web apps with backend)

2. Client Credentials (service-to-service)